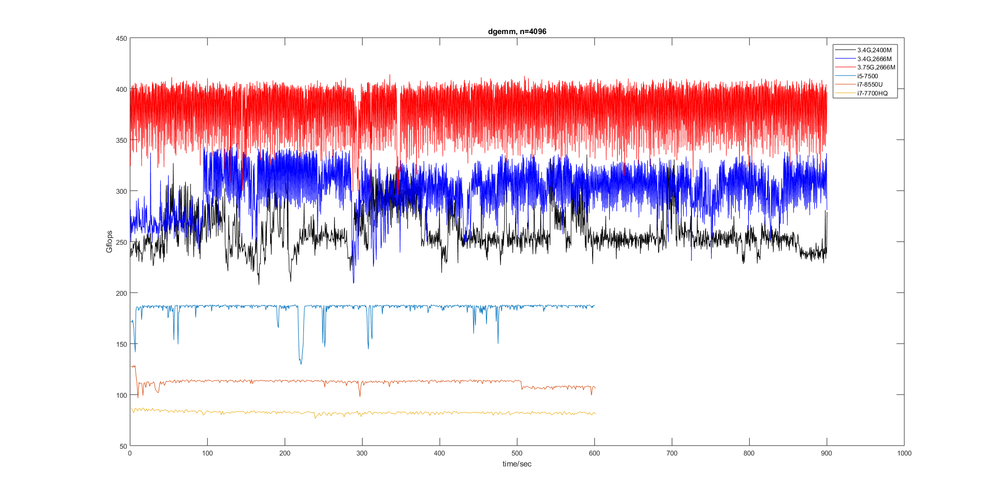

The figure shows floating-point performance over time. The x axis is time and the y axis is GFLOPS, computed as 2*n^3 / elapsed time for the BLAS function dgemm(). I have tested several processors. The performance of the TR 1950X (the three upper curves) fluctuates noticeably, while the others (the other three curves) are relatively stable. What is the reason?
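
For reference, here is a minimal sketch of how a y value like this can be computed, assuming square n x n matrices and wall-clock timing. The helper names `dgemm_gflops` and `time_call` are made up for illustration and are not part of OpenBLAS; `fn` stands for whatever call actually runs dgemm().

```python
import time

def dgemm_gflops(n, seconds):
    """GFLOPS for one n x n x n matrix multiply: 2*n^3 flops / elapsed time."""
    return 2.0 * n**3 / seconds / 1e9

def time_call(fn, repeats=5):
    """Run fn() several times and return the wall-clock time of each run in seconds."""
    times = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()  # assumption: fn wraps a single dgemm() call
        times.append(time.perf_counter() - start)
    return times

# Example: a 4000 x 4000 multiply that takes 0.5 s
print(dgemm_gflops(4000, 0.5))  # 256.0
```

Plotting `dgemm_gflops(n, t)` for each `t` returned by `time_call` gives one point per run, which is roughly what each curve in the figure represents.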

Another question. The peak of the TR 1950X @ 3.75 GHz is 480 GFLOPS (AIDA64): 3.75 GHz * 16 cores * 8 ops = 480 GFLOPS. My best result in dgemm() is 380 GFLOPS (average) and 414 GFLOPS (max). Ryzen can only do one 256-bit FMA per cycle (throughput), which is only half of Intel's solution. Why? Cost, power consumption, or heat?
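
To make the arithmetic explicit, here is a small sketch using only the numbers from this post. The 8 ops per cycle are taken as one 256-bit FMA, i.e. 4 doubles times 2 operations (multiply and add); the function names are hypothetical.

```python
def peak_gflops(clock_ghz, cores, flops_per_cycle_per_core):
    """Theoretical peak: clock (GHz) * cores * flops per cycle per core."""
    return clock_ghz * cores * flops_per_cycle_per_core

def efficiency(measured_gflops, peak):
    """Fraction of the theoretical peak that was actually reached."""
    return measured_gflops / peak

# One 256-bit FMA per cycle = 4 doubles * 2 ops = 8 flops per cycle per core
peak = peak_gflops(3.75, 16, 8)
print(peak)  # 480.0

for label, measured in (("average", 380.0), ("max", 414.0)):
    print(f"{label}: {efficiency(measured, peak):.1%} of peak")
```

So the measured dgemm() results are roughly 79% (average) and 86% (max) of the theoretical peak.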

Windows 10. OpenBLAS v0.2.19, OPENBLAS_CORETYPE=EXCAVATOR.