Hey there.

Yesterday I replaced my old Dell U2413 monitor with a new U3417W, which should also be 10-bit capable (8-bit + FRC).

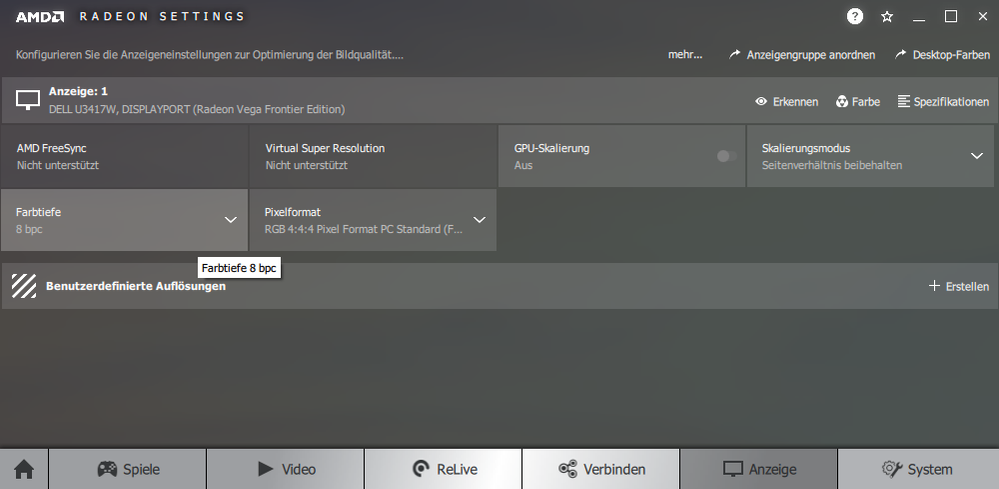

I had no problems getting 10-bit output with the old one, but with the new one there is suddenly no "10-bit color" option in the Radeon settings anymore, just 8-bit and 6-bit. Both monitors are/were connected using a DP-to-Mini-DP cable. Is 10-bit output somehow dependent on the resolution, and simply not supported at resolutions this high? That was suggested over on the Dell forums. Or am I doing something wrong?
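For reference, here is a rough back-of-the-envelope check of whether link bandwidth could be what rules out 10-bit at this resolution. It's only a minimal sketch under assumptions that are not from my setup info: a 60 Hz refresh rate, a ~10% blanking overhead (a rough stand-in for the real display timings), and a 4-lane DisplayPort 1.2 HBR2 link (5.4 Gbit/s per lane, 8b/10b coding). The function name `required_gbps` is just made up for illustration.

```python
# Rough check: does 3440x1440 RGB at a given bit depth fit in a DisplayPort 1.2 link?
# Assumptions (hypothetical, not measured): 60 Hz refresh, ~10% blanking overhead,
# and a 4-lane HBR2 link (5.4 Gbit/s raw per lane, 8b/10b coding -> 80% payload).

def required_gbps(width, height, refresh_hz, bits_per_channel, blanking_overhead=0.10):
    """Approximate video data rate in Gbit/s for RGB output (3 channels per pixel)."""
    pixel_rate = width * height * refresh_hz * (1 + blanking_overhead)
    return pixel_rate * bits_per_channel * 3 / 1e9

# 4 lanes x 5.4 Gbit/s raw, times 0.8 to account for 8b/10b encoding
DP12_HBR2_PAYLOAD_GBPS = 4 * 5.4 * 0.8

for bpc in (6, 8, 10):
    need = required_gbps(3440, 1440, 60, bpc)
    verdict = "fits" if need <= DP12_HBR2_PAYLOAD_GBPS else "does not fit"
    print(f"{bpc}-bit: ~{need:.2f} Gbit/s needed, "
          f"{DP12_HBR2_PAYLOAD_GBPS:.2f} Gbit/s available -> {verdict}")
```

Under these assumptions even the 10-bit case (roughly 9.8 Gbit/s) stays well below the ~17.3 Gbit/s payload, so resolution alone may not be the explanation, although the monitor's actual timings and link mode could differ from what I assumed here.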

System specs: Vega Frontier Edition w/ gaming drivers 18.2.3, Dell U3417W @ 3440x1440, monitor driver installed.

Thanks in advance for any help & advice.

Greetings, Malte