Maya Discussions

Error: Radeon ProRender: Invalid parameter - Unable to set Adaptive Subdivision!

Hello,

Adaptive subdivision has to be disconnected and reconnected (in Hypershade) every time the viewport panel is set to Radeon ProRender, and adaptive subdivision doesn't seem to work in production or batch renders.

Error: Radeon ProRender: Invalid parameter - Unable to set Adaptive Subdivision!

I also noticed that adaptive subdivision has to be readjusted whenever the camera FOV changes. That isn't correct; it should be based on pixels.
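To illustrate what a pixel-based rate would mean, here is a minimal sketch of a screen-space edge-length metric. It assumes a pinhole camera with a vertical FOV and an edge facing the camera at a given distance; the function names and the `target_px` and `max_level` parameters are hypothetical and are not Radeon ProRender's API or its actual subdivision logic. Each subdivision level halves edge length, so the level is the number of halvings needed to bring the projected edge under the pixel target.

```python
import math

def projected_edge_pixels(edge_len, distance, vfov_deg, image_height_px):
    """Approximate on-screen length in pixels of an edge facing the camera."""
    half_fov = math.radians(vfov_deg) / 2.0
    # World-space height covered by the frame at this distance.
    visible_height = 2.0 * distance * math.tan(half_fov)
    return edge_len * image_height_px / visible_height

def subdivision_levels(edge_len, distance, vfov_deg, image_height_px,
                       target_px=1.0, max_level=16):
    """Levels needed (each halves the edge) to reach <= target_px on screen."""
    px = projected_edge_pixels(edge_len, distance, vfov_deg, image_height_px)
    if px <= target_px:
        return 0
    return min(max_level, math.ceil(math.log2(px / target_px)))
```

With this kind of metric, narrowing the FOV makes the same edge cover more pixels, so the level rises automatically while the user-facing setting (`target_px`) stays the same.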

This was in Maya 2019; I'm not sure whether the problem exists in earlier versions of Maya.

Thank you,

409

Hi Fourzeronin,

I can confirm there is an issue with adaptive subdivision.

I went ahead and sent the information you provided to developers.

This scene also has issues with multi-GPU and GPU+CPU rendering. Half of the image doesn't resolve properly with multiple GPUs, and there are more issues, including mesh visibility problems, when the CPU is also enabled.

I have attached a newer version of the scene. I am also observing that Uber material emission doesn't render properly unless there is a light source in the scene. In this case I just created an RPRSky node and set its intensity to 0.

Hi Fourzeronin,

I was able to confirm the emissive issue; it seems to happen with PBR and Uber materials. RPR Material emissive seems to work OK.

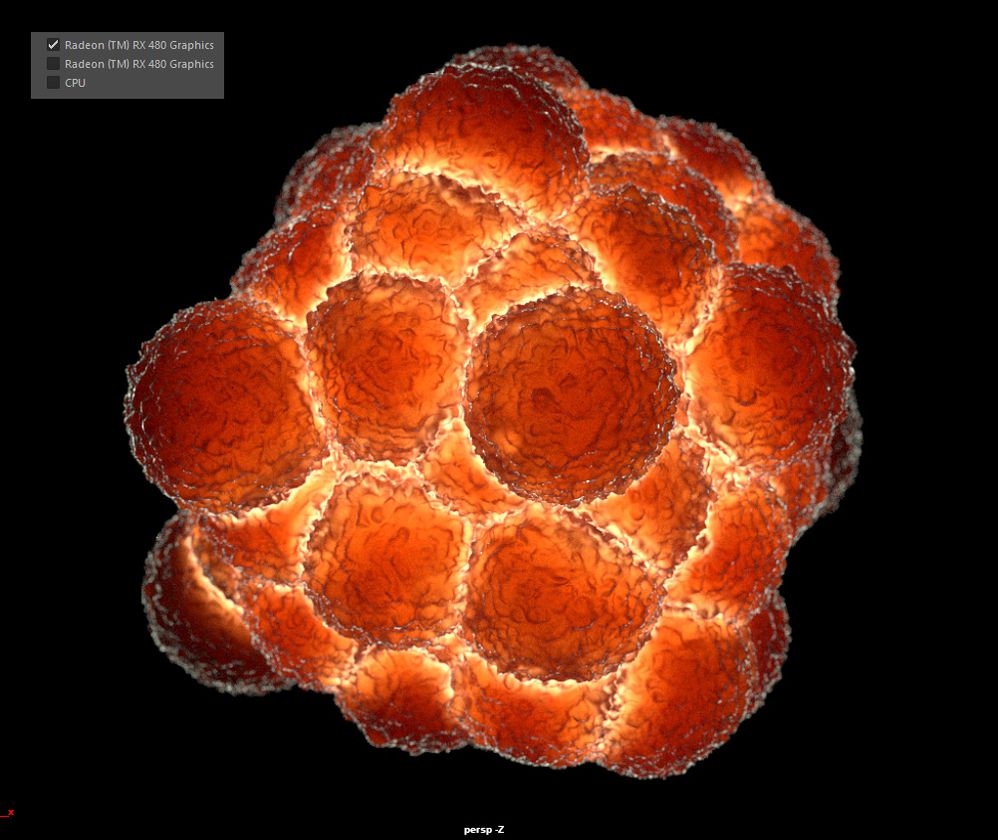

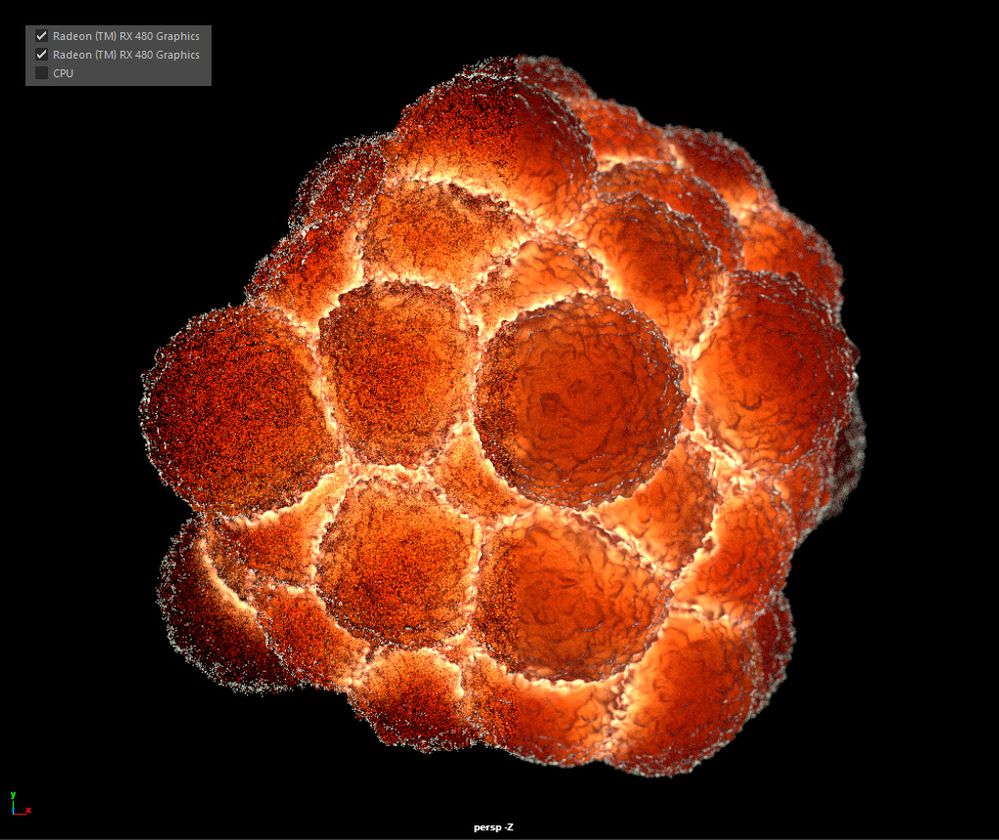

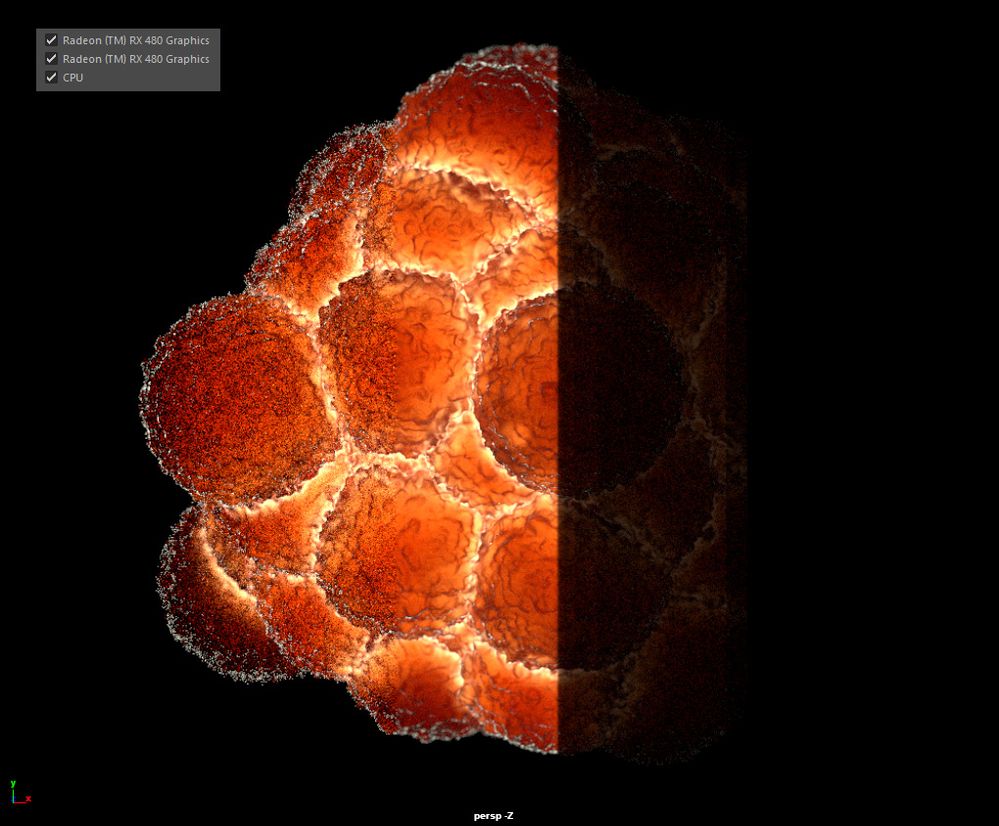

Could you provide images showing the results you got with the GPU+CPU and multi-GPU issues? Does it look like a line running down the middle of the image?

Sorry about the delay. Here are the images you requested.

Hi Fourzeronin,

No worries about the delay. This is actually a known issue: the CPU renders the right side of the image and tends to fall behind the left side, which is rendered on the GPU.

Hi David,

Thank you for the reply.

The second image uses no CPU, just two identical GPUs (same make, model, brand, clock speed, etc.). The issue is that the left side of the image NEVER resolves further than what you see there.

The same applies to the third image (2 GPUs + 1 CPU), except that the CPU seems to render black while the second GPU still appears to render an image that never clears up.

A suggestion you could pass to the developers: add an option for every GPU and CPU in the system to contribute to the entire image, with the samples combined. I'm sure it's more complex than this, but I believe it requires the rays on each device to be generated from a different seed value so that the devices aren't casting exactly the same rays on top of one another.
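The combined-sampling idea can be sketched as follows. This is a minimal illustration under stated assumptions, not how Radeon ProRender actually schedules devices: each hypothetical device renders the whole image with its own RNG seed and reports per-pixel sample sums and counts, and the host merges them by adding sums and counts before dividing. The integrand (`u * u`, exact mean 1/3) is a made-up stand-in for pixel radiance, and all function names are hypothetical.

```python
import random

def integrand(pixel, u):
    """Hypothetical stand-in for pixel radiance: f(u) = u*u, mean 1/3.

    The pixel index is unused here; a real renderer would trace a ray.
    """
    return u * u

def render_device(width, samples_per_pixel, seed):
    """Render the whole image on one device; return per-pixel (sums, counts).

    Each device owns a private RNG seeded with its own value, so devices
    with different seeds draw different sample positions.
    """
    rng = random.Random(seed)
    sums = [0.0] * width
    for px in range(width):
        for _ in range(samples_per_pixel):
            sums[px] += integrand(px, rng.random())
    counts = [samples_per_pixel] * width
    return sums, counts

def combine(results):
    """Merge device accumulators: add sums and counts, then divide."""
    width = len(results[0][0])
    total_sum = [0.0] * width
    total_cnt = [0] * width
    for sums, counts in results:
        for px in range(width):
            total_sum[px] += sums[px]
            total_cnt[px] += counts[px]
    return [s / c for s, c in zip(total_sum, total_cnt)]

# Example: a fast GPU and a slower CPU, each covering the full frame.
gpu = render_device(width=4, samples_per_pixel=300, seed=1)
cpu = render_device(width=4, samples_per_pixel=50, seed=2)
image = combine([gpu, cpu])
```

Because the merge divides by the total sample count, a slower device simply contributes fewer samples to every pixel instead of leaving its own region of the frame unresolved. Giving two devices the same seed reproduces identical samples, so the combined estimate is no better than a single device's.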

Hi Fourzeronin,

I will pass this information to the developers.