General Discussions

WCCFTech: RX 5700, 5700XT >60FPS average in Cryengine Neon Noir ray tracing demo at 1920x1080

A link to the download is included in the article. Considering the Vega 64 averages 58FPS at 1920x1080, it's pretty telling that only minimal ray tracing, if any, will be feasible on RX 500 and earlier series cards from AMD, which is to be expected given the computational demand of ray tracing. It's a 4.35GB download, so with my limited data cap I can't download it, but it would be interesting to see how older cards, like the Fury series, perform, though I still expect all pre-RX hardware to be dropped to legacy support very soon.

https://www.wccftech.com/neon-noir-cryengine-hardware-api-agnostic-ray-tracing-demo-tested-and-available-now/

no 4K testing?

No, WCCFTech did not do 4K testing in the article, which is why none was posted. Perhaps they thought, like most rational people, that with the unplayable frame rates shown by the 5700XT at 2560x1440, as well as the barely playable frame rates shown by the RTX 2080 Super despite having Tensor cores, it was pointless and a waste of time to run them again at 3840x2160.

I have a 4K IPS panel and I play games at 3840x2160 if playable, but I can dial down the resolution when games are too sluggish.

So far, very few games cannot be played at 4K.

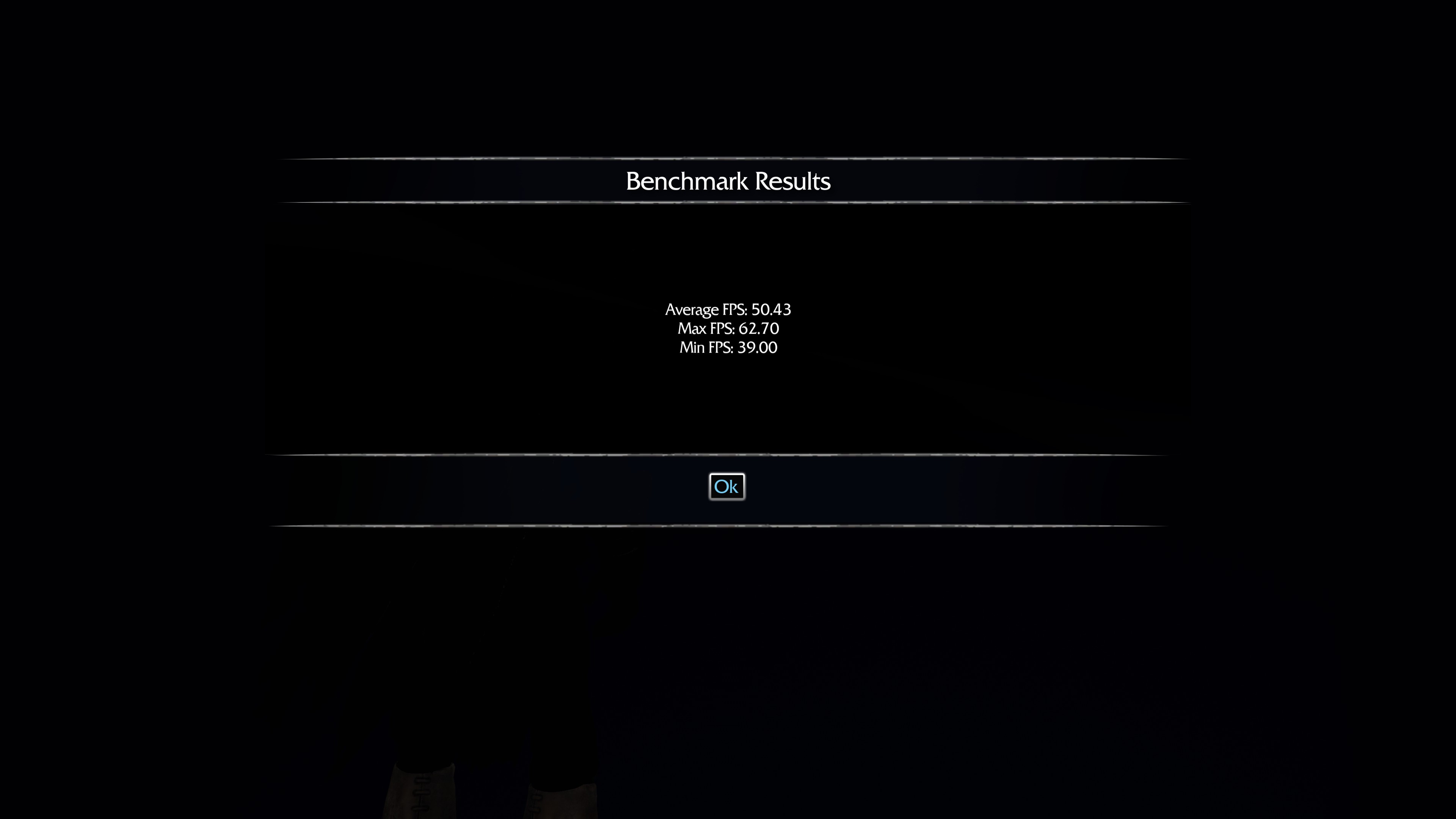

Benchmark Scores (R5 1600 @ 3.5GHz / DDR4 16GB 2667MHz / Radeon RX 5700 XT Reference)

1080p / Min: 53 / Max: 109 / Mean: 81

1440p / Min: 33 / Max: 69 / Mean: 50

2160p / Min: 11 / Max: 33 / Mean: 22

This is using the Radeon Performance Metrics, as opposed to the Crytek … mainly because I can actually log the results without recording the screen and manually writing down all the numbers.

Now this Engine is arguably pretty Smooth, so Min/Max/Mean is actually a decent enough representation; but for those who might feel it isn't "Good Enough", below are the Standard Deviation Results.

For those curious: Data Sets are 375 Data Points per Run (and I did 3 Runs Per Resolution on "Ultra" Ray-Tracing)

As such the results are as follows:

1080p

Min: 53 (Base)

Max: 109 (Peak)

Range: 56 (Frame Range)

Median: 81 (Middle Framerate)

Mean: 81.349 (Avg.)

Mode: 80 (Common)

Standard Deviation: 12.778 (Frame Jitter)

(Values in FPS)

This means the typical range (Mean ± one Standard Deviation) is 68.57 (Min) to 94.13 (Max)

1440p

Min: 33 (Base)

Max: 69 (Peak)

Range: 36 (Frame Range)

Median: 48 (Middle Framerate)

Mean: 49.762 (Avg.)

Mode: 48 (Common)

Standard Deviation: 8.485 (Frame Jitter)

(Values in FPS)

This means the typical range (Mean ± one Standard Deviation) is 41.28 (Min) to 58.25 (Max)

2160p

Min: 11 (Base)

Max: 33 (Peak)

Range: 22 (Frame Range)

Median: 23 (Middle Framerate)

Mean: 22.448 (Avg.)

Mode: 23 (Common)

Standard Deviation: 5.353 (Frame Jitter)

(Values in FPS)

This means the typical range (Mean ± one Standard Deviation) is 17.10 (Min) to 27.80 (Max)
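
For anyone wanting to reproduce these figures from their own logged frame rates, here is a minimal Python sketch. It assumes the log has already been reduced to a plain list of FPS samples. The function names `summarize`, `one_sigma_band` and `percentile_low`, and the choice of the sample (n - 1) standard deviation, are my assumptions and not something the Radeon tools are known to do. The low/high pair quoted after each resolution above lines up with the Mean minus and plus one Standard Deviation, so `one_sigma_band` reports that band, while `percentile_low` shows one way (nearest-rank) to get an actual percentile figure such as a 1% low.

```python
import math
import statistics


def one_sigma_band(mean_fps, stdev_fps):
    """Return (mean - 1 SD, mean + 1 SD) in FPS."""
    return mean_fps - stdev_fps, mean_fps + stdev_fps


def summarize(fps_samples):
    """Compute the summary figures listed in the post for one data set."""
    mean = statistics.mean(fps_samples)
    # Assumption: sample standard deviation (n - 1); the post does not say
    # whether the sample or population formula was used.
    stdev = statistics.stdev(fps_samples)
    low, high = one_sigma_band(mean, stdev)
    return {
        "min": min(fps_samples),
        "max": max(fps_samples),
        "range": max(fps_samples) - min(fps_samples),
        "median": statistics.median(fps_samples),
        "mean": mean,
        "mode": statistics.mode(fps_samples),
        "stdev": stdev,
        "band_low": low,
        "band_high": high,
    }


def percentile_low(fps_samples, pct=1):
    """Nearest-rank percentile: the FPS that pct percent of samples are at or below."""
    ordered = sorted(fps_samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


if __name__ == "__main__":
    # Placeholder samples for illustration only, not data from the runs above.
    demo = [50, 60, 60, 70, 80]
    print(summarize(demo))
    print(percentile_low(demo, pct=1))
```

Pooling the three runs per resolution into one list before calling `summarize` is one option; summarizing each run separately and comparing them is another.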

Now these figures actually showcase what I mentioned initially about the Min/Max/Mean being enough to really give a good showcase of the Engine Performance.

If we were, for example, looking at the GTA V or RDR2 Engine, those figures wouldn't showcase each Avg. as being as close as they are... actually that's a showcase of an impressively stable Engine, at least Graphically; who knows what happens when they add in things like AI, Networking, etc.

And this isn't just impressive for Crytek who tend to release Engines that have numerous bottlenecks and crush even High-End Hardware most dream of having... this is just impressive in general, as you wouldn't expect such a smooth Frame Output from most Engines today.
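
One rough way to put a number on "smooth" that can be compared across resolutions (or engines) is the coefficient of variation, i.e. Standard Deviation divided by Mean. The sketch below uses only the Mean and Standard Deviation figures posted above; the metric itself is my suggestion, not something used in the original benchmarks, and a lower ratio simply means the frame rate stays closer to its average.

```python
# Mean and Standard Deviation (FPS) as posted above for each resolution.
posted = {
    "1080p": (81.349, 12.778),
    "1440p": (49.762, 8.485),
    "2160p": (22.448, 5.353),
}


def coefficient_of_variation(mean_fps, stdev_fps):
    """Standard deviation expressed as a fraction of the mean."""
    return stdev_fps / mean_fps


cv = {res: coefficient_of_variation(m, s) for res, (m, s) in posted.items()}

if __name__ == "__main__":
    for res, value in cv.items():
        print(f"{res}: {value:.1%}")
```

The same function could be fed the Mean and Standard Deviation from any other engine's run to compare like with like.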

Some Benchmark Results for Unreal Engine 4 (Fortnite / Jedi), Snowdrop (The Division 2), Playground (Forza Horizon 4), Frostbite 2 (Battlefield V, Battlefront 2) and Crystal (Shadow of the Tomb Raider) ... just to help give some more real-world figures that showcase what I mean.

I downloaded and ran the demos on a Ryzen 2700X with an RX Vega 64 Liquid. Adrenalin 2019 19.12.1 GUI, FRTC = 59, Adrenalin 2020 20.2.2 drivers installed using Device Manager.

Note I was recording video using ReLive at the benchmark resolution and I had the Radeon Performance overlay running, both of which affect performance.

I can run the performance metrics later, but the videos are here:

CRYENGINE Neon Noir Raytracing Demo on AMD RX Vega 64 Liquid at 1080p Ultra - YouTube

CRYENGINE Neon Noir Raytracing Demo on AMD RX Vega 64 Liquid at 2K Ultra - YouTube

CRYENGINE Neon Noir Raytracing Demo on AMD RX Vega 64 Liquid at 4K Ultra - YouTube

I have run Neon Noir on a Palit RTX2080 OC on the same Ryzen 2700X machine for comparison to RX Vega 64 Liquid.

The videos are still processing.

I will post them later.

I am running on a single R9 Fury X with an Intel i7 4790K next just to see if it will work at all.

Here are the links to videos of the CRYENGINE Neon Noir Demo running on a Palit RTX 2080 OC, which should have similar performance to an RTX 2070 Super.

CRYENGINE Neon Noir Raytracing Demo on Nvidia Palit RTX2080 OC at 1080p Ultra - YouTube

CRYENGINE Neon Noir Raytracing Demo on Nvidia Palit RTX2080 OC at 2K Ultra. - YouTube

CRYENGINE Neon Noir Raytracing Demo on Nvidia Palit RTX2080 OC at 4K Ultra. - YouTube

Single R9 Fury X with an Intel i7 4790K results:

CRYENGINE Neon Noir Raytracing Demo on AMD Sapphire R9 Fury X at 1080p Very High - YouTube

CRYENGINE Neon Noir Raytracing Demo on AMD Sapphire R9 Fury X at 1080p Ultra - YouTube

CRYENGINE Neon Noir Raytracing Demo on AMD Sapphire R9 Fury X at 2K Very High - YouTube

CRYENGINE Neon Noir Raytracing Demo on AMD Sapphire R9 Fury X at 2K Ultra - YouTube

colesdav wrote:

Single R9 Fury X with an Intel i7 4790K results:

CRYENGINE Neon Noir Raytracing Demo on AMD Sapphire R9 Fury X at 1080p Very High - YouTube

CRYENGINE Neon Noir Raytracing Demo on AMD Sapphire R9 Fury X at 1080p Ultra - YouTube

CRYENGINE Neon Noir Raytracing Demo on AMD Sapphire R9 Fury X at 2K Very High - YouTube

CRYENGINE Neon Noir Raytracing Demo on AMD Sapphire R9 Fury X at 2K Ultra - YouTube

So the R9 Fury can handle 1080p raytracing

Yes.

The Fury X is running at PCIe3.0x8 on an Intel i7-4790K - an old Haswell PC I use for MultiGPU Rendering.

Running on a more modern processor with higher clock speed or IPC should boost the performance.

I don't have time to do that though. So I just ran it on a machine with a primary R9 Fury X connected.

Since the R9 Fury X supports FreeSync on DisplayPort, anything running over 40 FPS (the low end of the FreeSync range on many monitors) should be enough to give a reasonably O.K. experience. Given that, a faster processor might allow the R9 Fury X to run at 2K Very High at least.

Note the demo is DX11 not DX12.

I tried running the benchmark with DX11 Crossfire on a pair of R9 Fury X's in Adrenalin 2019 19.12.1.

I managed to get one Crossfire setting that looked like it was giving some positive scaling but there was bad flicker.

So it is likely that AMD could work on it to produce a working CrossFire profile.

Nvidia cards can run the Demo in SLI/NVLink.

Crytek are working on a Vulkan implementation though, so perhaps when that is ready AMD cards could perform much better.

Interesting that the $80 R9 Fury still has some potential left in it.

I tried Halo on an EVGA GTX 1060 at 3840x2160 and I was shocked to be able to play the game fine. The R9 Fury offers double the performance, so I am expecting to get a lot of gaming out of the card.

Too bad the R9 Fury did not have an 8GB model, AMD probably could have sold a lot of them.

The memory bandwidth of the HBM on the R9 Fury X can get the required data onto the GPU quickly.

I have tested many games with large texture packs on the R9 Fury X, such as the Middle-earth: Shadow of Mordor HD Texture pack, which calls for 6GB of VRAM, and it runs fine.

Middle-earth: Shadow of Mordor - HD Content on Steam

The real pity is AMD made changes to their drivers which locked out HBM overclocking on R9 Fury X, R9 Fury and R9 Nano using MSI Afterburner and Sapphire Trixx shortly before the release of the RX Vega 56 and 64 GPUs.

The HBM2 on the Vega GPUs has lower memory bandwidth than the HBM on the R9 Fury X.

I wish that AMD would unlock HBM overclocking for the cards again using Sapphire Trixx and MSI Afterburner.

Increasing HBM frequency definitely improves performance on some games I tested and on 3DMark scores.
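
To show where the bandwidth comparison comes from, and why raising the HBM clock helps, here is a small sketch of the usual peak-bandwidth arithmetic: bus width in bytes times the effective transfer rate. The bus widths and memory clocks below are the commonly published reference specifications (4096-bit at 500 MHz for the R9 Fury X, 2048-bit at 945 MHz for the RX Vega 64), not figures measured in this thread, and the 545 MHz overclock is purely a hypothetical value for illustration.

```python
def hbm_bandwidth_gbs(bus_width_bits, memory_clock_mhz, transfers_per_clock=2):
    """Peak bandwidth in GB/s: bus width in bytes x effective transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    # HBM and HBM2 are double data rate, hence two transfers per clock.
    transfers_per_second = memory_clock_mhz * 1e6 * transfers_per_clock
    return bytes_per_transfer * transfers_per_second / 1e9


# Commonly published reference specs (assumed, not taken from this thread).
fury_x = hbm_bandwidth_gbs(4096, 500)
vega_64 = hbm_bandwidth_gbs(2048, 945)

# Hypothetical HBM overclock on the Fury X, for illustration only.
fury_x_oc = hbm_bandwidth_gbs(4096, 545)

if __name__ == "__main__":
    print(f"R9 Fury X:   {fury_x:.2f} GB/s")
    print(f"RX Vega 64:  {vega_64:.2f} GB/s")
    print(f"Fury X @545: {fury_x_oc:.2f} GB/s")
```

Because peak bandwidth scales linearly with the memory clock in this formula, any HBM overclock translates directly into proportionally more theoretical bandwidth.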

This is on the RX 480 8GB card: Medium settings at 3840x2160 and very playable. I am hoping that the R9 Fury can bump the game up to maybe High graphics.

It will be interesting to see but don't expect miracles.

The R9 Fury X is falling behind in performance in some titles like Forza Horizon 4.

See: https://community.amd.com/message/2884079?commentID=2884079#comment-2884079

Also: Forza Horizon 4 Benchmarked - TechSpot

The game was developed targeting the Xbox One X, which uses an underclocked RX 580 based design.

Perhaps the port from Console to PC runs particularly well on Polaris GPUs.

It could also be that no driver optimization is done for any AMD GPU older than Polaris.

Maybe because Polaris is the most popular AMD GPU, it gets most of the attention from the drivers team.

Some opinions on the R9 Fury seem warranted:

- It trades blows with a $450 GTX 1070 in DX11 games

- It nearly matches a GTX 1080 in DX12/Vulkan games

- It costs 10% more than an RX 480, while performing 30% better.
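
Taking the last bullet at face value, the value-for-money claim can be checked with simple arithmetic. The sketch below only uses the 10% and 30% figures quoted above; the function name `relative_value` is just for illustration.

```python
def relative_value(perf_gain, price_premium):
    """Performance per dollar relative to a baseline card (baseline = 1.0)."""
    return (1 + perf_gain) / (1 + price_premium)


# 30% more performance for 10% more money, as stated in the bullet above.
ratio = relative_value(0.30, 0.10)

if __name__ == "__main__":
    print(f"{ratio:.2f}x the performance per dollar of the baseline")
```

A ratio above 1.0 means the more expensive card still delivers more performance per unit of money than the baseline.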
