The State of Boosting Clocks AMD Ryzen 3000 series.

Hello, I posted this data in a few different threads but I thought I would consolidate my testing results here. I would preface this by saying, I have never hit the advertised "4.6GHz boost clock" on a single core load. The closest I have ever come was on UEFI 2501 based on AGESA 1.0.0.2. All of the AGESA 1.0.0.3XX releases appear to have modified the boost behavior and reduced the clocks.

This testing was performed on an ASUS Crosshair VII X470, UEFI 2703, Ryzen 9 3900X, Corsair Dominator 32GB (4X8GB) at 3600MHz CL16, Windows 10 1903. On to the observations.

1. PBO appears to do nothing. If I enable it in any fashion there is no tangible effect on core performance. All it seems to do is raise voltage, but not apply that voltage to doing any additional work.

2. The precision boost algorithm doesn't take into account differing CCD quality. The power budget is wasted by boosting less efficient cores. This will likely be less of an issue on the single CCD models. Below you will find the data by which I came to these two conclusions.

Figure 1: CCX Overclock using Ryzen Master.

CCX0 is at 4.5 GHz, CCX1 is at 4.4 GHz for CCD0.

CCX0 and CCX1 are at 4.1 GHz for CCD1. Voltage is at 1.3V.

The downside to any manual overclock is that you cannot apply additional voltage on a lightly threaded, low current workload. Here are my Cinebench scores with this setting.

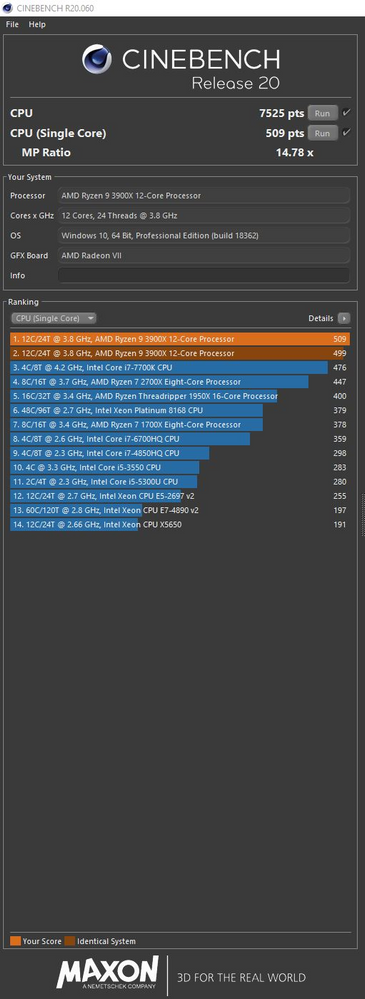

Figure 2: Cinebench Result CCX Overclocking (1.3V)

Now, here are my numbers again if I use PB and XFR with PBO enabled.

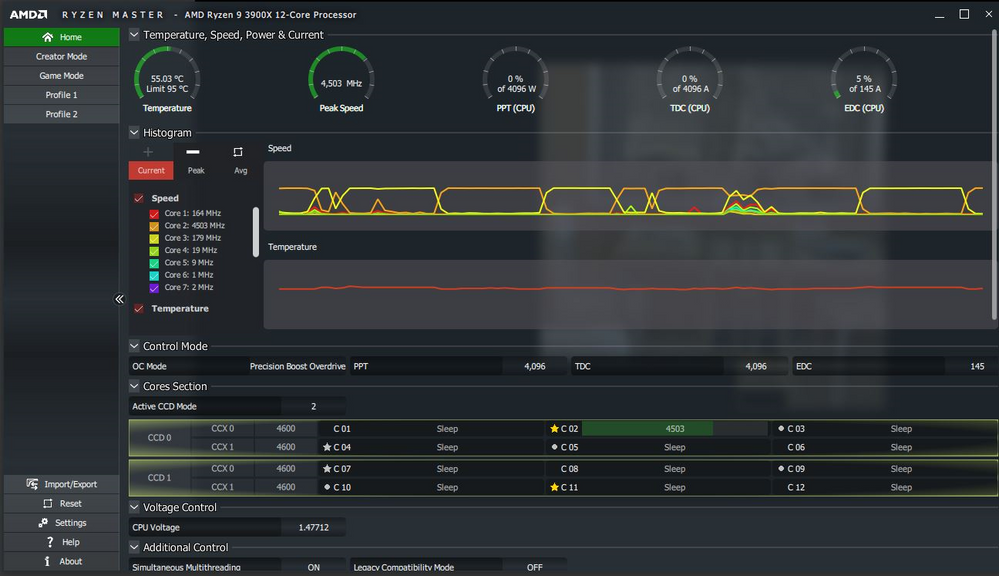

Figure 3: Ryzen Master, Precision Boost, Extended Frequency Range, Precision Boost Overdrive Enabled.

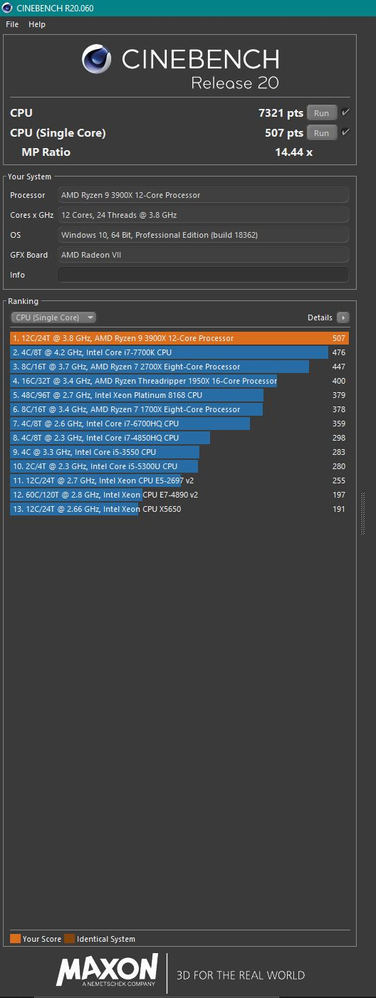

Figure 4: Cinebench Results, using PB, XFR with PBO Enabled.

Discussion: What is immediately striking to me about these results is the single thread score. When PB and XFR are applied, the single core voltage is boosted to 1.47V, an increase of 170 mV over my manual setting. The resulting performance and clock speed, however, are exactly the same. That extra voltage doesn't appear to be used for anything.

Figure 5: Comparison PB, XFR vs Ryzen Master CCX Overclock.

| Overclocking | CB20 (single) | Voltage (V) | CB20 (multi) | Voltage (V) |
| --- | --- | --- | --- | --- |
| PB, XFR | 507 | 1.48 | 7321 | 1.3 |
| Manual (CCX) | 509 | 1.3 | 7525 | 1.3 |
| % Difference | 0.4% | -12.2% | 2.8% | 0.0% |

As we see in the table, a 12% drop in voltage nets the same performance. This leads me to conclude that PB is somehow broken on the 3000 series. When the number of cores being used declines, PB should add additional voltage and boost the few cores up higher, which it does, but at a certain point it just stops scaling. It appears to stop scaling at the FIT limit for a high current workload. On an all core boost (high current workload), my CPU maxes out at 1.3V, which is the identical voltage I set to achieve a 4.5 GHz clock on CCX0 in CCD0. In the low current workload, the voltage continues to go up, but I don't see any performance gains. It is as if the voltage limit for high current workloads is still being applied despite the fact that more voltage is displayed in the measurement tools. Whether or not this is a bug, or simply a change made with the 1.0.0.3XX AGESAs is unclear, but it does seem like single core performance is being hardcapped at 1.3V just like multicore, despite the higher readings in Ryzen Master.
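For anyone who wants to check the percentage row in Figure 5, here is a minimal sketch of the arithmetic. It assumes the percentages are the manual CCX result relative to the PB/XFR result; the function name `pct_diff` and the row labels are just illustrative.

```python
def pct_diff(manual, boost):
    """Percent change of the manual CCX value relative to the PB/XFR value."""
    return (manual - boost) / boost * 100.0


# (manual, PB/XFR) pairs copied from Figure 5.
rows = {
    "CB20 single score": (509, 507),
    "single voltage (V)": (1.30, 1.48),
    "CB20 multi score": (7525, 7321),
    "multi voltage (V)": (1.30, 1.30),
}

# Round to one decimal place, matching the precision used in the table.
results = {name: round(pct_diff(m, b), 1) for name, (m, b) in rows.items()}

for name, value in results.items():
    print(f"{name}: {value:+.1f}%")
```

The same helper can be reused for any other before/after comparison, as long as the baseline is passed as the second argument.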

Now the issue with the multicore boost is a bit different. When boosting a multicore load, PB and XFR boost all cores simultaneously, which was fine for the 2000 and 1000 series when all cores were relatively the same. However, AMD has already revealed that the 3900X (maybe the 3950X) has one CCD of 6 cores that performs better than the other. Trying to push the "bad" CCD to higher clocks takes more voltage than pushing the same clocks on the "good" CCD. This causes quite a bit of the power budget to be wasted on the "Bad" CCD. It would be a better use of the available power budget to only push all cores together to say 4.0 or 4.1 GHz, and then only push CCD0. I was able to increase my Cinebench multicore score by 3% simply by doing exactly that.

For now I would recommend disabling PBO, as it appears to do nothing, and overclocking via the CCX controls in Ryzen Master. Sure, you lose the high voltage boost on lightly threaded loads, but right now that extra voltage isn't doing anything as far as I can tell.

Additional Notes: On my motherboard (Crosshair VII X470), setting the XMP profile breaks boosting. The XMP profile only sets DRAM clockspeed on the main UEFI page. When setting the clocks here, Infinity Fabric and the northbridge do not scale with RAM speed and boosting breaks. There are two other places to set RAM speed, in AMD CBS and AMD Overclocking. After setting an XMP profile, if the clock speed is set manually in the other two places, Infinity Fabric and northbridge speed scale correctly and the boosting is restored.

A couple of things from your screenshots stand out. Ryzen Master may not be detecting everything properly, considering AMD CPUs cannot clock down to what your screenshots show, so I would hold final testing and judgement in abeyance. Remember that the BIOS you're using has AGESA code which was pushed out very quickly and designed for Destiny 2 and Linux, so it is likely that it is buggy in some respects.

And to refer back to the Guru3D article posted a week ago comparing the 3800X across different motherboards, it is clear that most motherboard manufacturers still have work to do when it comes to Ryzen 3000 series performance even on X570 chipsets, not to mention previous boards, as most of them did not reach their maximum boost speeds even though the same CPU was used in each test.

Right now it says to me the "early adopter penalty" is still in full effect, just be glad it's not going to cost you over $150 the way my 1800X did...

Based on what I have observed in testing, the problem does not lie with the chips themselves. For example, I also set my fastest core directly to 4.6 GHz, and gave it the 1.48V observed when using precision boost. The result was a constant 4.6 GHz clockspeed and the expected performance uptick.

So it is clear that the processors can do 4.6 GHz, and they can even do it at the voltages supplied by precision boost. They just don't. Instead, they use all that voltage to supply a level of performance you can get with a 1.3V manual clock. So yes, something seems to be messed up with precision boost right now, and the single core boost either isn't actually going past 1.3V or it isn't able to utilize more than that. 1.3V appears to be the limit for an all-core boost, or at least it is what precision boost settles at. And it would appear that the limit is being applied to low current workloads as well, most likely inadvertently. This is probably something that will be fixed in future AGESA updates.

Meanwhile, the all core boost, I don't see that being fixed. You can get better performance boosting CCD0 preferentially vs both together, but that is something I don't see AMD fixing. The overall percentage you gain is relatively small, and the gains are only in all core workloads.

Thought this would be the best place to put this: In a survey of 722 Ryzen 3900X under a single threaded Cinebench R20 test, only 5.6% of owners had their CPUs hit their advertised boost speeds.

https://www.tomshardware.com/news/amd-ryzen-3000-not-hitting-advertised-boost-speeds-survey,40291.html

And the Guru3D article on this issue quotes a supposed ASUS employee from the overclock.net forums (below) who states it is expected behavior. This makes sense given the high voltages the boost states must use to ensure stability, and technically the "max boost clock" IS true since it can still hit that on some motherboards, but it's still another blemish on the Ryzen 3000 series on top of the "only one core can boost to max speed" blemish.

https://www.guru3d.com/news-story/ryzen-3000-amd-deliberately-limited-boost-behavior-in-favor-of-longevitysays-asus-staff.html

Well, the max boost clock IS true, because if you set the fastest core at 4.6 GHz and supply it with the identical voltage precision boost uses, it holds the boost clock all day, with the expected performance uptick.

What is strange is that it doesn't get there when using the boost, despite the identical voltage being used. So what it seems is that the boost algorithm currently overvolts considerably in low current loads, but keeps the clocks conservative. Setting overdrive in any way accomplishes nothing.

This behavior doesn't do anything to preserve the life of the chip, since the voltage is the same regardless.

The question becomes what the life of the chip is. Over the last 15 years you really haven't heard much, or anything at all, about "normal" overclocked chips (as in for normal usage and not contests) failing at a high rate. At the same time, we are now getting into extremely dense and complex chips with a lot more things in them than just CPU cores, creating many more points of failure, not to mention process nodes jumping so fast that you don't get a feel for the safe voltage range.

That's fair enough, but as I noted above, the voltage I used to hold 4.6 GHz is identical to the voltage already applied by PBO. I see the identical voltage numbers in Ryzen Master; it is just that when I set it manually and apply a single core workload, 4.6 GHz is held. When precision boost is allowed to do the boosting, the core maxes out at 4.5 or so.

Furthermore, as I indicated in the first post, the chip doesn't need 1.45V+ to hit 4.5 GHz in a single core workload, that can be done manually at 1.3V without any hit to performance. So if the algorithm is trying to protect the life of the chip, I would think applying vastly more voltage than is needed for the amount of work being done would be the first thing to go. But that seems to be what precision boost has decided to do.
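To put a rough number on "vastly more voltage than is needed", here is a back-of-the-envelope sketch. It assumes the common first-order approximation that switching power scales with V² × f, and it ignores leakage and everything else the SMU actually accounts for, so treat it as an illustration rather than a measurement. The function name is hypothetical; the voltages and the 4.5 GHz clock are the ones from my results.

```python
def dynamic_power_ratio(v_new, v_ref, f_new_ghz, f_ref_ghz):
    """Ratio of switching power under the first-order model P ~ C * V^2 * f."""
    return (v_new / v_ref) ** 2 * (f_new_ghz / f_ref_ghz)


# Same 4.5 GHz clock, 1.48 V (precision boost) versus 1.30 V (manual CCX setting).
ratio = dynamic_power_ratio(v_new=1.48, v_ref=1.30, f_new_ghz=4.5, f_ref_ghz=4.5)

print(f"Relative dynamic power at 1.48 V vs 1.30 V: {ratio:.2f}x")
```

Under that simplified model, the boosted core would be burning roughly 30% more switching power for the same clock.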

Precision boost should boost until one of the following happens: the max boost clock is hit, the thermal threshold is reached, the PPT limit is reached, the TDC or EDC VRM current limit is reached, or the voltage exceeds what the FIT settings for the silicon deem safe. In a single core boost, none of the limits appear to be met except possibly the FIT limit of the silicon. The algorithm simply won't put more than 1.5V in on low amperage, and in effect says "this is as far as I can get with the voltage I have." It also explains why PBO does nothing: there is no additional voltage to add. That would be all well and good, except it isn't true. Apply 1.48V manually during a single core workload and you can easily hit 4.6GHz and hold it. The speeds and performance PB needs 1.45V+ to achieve can be done at 1.3V manually, so something is definitely amiss here. All the explanations online of it protecting the chip seem to fall flat because the voltage is still being applied.

There are noted problems with the current AGESAs that I have posted on in other threads. For example, if I set my RAM speed via the XMP profile, precision boost breaks and hard caps all cores at 4.25GHz. I noticed that when using the XMP profile, northbridge speed and Infinity Fabric speed do not scale with RAM speed automatically like they should up to DDR4-3600. You can set them to the correct rates manually, but boosting remains locked at 4.25GHz. After a bit of investigating, it was clear that the XMP profile only set the RAM clock speed on the main UEFI page; there is also a place to set RAM timings in the AMD Overclocking section. Setting RAM clocks there, and matching them to the main UEFI page, caused the Infinity Fabric and northbridge to scale correctly, and also restored the normal boost behavior.

Who is to say there aren't more conflicting settings? Most of the RAM timing/precision boost settings show up in three places: Main UEFI, AMD Overclocking, and AMD CBS. Which has precedence over the others?
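The stopping conditions listed above can be sketched as a simple check. This is not AMD's actual algorithm, just an illustration of the logic as I understand it. The `Sample` class, the `limits_reached` function, and every number below are hypothetical placeholders, not measurements or official limits.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One set of readings, or one set of limits, in the same units."""
    clock_mhz: float
    temp_c: float
    ppt_w: float
    tdc_a: float
    edc_a: float
    voltage_v: float


# Field name -> label for the limit it corresponds to.
LIMIT_LABELS = {
    "clock_mhz": "max boost clock",
    "temp_c": "thermal threshold",
    "ppt_w": "PPT",
    "tdc_a": "TDC",
    "edc_a": "EDC",
    "voltage_v": "FIT voltage",
}


def limits_reached(sample, limits):
    """Return the labels of every limit the sample has reached or exceeded."""
    return [
        label
        for field, label in LIMIT_LABELS.items()
        if getattr(sample, field) >= getattr(limits, field)
    ]


# Placeholder limits and a placeholder single-core reading (assumed values).
limits = Sample(clock_mhz=4600, temp_c=95, ppt_w=142, tdc_a=95, edc_a=140, voltage_v=1.48)
single_core = Sample(clock_mhz=4500, temp_c=70, ppt_w=60, tdc_a=30, edc_a=45, voltage_v=1.48)

stopped_by = limits_reached(single_core, limits)
print(stopped_by)
```

With readings like these, the only limit that trips is the voltage one, which is the situation I believe I am seeing in a single core boost.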
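As a quick sanity check on what "scaling correctly" should look like, here is a small sketch. It assumes the usual DDR relationship (memory clock is half the transfer rate) and 1:1 coupling of Infinity Fabric and northbridge clocks up to DDR4-3600, as described above. The function name and the dictionary keys are hypothetical, and behavior above DDR4-3600 is deliberately left out.

```python
def expected_clocks_mhz(ddr_rate):
    """Expected memory, Infinity Fabric, and northbridge clocks in MHz for a DDR4 rate.

    Only covers the 1:1 coupled range up to DDR4-3600; returns None above that,
    since this sketch makes no claim about what happens there.
    """
    if ddr_rate > 3600:
        return None
    memory_clock = ddr_rate / 2  # DDR transfers twice per clock
    return {
        "memory": memory_clock,
        "infinity_fabric": memory_clock,
        "northbridge": memory_clock,
    }


clocks = expected_clocks_mhz(3600)
print(clocks)
```

So with the DDR4-3600 kit in my system, all three clocks should read 1800 MHz in the monitoring tools once the settings agree with each other.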

I am just imagining the rage against Intel if they pulled something similar. With AMD, it is just assumed incompetence, instead of malice.

All AMD had to do was use the word "approximately" or the phrase "up to". Or here is a thought, test the actual product with proper bios for specs, not a cherry picked CPU with a half-baked bios.

I think a better option would be to have implemented an option in BIOS to choose between "Extreme" and "Standard" boost clocks from the start, with the disclaimer that "Extreme" may reduce the life of a chip. I have a feeling in AGESA 1.0.0.4 this may be the case especially with this getting attention from the major reputable tech sites.

Based on the data I have, the best hypothesis I can come up with is that AMD implemented what seems to be a "per core power limit". Voltage in and of itself does not generate heat; heat is generated only when a current is applied to do work. It is possible that, since the core dies were shrunk to 7nm, the internal heat spreader isn't able to diffuse heat away fast enough if the amount of work being done is too high.

The precision boost algorithm is designed to increase voltage and amperage as long as those limits aren't met, but what if that creates a tiny area of concentrated heat that damages the silicon? The only way to regulate that would be a "per core power limit" as regulating voltage and amperage in the boost algorithm would also affect all core boosting.

As the voltage rises, the clock speed is lowered to keep the wattage and thus temps within the threshold. If precision boost overdrive is implemented, more wattage and amperage are sent in, meaning wattage has to be cut even further to limit heat. In effect, the precision boost algorithm keeps raising the voltage and amperage as the package temperature allows, but the core wattage limit then has to keep cutting the clock speed to keep highly localized temps in check.

We can't know for sure that something like this is going on, but it currently tracks with all the observed data. If true, then we are likely stuck with this boost behavior as there are no UEFI settings to alter these limits. Even if you delidded your CPU and improved heat transfer, the limits would still be in place.

I have the MSI X570-A PRO and it seems to be a good choice, as this board runs well with my R5 3600 and DDR4-3200.

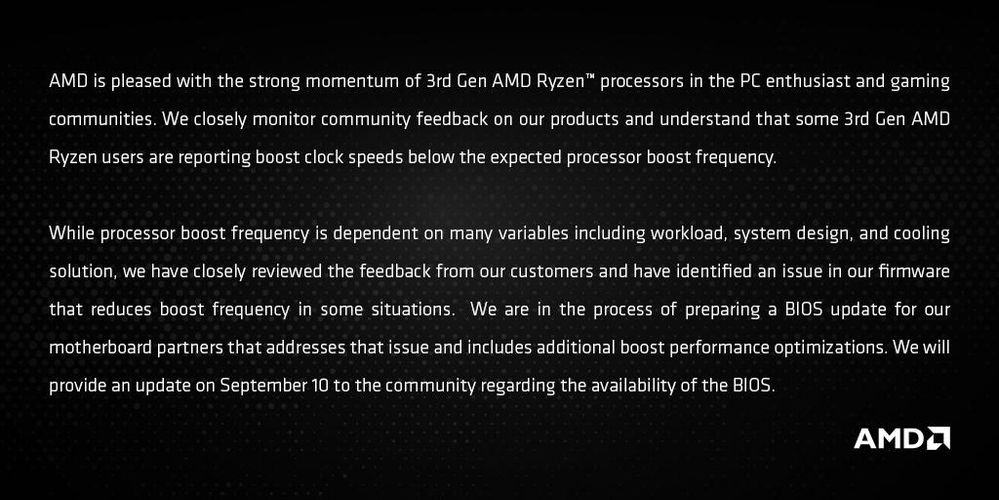

And now, from the AMD twitter feed.

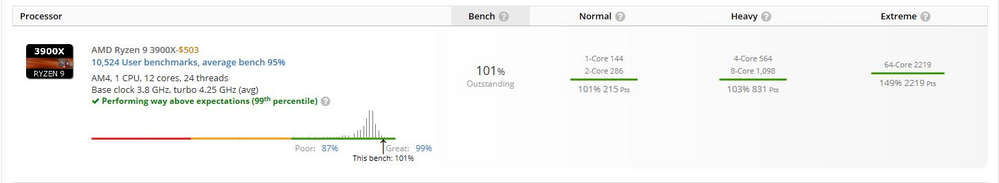

Just a quick update. I upgraded to a UEFI released on overclock.net by user "The Stilt". The UEFI was modded to include the SMU binary code for boosting that will be in the official release 1.0.0.3ABBA UEFI.

This pretty much had the effect that rhallock noted in the blog post in the gaming section of this community. I saw my boost clocks increase by about 50MHz on average. Not earth shattering, but I did see 4.6 GHz appear in a Cinebench R20 run, but it did not hold that level.

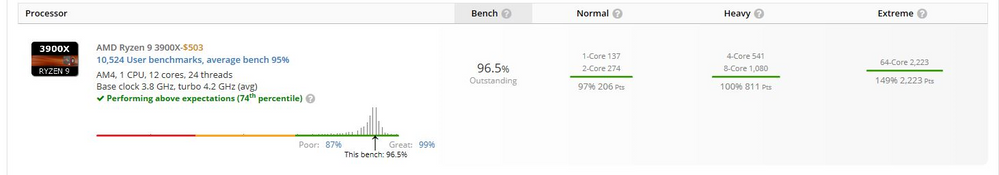

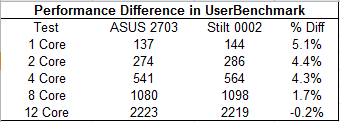

I also ran userbenchmark with the old 2703 official UEFI and the modded UEFI.

If I put the results in table form, they are pretty interesting.

The new SMU microcode has a bigger impact the fewer cores are in use. I saw a 4% or more increase in score with 4 cores down to 1. The 8-core result was below 2%, while the 12-core result was within margin of error.

So while the new boosting code does impact performance, it didn't have any impact on the all-core boost for me. All core boosting is likely limited by other factors such as current or voltage.