What is the specific meaning of the GPU_NUM_COMPUTE_RINGS environment variable?

What is the specific meaning of the GPU_NUM_COMPUTE_RINGS environment variable, and where can I find documentation on the restrictions on setting it?

The figure is from the OpenCL programming guide.

Also, what is the relationship between a compute queue and a hardware queue?

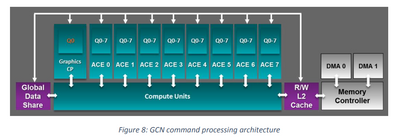

As mentioned in the document, a hardware queue can be thought of as a GPU entry point through which commands or tasks are submitted to the GPU. There are three main types of queue: compute, graphics, and copy. The graphics command processor handles graphics queues, the asynchronous compute engines (ACEs) handle compute queues, and the DMA engines handle copy queues. Each queue can dispatch work items without waiting for other tasks to complete, allowing independent command streams to be interleaved on the GPU’s Shader Engines and execute simultaneously. For more information, please refer to the "HARDWARE DESIGN" section in Asynchronous-Shaders-White-Paper-FINAL.pdf.

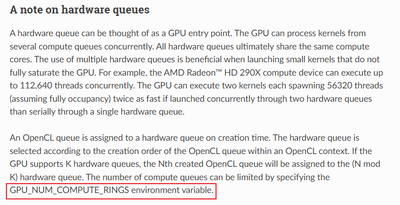

When an OpenCL queue is created, it is assigned to a hardware queue, which is usually a compute queue. The compute queue is selected according to the creation order of the OpenCL queue within an OpenCL context. The GPU_NUM_COMPUTE_RINGS environment variable can be used to limit the number of compute queues available for this selection.
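As a rough illustration of how the variable might be applied, here is a minimal Python sketch that launches a program with GPU_NUM_COMPUTE_RINGS set in its environment. The helper name `env_with_compute_rings` is hypothetical, and the child command is only a stand-in that prints the variable back. The sketch assumes the OpenCL runtime reads the variable when it initializes inside the launched process; it does not check the value, because the valid range is not stated in this thread.

```python
import os
import subprocess
import sys


def env_with_compute_rings(num_rings, base_env=None):
    """Return a copy of the environment with GPU_NUM_COMPUTE_RINGS set.

    Hypothetical helper: it only prepares the environment and does not
    validate num_rings against any driver-specific limit.
    """
    env = dict(os.environ if base_env is None else base_env)
    env["GPU_NUM_COMPUTE_RINGS"] = str(num_rings)
    return env


if __name__ == "__main__":
    # Stand-in for a real OpenCL application: the child process simply
    # echoes the variable it received.
    child = [
        sys.executable,
        "-c",
        "import os; print(os.environ['GPU_NUM_COMPUTE_RINGS'])",
    ]
    result = subprocess.run(
        child,
        env=env_with_compute_rings(2),
        capture_output=True,
        text=True,
        check=True,
    )
    print(result.stdout.strip())
```

In practice the stand-in command would be replaced with the actual OpenCL program, so that the variable is already in place before any OpenCL context is created.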

Thanks.

Thanks for your reply.

I still have a question: how are OpenCL queues assigned to the multiple ACE-managed hardware queues?

As shown in the following picture, GCN supports up to 8 ACEs per GPU, and each ACE can manage up to 8 independent queues.

As mentioned in this AMD OpenCL Programming Guide:

"The hardware queue is selected according to the creation order of the OpenCL queue within an OpenCL context. If the GPU supports K hardware queues, the Nth created OpenCL queue will be assigned to the (N mod K) hardware queue."
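The quoted rule can be illustrated with a small Python sketch. This is only a model of the (N mod K) formula, not the driver's actual code; the function names are hypothetical, and counting both OpenCL queues and hardware queues from 0 is an assumption, since the guide's quote does not say where numbering starts.

```python
def hardware_queue_index(creation_index, num_hw_queues):
    """Model of the quoted rule: the Nth OpenCL queue maps to (N mod K).

    creation_index is N (assumed to start at 0) and num_hw_queues is K.
    """
    if num_hw_queues < 1:
        raise ValueError("num_hw_queues must be at least 1")
    return creation_index % num_hw_queues


def assign_queues(num_opencl_queues, num_hw_queues):
    """Return the hardware queue index for each OpenCL queue in creation order."""
    return [
        hardware_queue_index(n, num_hw_queues)
        for n in range(num_opencl_queues)
    ]


if __name__ == "__main__":
    # Five OpenCL queues created in one context, with K = 2 hardware queues.
    for n, hw in enumerate(assign_queues(5, 2)):
        print(f"OpenCL queue {n} -> hardware queue {hw}")
```

Under these assumptions, with K = 2 the OpenCL queues 0, 2 and 4 share hardware queue 0, while queues 1 and 3 share hardware queue 1. Once more OpenCL queues are created than there are hardware queues, the assignment wraps around.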