This week at Supercomputing ’20, AMD unveiled our new AMD Instinct™ MI100 accelerator, the world’s fastest HPC GPU accelerator for scientific workloads and the first to surpass the 10 teraflops (FP64) performance barrier.

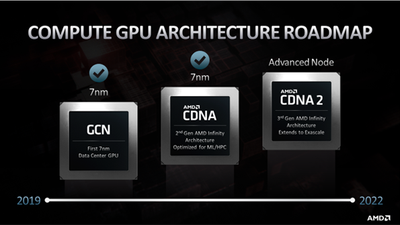

The foundation for this new data center GPU is the AMD Compute DNA (CDNA) architecture, our first compute-optimized GPU architecture. Rather than taking a one-size-fits-all approach, the new AMD CDNA architecture was designed specifically for the performance, efficiency, and feature needs of data center compute.

Here is a look at what makes our new AMD CDNA architecture shine in HPC and ML applications. For additional technical details on the new architecture, view the full AMD CDNA whitepaper.

AMD CDNA Compute Units with Matrix Core Technology

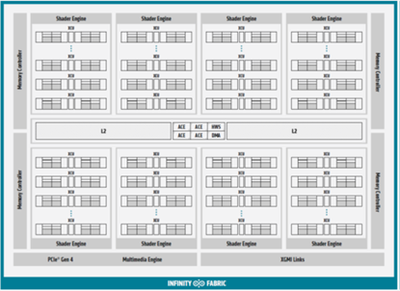

The AMD Instinct MI100 GPU features 120 enhanced compute units (CUs), each organized into four vector/matrix SIMD units. Each AMD CDNA vector/matrix SIMD unit is rearchitected and enhanced with a Matrix Unit that executes a new family of wavefront-level instructions, significantly boosting computational throughput and efficiency for key numerical formats (e.g., INT8, bFloat16, FP16, and FP32), while the primary vector units handle the remaining data types.

Unlike the gaming-oriented AMD RDNA™ family, the AMD CDNA architecture removes the fixed-function gaming logic for rasterization, tessellation, destination graphics caches, blending, and even the display engine. This frees up area and power to invest in additional compute units, boosting performance and efficiency.
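As a rough sanity check on the 10-teraflop FP64 figure, the peak vector throughput can be estimated from the CU count. This is a back-of-the-envelope sketch, not an official calculation. Only the 120 CUs come from this post. The lane count, the fused multiply-add accounting, the peak clock, and the half-rate FP64 ratio are assumptions made for illustration.

```python
# Back-of-the-envelope peak vector throughput estimate.
# Only COMPUTE_UNITS comes from the article; the rest are labeled assumptions.

COMPUTE_UNITS = 120            # from the article
LANES_PER_CU = 64              # assumption: 4 SIMD units x 16 lanes each
FLOPS_PER_LANE_PER_CYCLE = 2   # assumption: one fused multiply-add counts as 2 FLOPs
PEAK_CLOCK_HZ = 1.502e9        # assumption: peak engine clock of about 1.5 GHz
FP64_RATE = 0.5                # assumption: FP64 vector ops run at half the FP32 rate


def peak_tflops(cus, lanes, flops_per_lane, clock_hz, rate=1.0):
    """Return theoretical peak throughput in TFLOPS."""
    return cus * lanes * flops_per_lane * clock_hz * rate / 1e12


fp32_tflops = peak_tflops(COMPUTE_UNITS, LANES_PER_CU,
                          FLOPS_PER_LANE_PER_CYCLE, PEAK_CLOCK_HZ)
fp64_tflops = peak_tflops(COMPUTE_UNITS, LANES_PER_CU,
                          FLOPS_PER_LANE_PER_CYCLE, PEAK_CLOCK_HZ, FP64_RATE)

print(f"Estimated peak FP32 vector: {fp32_tflops:.1f} TFLOPS")
print(f"Estimated peak FP64 vector: {fp64_tflops:.1f} TFLOPS")
```

Under these assumptions the FP64 estimate lands above 10 TFLOPS, which is consistent with the claim at the top of this post.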

Cache and Memory

Most scientific and machine learning data sets are large, with repetitive data references that demand gigabytes or even terabytes per second of bandwidth. For the MI100, the last-level L2 cache is 16-way set-associative and comprises 32 slices in total (twice as many as in the Radeon Instinct™ MI50), for an aggregate capacity of 8MB. Each slice can sustain 128B/cycle, for an aggregate bandwidth of over 6TB/s across the GPU.
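The L2 figures above can be cross-checked with a little arithmetic. The sketch below uses the slice count, per-slice bandwidth, and capacity quoted in this post. The clock frequency is an assumption (roughly the peak engine clock), since the post does not state the frequency at which the cache runs.

```python
# Cross-check of the aggregate L2 bandwidth and per-slice capacity.

L2_SLICES = 32                  # from the article
BYTES_PER_SLICE_PER_CYCLE = 128 # from the article
L2_CAPACITY_MIB = 8             # from the article
CLOCK_HZ = 1.502e9              # assumption: cache runs at about 1.5 GHz

# Aggregate bandwidth = slices x bytes per cycle per slice x cycles per second.
l2_bandwidth_tbs = L2_SLICES * BYTES_PER_SLICE_PER_CYCLE * CLOCK_HZ / 1e12

# Capacity is split evenly across the slices.
slice_capacity_kib = L2_CAPACITY_MIB * 1024 // L2_SLICES

# "Twice as many" slices as the MI50 implies the MI50 had half this count.
mi50_slices = L2_SLICES // 2

print(f"Aggregate L2 bandwidth: {l2_bandwidth_tbs:.2f} TB/s")
print(f"Capacity per slice: {slice_capacity_kib} KiB")
print(f"Implied MI50 slice count: {mi50_slices}")
```

With the assumed clock, the aggregate comes out slightly above 6TB/s, in line with the figure quoted above.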

The AMD CDNA architecture targets the most demanding workloads, and its memory controllers and their associated interfaces are designed for maximum bandwidth, power efficiency, and extreme reliability. The memory controllers drive 4- or 8-high stacks of co-packaged HBM2 memory operating at 2.4GT/s for an aggregate theoretical throughput of 1.23TB/s, 20% faster than AMD’s prior-generation accelerator, while keeping the GPU power budget constant. The MI100’s robust hardware ECC helps protect the 32GB of HBM2 memory and enables mission-critical applications to operate at massive scale.
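The 1.23TB/s figure follows from the transfer rate and the width of the memory interface. The sketch below assumes four HBM2 stacks, each with a 1024-bit interface. Neither number is stated in this post, so treat both as assumptions. The prior-generation bandwidth is derived here from the 20% claim rather than quoted from a specification.

```python
# Theoretical HBM2 bandwidth from transfer rate and interface width.

TRANSFER_RATE_GTS = 2.4   # from the article: 2.4 GT/s
NUM_STACKS = 4            # assumption: four HBM2 stacks on the package
BITS_PER_STACK = 1024     # assumption: 1024-bit interface per HBM2 stack
SPEEDUP_VS_PRIOR = 1.20   # from the article: 20% faster than the prior generation

# Bytes moved per transfer across the whole interface.
bytes_per_transfer = NUM_STACKS * BITS_PER_STACK // 8

# GT/s x bytes per transfer = GB/s; divide by 1000 for TB/s.
hbm_bandwidth_tbs = TRANSFER_RATE_GTS * bytes_per_transfer / 1000

# Prior-generation bandwidth implied by the 20% claim (derived, not quoted).
implied_prior_tbs = hbm_bandwidth_tbs / SPEEDUP_VS_PRIOR

print(f"Interface width: {bytes_per_transfer} bytes per transfer")
print(f"Theoretical HBM2 bandwidth: {hbm_bandwidth_tbs:.4f} TB/s")
print(f"Implied prior-generation bandwidth: {implied_prior_tbs:.3f} TB/s")
```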

Communications and Scalability

While systems running small workloads may use one AMD Instinct™ MI100 accelerator, larger and more demanding tasks such as training neural networks require more powerful systems. The AMD CDNA architecture uses standards-based, high-speed AMD Infinity Fabric™ Links to connect up to four GPUs, providing greater bisection bandwidth and enabling a highly scalable system. Unlike PCIe®, the AMD Infinity Fabric technology supports coherent GPU memory, which enables multiple GPUs to share an address space and cooperate tightly on a single problem.
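To illustrate what bisection bandwidth measures, the sketch below counts how many links cross the worst-case even split of a four-GPU group. The two topologies compared (a fully connected group and a ring) are hypothetical examples chosen for illustration. This post does not specify how the four GPUs are wired, and link counts stand in for bandwidth here.

```python
# Bisection width: the minimum number of links cut by any even split of the nodes.
from itertools import combinations


def bisection_width(num_nodes, links):
    """Return the fewest links crossing any split into two equal halves."""
    half = num_nodes // 2
    best = None
    for group in combinations(range(num_nodes), half):
        side = set(group)
        # A link crosses the cut when its endpoints fall on different sides.
        crossing = sum(1 for a, b in links if (a in side) != (b in side))
        best = crossing if best is None else min(best, crossing)
    return best


NUM_GPUS = 4  # from the article: up to four GPUs per group

# Hypothetical topology 1: every GPU linked directly to every other GPU.
fully_connected = list(combinations(range(NUM_GPUS), 2))

# Hypothetical topology 2: each GPU linked only to its two neighbors.
ring = [(0, 1), (1, 2), (2, 3), (3, 0)]

full_width = bisection_width(NUM_GPUS, fully_connected)
ring_width = bisection_width(NUM_GPUS, ring)

print(f"Fully connected: {len(fully_connected)} links, bisection width {full_width}")
print(f"Ring: {len(ring)} links, bisection width {ring_width}")
```

In this toy comparison, the fully connected group has twice the bisection width of the ring, which is the kind of gain that direct GPU-to-GPU links are meant to deliver.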

Real World Results

The AMD CDNA architecture offers amazing compute capabilities, and when combined with AMD’s open source ROCm™ software stack and ecosystem, delivers a compelling resource for some of the most important scientific and machine learning applications.

During testing for the upcoming exascale Frontier system, Oak Ridge National Laboratory ran its science codes on the MI100. Some of the results ranged from 1.4x to 3x faster than a competitor node. In the case of CHOLLA, an astrophysics application, the code was ported to AMD ROCm in just one afternoon and delivered 1.4x the performance.

My thanks to all our talented AMD engineers who worked on this new architecture and the new MI100 accelerator. We are proud of this first-generation AMD CDNA architecture and the value it can bring to businesses and researchers. And we’re already working on the next-generation AMD CDNA 2 architecture as we move forward on the path to exascale.