Multi monitor borderless window gaming question

I've been a PC gamer since the turn of the millennium, and a couple of years ago I moved to a multi-monitor setup: one 21:9 and one 16:9. Once you go ultrawide for games, there's truly no going back. I do, however, wonder about my hardware choices through the years. I've been using Ryzen for 2 years now, but Nvidia was always my go-to for GPUs. I've always chosen the GTX/RTX x80 series since I went multi-monitor. Lately I've been asking myself: is it necessary?

All of my monitors are 1080p. I only have enough horizontal space to fit, at most, a 29" ultrawide and a 24" monitor. When I experimented with a triple 22" 1080p setup, I noticed that my old 980 was already idling at 50% utilization, so I surmise that more pixels need more power. I play all sorts of games, mostly building simulations, but because I have a habit of doing many things at the same time, I usually play on my main ultrawide and watch streaming videos on the other monitor. For that use case, I usually set my game to borderless window for uninterrupted switching. Oh, by the way, I lock my frame rate at 60 FPS whenever possible.

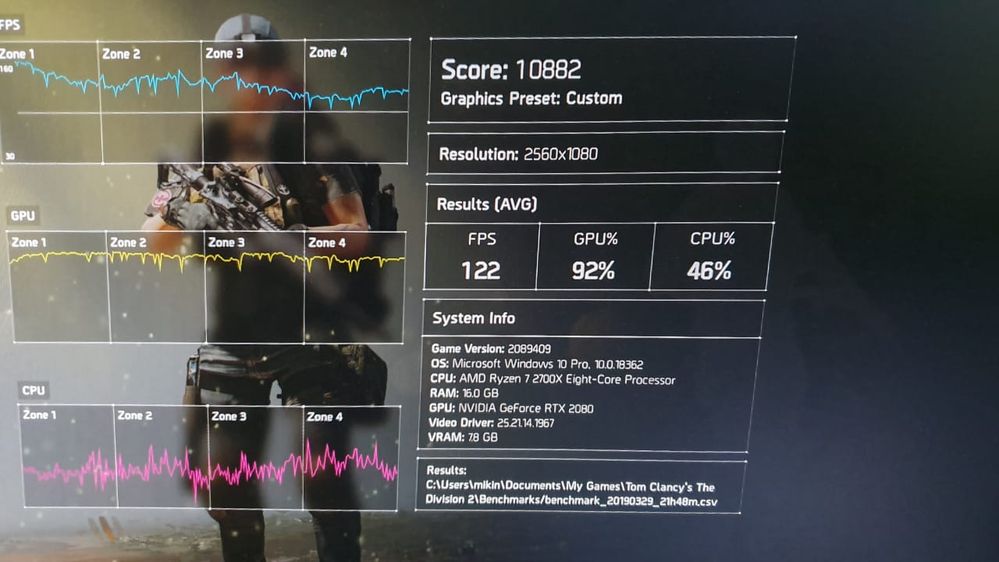

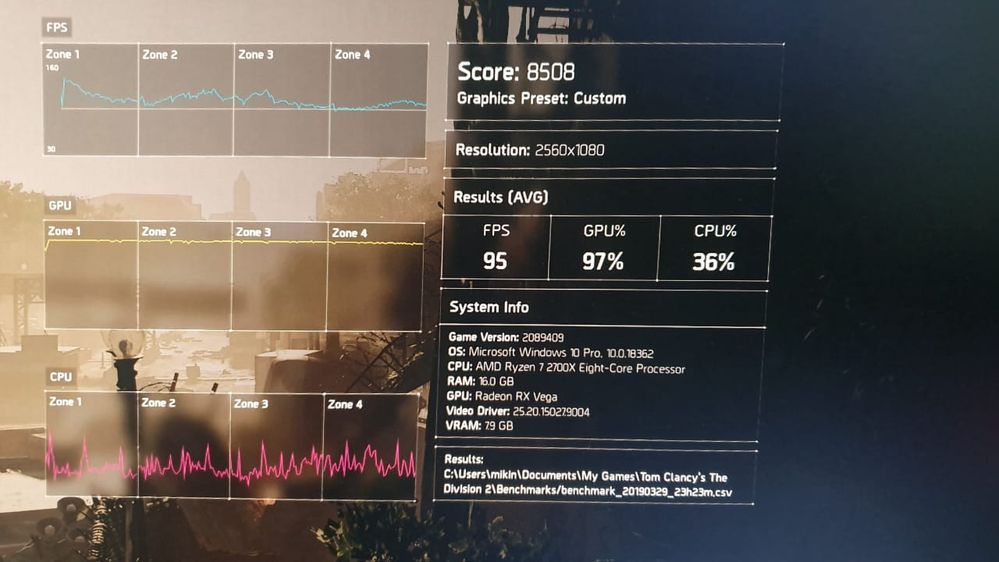

Do I really need an RTX 2080 to drive my setup? I do play AAA games as well. I'm currently playing The Division 2 and Anthem, sometimes with YouTube running on the second monitor when I'm grinding for loot. So I'm curious whether even a Vega 56 would fit my use case, since that GPU is now readily available new and second hand (ex-mining) at a good price. I don't like Nvidia's pricing direction of late, and I've wanted to switch to an all-AMD rig for quite some time now.

Hi,

If you are simply gaming on one 1080p monitor and just watching videos on a second screen, then a low-end GPU like an RX 580 8GB or GTX 1060 6GB should be fine for 1080p gaming. If you use Nvidia Dynamic Super Resolution (DSR) to render a game at a higher resolution (2K/4K) to get higher quality graphics on your 1080p screen, then that is a different story. The AMD equivalent to DSR is called Virtual Super Resolution (VSR). In addition, you might want to look at Nvidia's multi-monitor equivalent to AMD Eyefinity (Nvidia Surround). Since you have mismatched monitor sizes, that is unlikely to suit your setup.

I have a triple matched 1080p monitor setup on one of my PCs. I used AMD Eyefinity to turn the three monitors into one big screen. Nvidia GPUs have a similar capability. I originally drove it with a single Sapphire HD7970 OC 6GB. It is a great experience provided the GPU is powerful enough to drive (3 x 1920) x 1080 pixels = 5760x1080 resolution = 6220800 pixels at the graphics options you want to set. Since each individual screen is only 1080p, you will likely want to push the antialiasing settings up to high or maximum, and that will increase the memory requirement.

Whilst the Sapphire HD7970 OC 6GB had a large amount of VRAM, the GPU itself was simply not powerful enough to drive some AAA games at more than 20-30 FPS.

In the end I purchased a low-cost R9 280X 3GB to try out CrossFire.

I could not get a 6GB version, and since the Sapphire HD7970 OC 6GB is a 2.5-slot card, it would not fit in the second PCIe slot in my case. As usual with AMD GPUs, I was unable to buy another one anywhere anyhow.

With that HD7970 OC 6GB / R9 280X 3GB pairing I was able to improve FPS by up to 1.8x in some AAA games, but the mismatched 6GB/3GB VRAM was an issue, as the total effective VRAM with DX11 CrossFire is the lower of the two GPUs: 3GB in this case.

There is not much gaming benchmark information available for multi-monitor setups, but one thing I used to do was compare the total number of pixels in the multi-monitor setup to benchmark data for the following cases:

Assuming you are running (3 x 1920) x 1080 pixels = 5760x1080 resolution = 6220800 pixels

Then for comparison, 4K resolution = 3840 x 2160 = 8294400 pixels = 1.33 times as many pixels to drive.

2K resolution = 2560 x 1440 = 3686400 pixels = 0.59 times as many pixels to drive.

1080p is obviously 1/3 the number of pixels to drive.
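The comparison above can be reproduced with a few lines of Python. This is only a rough sketch: it assumes GPU load scales with pixel count, which is a first-order guide rather than a benchmark. The 2560x1080 ultrawide entry is an assumption about the original poster's 21:9 1080p panel and is not stated in the thread.

```python
# Pixel counts relative to a triple 1080p (5760x1080) setup.
# The ultrawide entry is an assumed resolution for a 21:9 1080p panel.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "ultrawide 1080p (assumed 2560x1080)": (2560, 1080),
    "2K": (2560, 1440),
    "triple 1080p": (5760, 1080),
    "4K": (3840, 2160),
}


def pixel_count(width, height):
    """Total number of pixels in one frame."""
    return width * height


def ratio_to_reference(name, reference="triple 1080p"):
    """Pixel count of `name` divided by the pixel count of `reference`."""
    return pixel_count(*RESOLUTIONS[name]) / pixel_count(*RESOLUTIONS[reference])


for name, (w, h) in RESOLUTIONS.items():
    print(f"{name}: {pixel_count(w, h):,} pixels, "
          f"{ratio_to_reference(name):.2f}x the triple 1080p setup")
```

Benchmarks published for 4K or 2K can then be scaled loosely by these ratios to guess at multi-monitor performance, keeping in mind that real frame rates rarely scale perfectly with pixel count.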

Bye.

Thanks a bunch for the thorough explanation. I actually went out and bought a second-hand triple-fan PowerColor Vega 56. The seller claims it was used only for gaming, so it will probably fare better than the ex-mining ones, but oh well, the warranty has already expired anyway.

This being my first ever Radeon graphics card (well, my first ATi graphics card, even), the experience out of the door is just crazy. Color calibration for my second monitor is out of whack, and being new to Radeon Overlay and Radeon Settings, everything suddenly felt daunting. I never used any form of overlay on my Nvidia setup and never installed GeForce Experience, so I'm kind of confused by the many options.

Testing the Vega 56 on my dual-monitor setup gives me both pleasure and frustration. First things first, the Vega 56 scores 20% less than my Zotac RTX 2080, for 1/3 the price! That's crazy good value! BUT... that coil whine... it really hurts my ears. I had to ask for tips and tricks from my best friends, who are AMD nuts, and they told me to tinker with FRTC and Chill. I got the coil whine under control by setting FRTC to 60 FPS (matching the monitor refresh rate) and activating Chill. Turns out I did spend too much on my home/office/gaming PC... All of my games work fine, delivering a solid 60 FPS all around.

I don't mind the fan noise much, but seriously, I could swear my old 1080 Ti FE was much quieter than the PowerColor Vega 56 during gaming sessions.

All in all a good purchase, and finally, for the first time in two decades of PC ownership, I have a complete all-AMD build, and it's not so bad. Now I'm interested in Navi 10 (provided the coil whine and fan noise are addressed).

Hi,

Using FRTC I can understand.

Using Radeon Chill, no. I would not use it. If you want to know why, I can point you to many posts about it.

Try out Virtual Super Resolution. Turn it on, then go to Windows Display Settings, and you should be able to set a bunch of new, higher resolutions for your display.

You should be able to render games at 2K on the Radeon Vega 56 and then they will be downsampled to 1080p on your monitor screen.

The image quality is better and you can usually drop your Antialiasing Settings.
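As a rough illustration of what VSR costs, the sketch below compares the number of pixels rendered at a virtual resolution with the native 1080p panel. It assumes render load grows roughly in proportion to pixel count, which is a simplification; the function name `vsr_pixel_factor` is just an illustrative helper, not part of any AMD tool.

```python
def vsr_pixel_factor(native, virtual):
    """How many times more pixels are rendered at the virtual resolution."""
    native_w, native_h = native
    virtual_w, virtual_h = virtual
    return (virtual_w * virtual_h) / (native_w * native_h)


NATIVE_1080P = (1920, 1080)

# Rendering at 2K and downsampling to a 1080p panel.
print(f"2K on 1080p: {vsr_pixel_factor(NATIVE_1080P, (2560, 1440)):.2f}x the pixels")

# Rendering at 4K and downsampling to a 1080p panel.
print(f"4K on 1080p: {vsr_pixel_factor(NATIVE_1080P, (3840, 2160)):.2f}x the pixels")
```

So 2K via VSR means rendering a little under twice the pixels of native 1080p, which is part of why lowering antialiasing can help win back some of the cost.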

If you want to increase the FPS performance on the Vega 56 GPU, first increase the power slider to the max.

Then increase the memory clock as high as you can using Wattman.

Then increase the GPU clock.

There should be a dual BIOS switch on the side of the card, just below the back plate near the display outputs. One position is a low-power setting and the other is a performance setting.

If the GPU is too loud, you may want to try putting it in low-power BIOS mode and just running it in the balanced profile.

As for fan noise the only thing I can suggest is check out AMD Settings values and Wattman Settings.

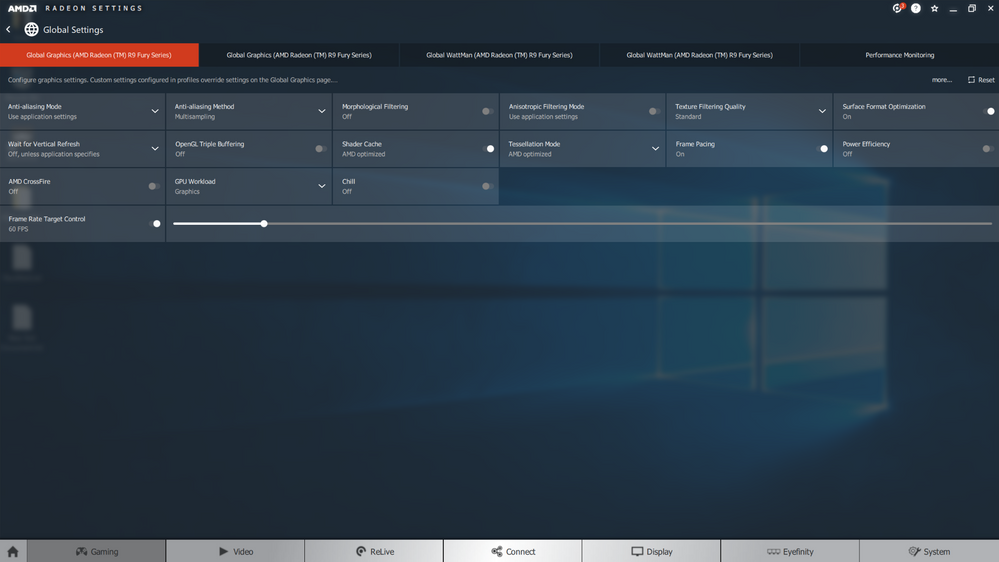

Feel free to post a scren shot such as this:

If you need some advice on setting up Wattman.

The above are for an R9 Fury X. I have an RX Vega 64 Liquid but that is .. not in use at the moment.

But I know how to set it up if you need help.

Good luck with your new GPU.

Bye.

I have specific quirks though: I don't like wasting power and even prefer undervolting. My main target is whatever lowers power usage while still maintaining 60 FPS. So overclocking is a big no, even though I appreciate the allure of it. I'm also okay without VSR; I never used the equivalent technique on my RTX 2080 anyway (going back to the low-power target notion).

My only issue with the card is the coil whine, which is quite awful, especially with unlocked FPS. Again, setting FRTC to 60 FPS makes the coil whine somewhat manageable.

I'll try disabling Chill; I do encounter some frame hitching with Chill on when playing The Division 2. Thanks for the suggestion.

P.S. I just realized that I can change the fan curve in the global Wattman settings, lol, what a noob.

Yes, you can change the fan speed settings in Wattman, or if that isn't good enough for your particular GPU, you can resort to a third-party tool like ASUS GPUTweak II, which you can set up just to control your fan speed using a custom fan curve. Third-party overclocking tools are usually not recommended for use alongside AMD Radeon Settings, but in this case it might be worth a try.

Make sure you set these buttons, otherwise GPUTweak II can override your Wattman settings at launch: