AMD Community > PC Graphics


RX 6900 XT not reaching 100% usage in any game, only about 70%

My GPU usage is about 70%, but I want to get more FPS with 100% usage. How can I fix this? Can somebody please help me?

My system:

GPU: RX 6900 XT, average in-game temperature 70 °C (fans at 2000 RPM)

CPU: i9-9900KF, average in-game temperature 60 °C

Motherboard: ASUS TUF Z390 Gaming Plus WIFI

2 TB NVMe SSD (all games installed)

480 GB SSD (for Windows)

RAM: 2 × 16 GB, 3000 MHz, CL16

Windows 10 64-bit

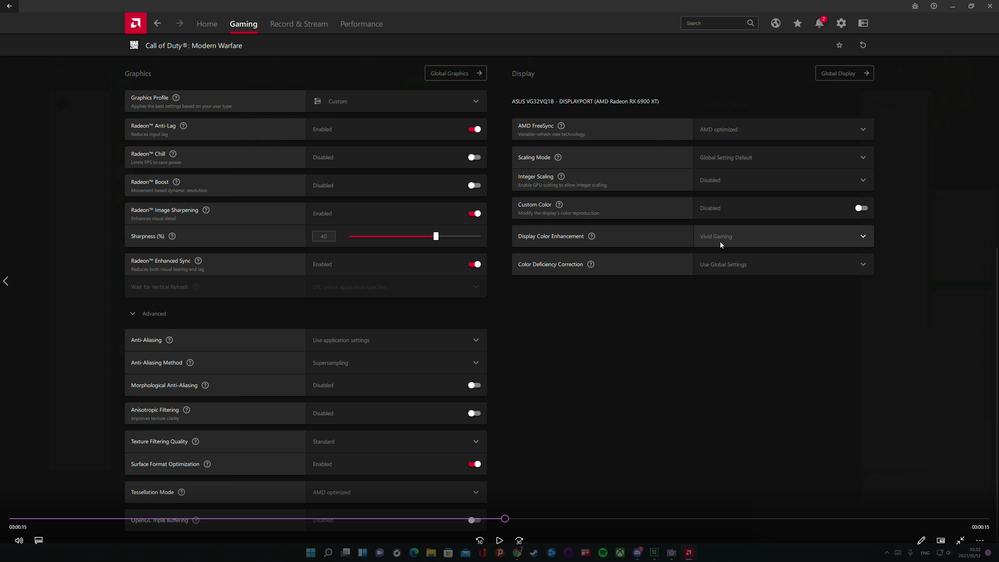

PSU: 800 W

Radeon Software Adrenalin 21.9.2

Monitor: 2K (2560x1440), 165 Hz, connected via DisplayPort


That's normal. Your bottleneck is simply somewhere else; the GPU won't run at 100% all the time.


Thanks for the reply. I have one more question: is the bottleneck PCIe Gen 3, or something else?


No. Nvidia hasn't really pulled the rug out from under RTX 3090 owners here at all. It's simply filling the gap between the RTX 3090 and the RTX 3080.


In games using DirectX 12 and Vulkan there will be no problems. In games on DirectX 11 and older, the video card will be underloaded, but not everywhere; it depends on the complexity of the scene. Take Immortals Fenyx Rising: in the open world, the load on RX 6000 or RTX 3000 cards sits around 70-80% even at 2K, but as soon as you go down into a dungeon, where there are few small details and textures (polygons), the cards run at full load, because they are no longer limited by the DirectX 11 API itself and by the CPU. The same thing happens in Assassin's Creed Odyssey (and Origins), but not in Assassin's Creed Valhalla, since it uses DirectX 12 (which can draw very, very many polygons and textures). This is also evident in all Far Cry games except Far Cry 6, which uses DirectX 12 if I'm not mistaken.

Also, AMD's software, namely Adrenalin, does not read the load on the video card correctly; MSI Afterburner does (but you need to enable unified GPU usage monitoring in its settings beforehand).
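To make the CPU-bound vs. GPU-bound point concrete, here is a minimal Python sketch. It assumes the CPU and GPU work on different frames in parallel, so the slower side sets the frame time and the GPU sits idle for the rest; the millisecond costs and the helper name `frame_stats` are hypothetical, not measurements from any of the games mentioned.

```python
def frame_stats(cpu_ms: float, gpu_ms: float) -> tuple[float, float]:
    """Return (fps, gpu_utilization_percent) for per-frame CPU and GPU costs.

    Assumption: CPU and GPU work are pipelined, so the slower of the two
    determines the frame time and the faster one waits for the rest.
    """
    frame_ms = max(cpu_ms, gpu_ms)        # the slower side dictates the frame time
    fps = 1000.0 / frame_ms
    gpu_util = 100.0 * gpu_ms / frame_ms  # share of each frame the GPU is busy
    return fps, gpu_util


# Hypothetical open-world scene: a DX11-style submission thread needs 10 ms of
# CPU time per frame while the GPU only needs 7 ms, so the game is CPU-bound.
print(frame_stats(cpu_ms=10.0, gpu_ms=7.0))  # (100.0, 70.0)

# Hypothetical dungeon scene with fewer draw calls: CPU cost drops to 5 ms,
# so the GPU becomes the limit and runs at 100%.
print(frame_stats(cpu_ms=5.0, gpu_ms=7.0))
```

In this toy model, a lower GPU usage reading does not mean the card is broken; it means the GPU finishes its share of each frame early and waits for the CPU side.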


I agree with your response; it is likely that the OP is experiencing this in a DirectX 11 or OpenGL game. But from my experience, this issue is more pronounced on Radeon GPUs than on Nvidia GPUs in DirectX 11, half of the time by drastic margins.

AMD Radeon does benefit more than Nvidia in games that are built on DirectX 12 from scratch, but games that wrap DirectX 12 over a DirectX 11 design (Deus Ex: Mankind Divided, Total War: Warhammer, ~Serious Sam 4) perform even worse on Radeon than their DX11 counterparts.

I test this with both an RX 480 and a GTX 1060.

Edit: As far as my reading on this over the years goes, it is apparently due to AMD not supporting command lists in DirectX 11, whereas Nvidia does, although from what I remember AMD does support context switching in DirectX 11. The gist of it is that DirectX 11 is, for the most part, single-threaded on Radeon drivers, whereas Nvidia does very good multi-threading of the DirectX 11 runtime/driver by allowing other CPU cores to build draw calls and link them to the main submitting CPU thread. Radeon drivers, on the other hand, have one CPU core building the draw calls and the same core submitting them to the GPU.
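The threading difference described above can be illustrated with a rough cost model. This is a sketch under stated assumptions, not how either vendor's driver actually works: `cpu_frame_ms` and the per-draw-call costs are hypothetical, building is assumed to split evenly across worker threads (like recording command lists), submission stays on one thread, and synchronization overhead is ignored.

```python
import math


def cpu_frame_ms(draw_calls: int, build_us: float, submit_us: float,
                 build_threads: int = 1) -> float:
    """Estimate CPU time per frame (ms) for building and submitting draw calls.

    Hypothetical cost model, for illustration only:
    - building each draw call costs `build_us` microseconds and is split evenly
      across `build_threads` cores (like recording command lists on workers);
    - submitting each draw call costs `submit_us` microseconds on one main thread;
    - synchronization overhead between threads is ignored.
    """
    if build_threads < 1:
        raise ValueError("build_threads must be at least 1")
    calls_per_thread = math.ceil(draw_calls / build_threads)
    build_ms = calls_per_thread * build_us / 1000.0
    submit_ms = draw_calls * submit_us / 1000.0
    return build_ms + submit_ms


# One core builds and submits everything (single-threaded driver path)
single = cpu_frame_ms(5000, build_us=1.5, submit_us=0.5)
# Four cores build in parallel, one thread still submits
multi = cpu_frame_ms(5000, build_us=1.5, submit_us=0.5, build_threads=4)
print(f"single-threaded: {single} ms/frame -> CPU-side cap {1000 / single:.0f} FPS")
print(f"4 build threads: {multi} ms/frame -> CPU-side cap {1000 / multi:.0f} FPS")
```

With these made-up numbers, spreading the build work over four threads cuts the CPU time per frame from 10 ms to 4.375 ms, raising the CPU-side FPS ceiling from 100 to about 229 in this toy model.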

Kind regards


Yes, that's right, and I think Nvidia helps with this through their CUDA cores plus the manufacturer's driver optimization.


Thank you, but isn't Warzone DX12?! I have this problem in Warzone. Of course, let's not forget that Warzone gets 40 GB updates that bring 40 new bugs to the game 😄. But in Humankind, which uses Vulkan, and Control, which uses DX12, I get 100% usage.


I know this is frustrating, but it is not a real problem as long as you are not dropping below 60 FPS (this does happen to me in Unreal Engine 3 DirectX 9 games, which AMD has broken in every driver after 17.7.1: I get 20-30 FPS with 10% GPU utilization where I used to get 80 FPS, and they have not fixed it for 5 years despite hundreds of reports). But I am guessing you always get above 80 FPS with that configuration.

Furthermore, 165 Hz is already very smooth and responsive, even with VSync enabled. If I had that system, I would set Chill Min = 100 FPS and Chill Max = 100 or 120 FPS, set Frame Rate Target Control to 100 or 120 FPS, and enable Enhanced Sync for the game.

With frame rates higher than 80 FPS, you are better off improving input response than aiming for higher FPS (you do not always need higher FPS to get better input response), which is also why AMD developed Radeon Anti-Lag. So, as an alternative to the above, you can set FRTC = 120, enable Anti-Lag and enable Enhanced Sync. You should then get very good input response as well.
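For reference, each frame-rate setting corresponds to a fixed time budget per frame (1000 ms divided by the FPS). This small Python sketch just does that arithmetic for the values suggested above; the `frame_budget_ms` helper is hypothetical and not part of Radeon Software.

```python
def frame_budget_ms(fps: float) -> float:
    """Milliseconds available to produce one frame at the given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Values suggested in the post, plus the monitor's maximum refresh rate
settings = {
    "Chill Min": 100,
    "Chill Max / FRTC": 120,
    "165 Hz refresh": 165,
}
for name, fps in settings.items():
    print(f"{name:>16}: {fps:>3} FPS = {frame_budget_ms(fps):5.2f} ms per frame")
```

A cap of 120 FPS gives each frame about 8.33 ms instead of the roughly 6.06 ms a full 165 Hz would demand, which leaves headroom for frame times to stay consistent.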

@Geforsikus_2021 Yes, CUDA cores are just the name Nvidia gives to the cores on its GPUs, just as AMD calls the cores on Radeon GPUs stream processors. Furthermore, AMD also adopted hardware-accelerated physics with its stream processors years ago, but only some games have used it (for example, TressFX in Tomb Raider and Deus Ex is AMD's equivalent of "HairWorks", and I think Deus Ex: Mankind Divided also does some cloth physics on the GPU). Apparently, Radeon supported its own kind of PhysX through OpenCL, but unfortunately game developers did not use it:

I just wish AMD would improve older DirectX 11 performance and, after 5 years, fix the Unreal Engine 3 DirectX 9 bug they reintroduced.

Kind regards


Is it OK?


Hi @MacArthur

Yes. Then, in Global Graphics, look for Frame Rate Target Control and set it to, for example, 120 FPS to keep the frame rate consistent, which will result in fewer jumps in frame rate. Hope this helps; you can also try the Chill method I described if this is not smooth enough.

Edit: I wrote all of this with the assumption that you have a FreeSync monitor.

Kind regards


Yes, my monitor has FreeSync.

Best regards 🙏


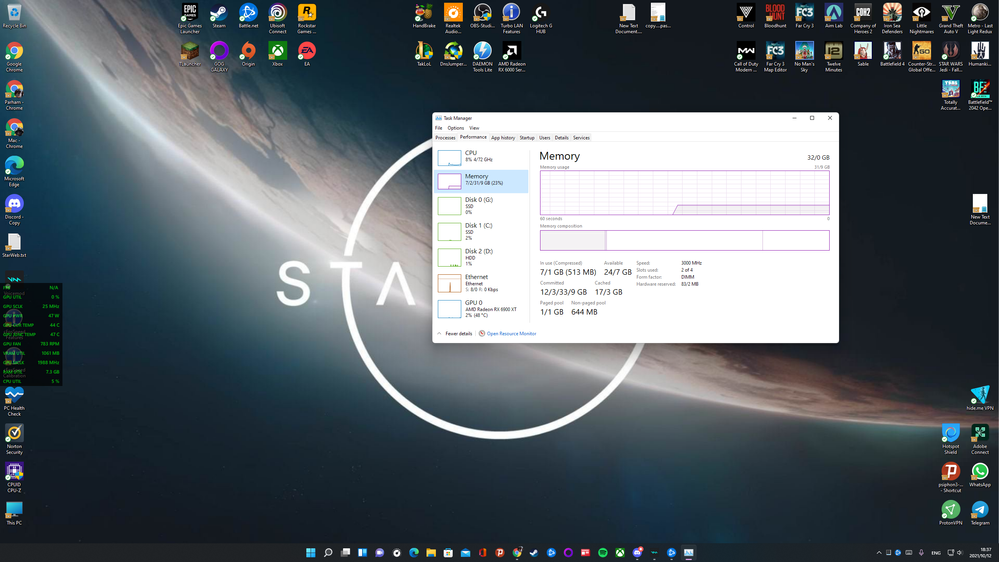

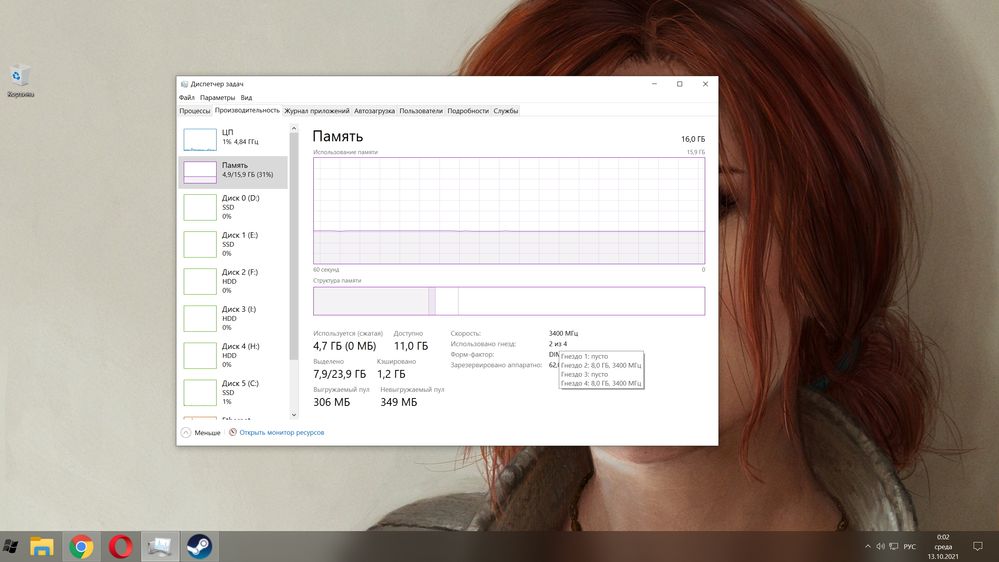

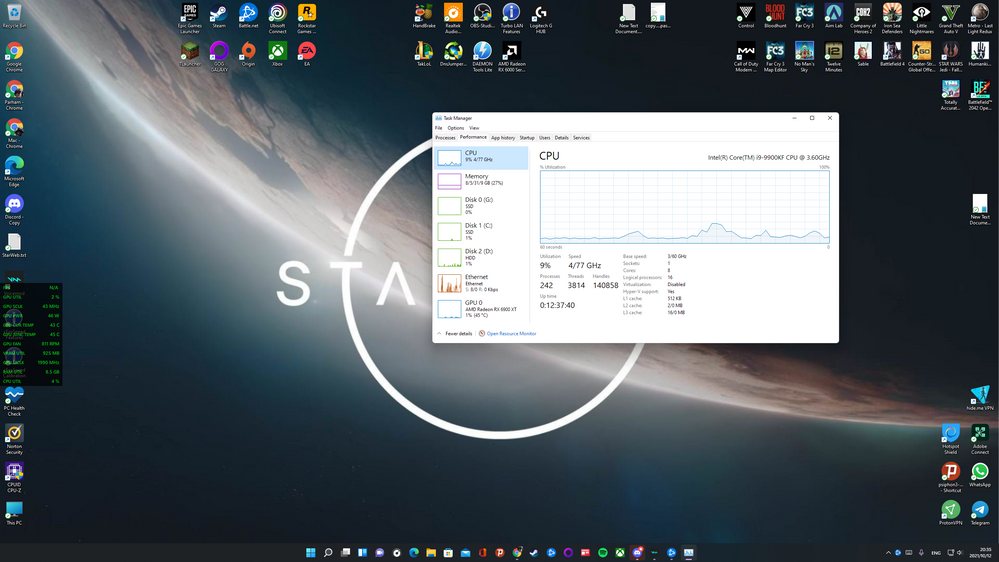

How do you display the monitoring in the game: Adrenalin or MSI Afterburner? I'm watching a video at 2K with an i9-10900K and an RX 6900 XT, and in Warzone the card is loaded to 90% ± 5%. I think an i9-9900K will behave the same way, certainly not worse. Outdoors you should get 160+ FPS, and indoors up to 180-190 FPS, at 2K on ultra settings. If the FPS is lower, there is a problem somewhere. Do you have the RAM installed in the 2nd and 4th slots of the motherboard? Open Task Manager (Performance → Memory) and check how many slots are used (hover the mouse over it; for you it should be slots 2 and 4). If not, the memory is running in single-channel mode. As for the settings in AMD Adrenalin, I only turned on AMD FreeSync there and limited the frame rate to 115 (my monitor is 120 Hz); I didn't touch the rest of the settings.


Using MSI Afterburner and AMD Adrenalin and ....


Have you checked the GPU power draw while you are getting bad performance? Mine would just sit at 60 W and perform poorly.


Around 200 W (± 20 W)

Max at 100% usage: 255 W


You also have Windows 11. You shouldn't have upgraded, considering that there are quite a lot of performance problems in games. In Task Manager, hover the mouse over "Slots used: 2 of 4" (as in the screenshot) and a pop-up will show which slots are currently in use. You should see slot 2: 16 GB, 3000 MHz and slot 4: 16 GB, 3000 MHz; if so, that's not the problem.

Is the problem only in Warzone? Try disabling all options in AMD Adrenalin except FreeSync and a 160 FPS limit, since you have a 165 Hz monitor and FreeSync only works within a certain range, for example 30-165 Hz in your case. (You can leave the frame rate unlimited, but then there will be tearing when the FPS goes above 165.)

I haven't played Warzone myself, but I can install it out of interest; it will be installed by morning, and I'll post a screenshot of my FPS (I have an i9-9900K, 16 GB of RAM, an RX 6900 XT and Windows 10 LTSC). I will switch to Windows 11 when the performance problems in games are fixed.

Also check whether Virtualization-based Security is turned off; it is enabled by default. (To check, type "Core isolation" in the search, open the security settings, turn off Memory integrity and restart the PC.) Then try playing Warzone again.
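The capping logic in this post can be sketched as follows, assuming the 30-165 Hz example range mentioned and a margin of a few FPS below the maximum refresh rate (160 on a 165 Hz panel, 115 on a 120 Hz panel in this thread). The margin is a community rule of thumb, not an AMD-documented value, and both helper names are hypothetical.

```python
def suggest_fps_cap(vrr_min_hz: int, vrr_max_hz: int, margin_fps: int = 5) -> int:
    """Pick a frame-rate cap a few FPS below the top of the FreeSync range.

    Assumption: the margin is a rule of thumb from this thread (160 on a
    165 Hz panel, 115 on a 120 Hz panel), not an AMD-documented value.
    """
    cap = vrr_max_hz - margin_fps
    if cap < vrr_min_hz:
        raise ValueError("margin pushes the cap below the FreeSync range")
    return cap


def in_vrr_range(fps: float, vrr_min_hz: int, vrr_max_hz: int) -> bool:
    """True if the frame rate lies inside the variable-refresh window."""
    return vrr_min_hz <= fps <= vrr_max_hz


cap = suggest_fps_cap(30, 165)          # example 30-165 Hz range from the post
print(cap, in_vrr_range(cap, 30, 165))  # 160 True
print(in_vrr_range(180, 30, 165))       # False: outside the range, where the post warns of tearing
```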


Yes, the RAM modules are in slots 2 and 4.


Yesterday I installed Warzone. It crashes constantly with various errors, although everything is fine in the game menu. Here are some screenshots I managed to take (ultra settings without ray tracing, 2K). The video card is loaded to the brim at 90+%. Which Ubisoft games do you have? Is Assassin's Creed Valhalla installed? How does it behave? I don't have enough online games to compare with yours; I have Diablo II: Resurrected, but I'm more into single-player games.


Hi bro, you're playing the old map in Warzone, and with FOV 80; my FOV is 120.

And in Valhalla I already had around 90% usage and an average of 110 FPS at ultra, 2K.

And what's your FPS at 4K?


I did not try running Warzone at 4K, because it constantly crashed during the jump from the plane, sometimes after I had already landed, so I deleted it; in training mode the game did not crash. My monitor is a 27-inch 2K one, and I don't see the point of torturing the card at 4K, since I want it to deliver 100+ FPS. If I had a 4K 60 Hz monitor, then it would make sense to play at 4K.

Smart Access Memory is disabled. I turned it on about 2-3 months ago and then turned it off again (few games make good use of it, except Assassin's Creed Valhalla, Warzone and Forza 4). To make it work at all, you need to update the BIOS, and the update patches all the vulnerabilities, which lowers processor performance, so I went back to the old BIOS and disabled Smart Access Memory.

If you have no problems in other games, it may be that the settings in AMD Adrenalin need to be reset and then turned back on one at a time while checking the load on the video card. It is also possible that the monitoring program is simply wrong. I turned on unified GPU usage monitoring in the MSI Afterburner settings; before that, it was wrong and showed me a fluctuating GPU load (30%, then 50%, then 70%). Now everything is stable.

I don't play Warzone; I only installed it out of interest to check how it runs on my system, tried it and deleted it because, alas, it crashes constantly with different errors.


Welcome to the bug zone, if you survive...

Thanks for all the replies. I hope my problem gets fixed soon 🙏


Hi @MacArthur

A higher field of view will tax your CPU and GPU more: more of the scene is visible, so there are more polygons to render and the CPU has more draw calls to submit. Have you tried checking whether there is a difference in performance with the default FOV?
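As a back-of-the-envelope illustration of why FOV 120 costs more than FOV 80, here is a toy Python estimate. It assumes objects are spread evenly in every direction around the player, so the visible share is simply FOV / 360 degrees, and it assumes the slider value is a horizontal angle, which may not be how every game defines FOV. Real games cull and batch objects very differently, and `visible_objects` is a hypothetical helper.

```python
def visible_objects(total_objects: int, fov_deg: float) -> int:
    """Rough number of objects inside a horizontal field of view.

    Toy assumption: objects are spread evenly in every direction around the
    player, so the visible share is simply fov / 360 degrees. Real engines
    cull, batch and level-of-detail objects in far more complex ways.
    """
    if not 0 < fov_deg <= 360:
        raise ValueError("fov_deg must be in (0, 360]")
    return round(total_objects * fov_deg / 360.0)


# Hypothetical map with 3600 drawable objects around the player
default_fov = visible_objects(3600, 80)
wide_fov = visible_objects(3600, 120)
print(default_fov, wide_fov, wide_fov / default_fov)  # 800 1200 1.5
```

Under these assumptions, going from FOV 80 to FOV 120 means about 1.5 times as many objects, and therefore draw calls, per frame.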

Furthermore, AMD does not seem to keep our uploaded videos anymore. The best alternative is to link your YouTube videos in your post using the video attachment option and then selecting "From the Web".

Kind regards


Can I ask why it matters that your GPU utilisation drops below 99% in a CPU-heavy game like Warzone, with lots of other players and units on one map? It's perfectly normal in a game like this, particularly as you decrease resolution and image quality settings.

Your CPU, the game engine and the API used by the game can only deliver so many FPS, depending on the settings you use. As you lower the resolution and image quality settings, the strain on those components increases and the strain on the graphics card is reduced.

As you increase the resolution, the reverse is seen. This is expected and perfectly normal.
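The resolution effect described here can be modelled with a simple sketch. It assumes the CPU cost per frame stays the same at every resolution while the GPU cost grows linearly with pixel count (4K has 2.25 times the pixels of 1440p); both are simplifications, and the millisecond figures and `gpu_util_at` are hypothetical.

```python
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def gpu_util_at(resolution: str, cpu_ms: float, gpu_ms_at_1440p: float) -> float:
    """Estimate GPU utilisation (%) when GPU cost scales with pixel count.

    Assumptions: the CPU cost per frame does not depend on resolution, and the
    GPU cost scales linearly with the number of pixels (a simplification that
    ignores resolution-independent GPU work).
    """
    w, h = RESOLUTIONS[resolution]
    ref_w, ref_h = RESOLUTIONS["1440p"]
    gpu_ms = gpu_ms_at_1440p * (w * h) / (ref_w * ref_h)
    frame_ms = max(cpu_ms, gpu_ms)  # the slower side sets the frame time
    return 100.0 * gpu_ms / frame_ms


for res in RESOLUTIONS:
    print(f"{res:>5}: {gpu_util_at(res, cpu_ms=8.0, gpu_ms_at_1440p=6.0):.1f}% GPU")
```

With a hypothetical 8 ms of CPU work and 6 ms of GPU work at 1440p, the model gives roughly 42% GPU utilisation at 1080p, 75% at 1440p and 100% at 4K: the higher the resolution, the more the limit shifts onto the graphics card.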

Using a faster CPU, such as a Ryzen 5000 series processor, together with Smart Access Memory will help increase GPU utilisation.

I watched your videos and the game looks to be running well and smoothly, so what is the issue here?

Here is some Warzone footage I captured using the lowest settings at 4K resolution: Call of Duty Warzone - Testing for Stuttering with Low Settings + 6900 XT + 5950X - YouTube

I can test the same at 1440p if required; the only thing that would change is that my GPU utilisation would drop a little. But that's normal and expected.


Hi @Matt_AMD

I liked your video, awesome system you have! I would like to weigh in as well and am not speaking for the OP.

I agree with 90% of what you said, which is why I suggested that the OP experiment with Chill and FRTC to hit the sweet spot between a smooth, high-enough frame rate and possibly more responsive gaming, instead of aiming for the maximum frame rate.

But I would like to point out that there are strange scenarios, sometimes caused by bugs in our driver releases (I have seen that you sometimes have different, experimental driver versions than we do), in which not even one CPU thread/core is maxed out, yet the GPU is left underutilized, sometimes with stutters or uneven frame pacing on top.

Still, I agree that your gameplay looks solid, with 100% utilization for the most part, and it would be interesting to see whether the OP gets the same kind of performance at the same settings.

One last note: AMD's Performance Overlay does not always update as quickly as RivaTuner Statistics Server does, so it does not show major dips as often.

Kind regards


I had the overlay set to update every 0.75 seconds, which means it should update just over once a second. I typically use HWiNFO64 and RivaTuner for the overlay, updating every 0.5 seconds, but on this occasion I was using Radeon Overlay.

I should add that Radeon Overlay can be set to update faster than every 0.75 seconds; the lowest interval it can go to is 0.25 seconds, which should be more than enough for most folks.
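Why the update interval matters can be shown with a small simulation. It assumes an overlay that reports the average FPS over each refresh window (real overlays may compute their values differently), and it uses a made-up trace of steady 8 ms frames with a single 40 ms hitch; `overlay_readings` is a hypothetical helper.

```python
def overlay_readings(frame_times_ms: list[float], interval_s: float) -> list[float]:
    """Average FPS reported for each overlay refresh window.

    Assumption: the overlay counts frames until its update interval has
    elapsed and reports frames / elapsed time; real overlays may differ.
    """
    window_ms = interval_s * 1000.0
    readings: list[float] = []
    elapsed_ms, frames = 0.0, 0
    for ft in frame_times_ms:
        elapsed_ms += ft
        frames += 1
        if elapsed_ms >= window_ms:  # window complete: report its average FPS
            readings.append(1000.0 * frames / elapsed_ms)
            elapsed_ms, frames = 0.0, 0
    return readings


# Made-up trace: steady 8 ms frames (125 FPS) with one 40 ms hitch (25 FPS)
frame_times = [8.0] * 200
frame_times[100] = 40.0

for interval in (0.75, 0.25):
    lowest = min(overlay_readings(frame_times, interval))
    print(f"interval {interval} s: lowest reading {lowest:.1f} FPS")
print(f"worst single frame: {1000.0 / max(frame_times):.1f} FPS")
```

In this made-up trace, the 0.75 s windows report a lowest value of about 120 FPS and the 0.25 s windows about 109 FPS, while the hitch frame itself corresponds to 25 FPS. Neither averaged reading shows the full dip, but the shorter interval gets closer; a frame-time graph would show the hitch directly.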


Can I ask what GPU cooling you have? 47 °C at 800 RPM!


It has a 360 mm radiator for cooling, so that's why the temperatures and fan speeds are low.


😮

😲

(Surprised face emoji not working)

😮 No wonder the temps are so low! That's impressive, with a liquid cooler attached to the GPU. Sapphire cards look nice these days. My first Radeon card was a Sapphire Radeon R9 280 3GB Dual-X; now I am trying to take care of my MSI RX 480, because five years went by too fast and it still has a lot of potential, apart from some software bottlenecks.


Another question: then why does user @Geforsikus_2021, with an RX 6900 XT and an i9-9900K, get 100% usage?


You are using Windows 11, there are some known performance issues at the moment, so that is probably not helping you.

https://www.amd.com/en/support/kb/faq/pa-400