Hello, I am a Ph.D. student in Poland at the Silesian University of Technology, and I would like to start a general discussion about an idea for improving classification on a video stream. I call it "Multi-GPU & Multi-SET". People usually use Multi-GPU with "syncing" for Convolutional Neural Networks, but as far as I know nobody has tried using multiple GPUs for the classification itself.

So what is it about? It is about Multi-GPU detection and "combining". Imagine several You Only Look Once (YOLOv2 or YOLOv3) models: one trained only to detect cars and people, another trained on the COCO set, another on the VOC set, and so on. Once each of these AI/ML models has its own detection "specialization", why not run them on separate GPUs and combine the results? Many researchers work on making a single CNN handle as many classes as possible, but has anyone tried this Multi-GPU approach? I think the answer is no. So, can you discuss this with me here?
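To make the "combining" step a bit more concrete, here is a minimal Python sketch of how it could look. It is only an illustration under my own assumptions, not the darknet implementation: the detector functions are hypothetical stubs returning fixed boxes (in practice each would be a separate model bound to its own GPU), detections are `(label, score, (x, y, w, h))` tuples, and duplicates are merged with a simple same-label IoU check.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical stand-ins for specialized detectors. In a real setup each one
# would wrap a separate model (e.g. a YOLO network) running on its own GPU.
def detect_cars_people(frame):
    return [("car", 0.91, (10, 20, 50, 40)), ("person", 0.88, (70, 15, 20, 60))]

def detect_coco(frame):
    return [("dog", 0.80, (30, 50, 25, 25)), ("person", 0.75, (71, 16, 20, 59))]

def iou(a, b):
    """Intersection over union of two (x, y, w, h) boxes."""
    ax, ay, aw, ah = a
    bx, by, bw, bh = b
    ix = max(0, min(ax + aw, bx + bw) - max(ax, bx))
    iy = max(0, min(ay + ah, by + bh) - max(ay, by))
    inter = ix * iy
    union = aw * ah + bw * bh - inter
    return inter / union if union else 0.0

def combine(results, iou_threshold=0.5):
    """Merge per-model detections, keeping the best-scoring box when two
    models report the same label for (almost) the same region."""
    merged = []
    all_detections = [d for model_result in results for d in model_result]
    for label, score, box in sorted(all_detections, key=lambda d: -d[1]):
        duplicate = any(
            kept_label == label and iou(box, kept_box) >= iou_threshold
            for kept_label, _, kept_box in merged
        )
        if not duplicate:
            merged.append((label, score, box))
    return merged

def classify_frame(frame, detectors):
    """Run every detector on the same frame in parallel, then combine."""
    with ThreadPoolExecutor(max_workers=len(detectors)) as pool:
        results = list(pool.map(lambda detect: detect(frame), detectors))
    return combine(results)

detections = classify_frame(None, [detect_cars_people, detect_coco])
for label, score, box in detections:
    print(label, score, box)
```

Here the "person" box reported by both models is kept only once (the higher-scoring one), while "car" and "dog" each come from a single specialized model. How conflicts between models should really be resolved is exactly the kind of thing I would like to discuss.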

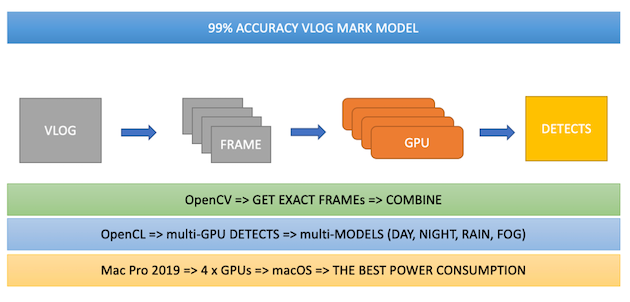

A visual diagram of this idea is here:

I really wanted to test it on an Apple Mac Pro, but I do not have enough budget, so I built a Hackintosh instead (see "Ph.D. Hanna (Hackintosh) is Ready" on iblog.isowa.io). It runs Multi-GPU on my darknet fork (GitHub - sowson/darknet: Convolutional Neural Networks on OpenCL on Intel & NVidia & AMD & Mali GPUs...), as shown here:

I have a post with an embedded video (sorry, it is very long, 55 minutes) here:

PhD Progress from May 27th 2020 Update Keynote – iblog.isowa.io

Btw, I improved clBLAS to calculate GEMMs in Multi-GPU mode here:

GitHub - sowson/clBLAS: a software library containing BLAS functions written in OpenCL for https://g...

The Pull Request for it is still waiting for approval:

Multi-GPU for GEMM for macOS and GNU/Linux by sowson · Pull Request #350 · clMathLibraries/clBLAS · ...

Thanks to that, I was able to run on 2 x Radeon VII under macOS Catalina for the research.
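For anyone wondering what a Multi-GPU GEMM means in principle, here is a small Python sketch of one common way to partition the work. This is an assumption for illustration only and not the actual clBLAS code from the Pull Request: the rows of A are split into blocks, each "device" (simulated here by a worker thread) computes its block of C = A x B, and the blocks are concatenated. The names `gemm_block`, `split_rows` and `multi_device_gemm` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

def gemm_block(a_rows, b):
    """Multiply a block of rows of A by the full matrix B."""
    b_columns = list(zip(*b))
    return [
        [sum(x * y for x, y in zip(row, column)) for column in b_columns]
        for row in a_rows
    ]

def split_rows(a, parts):
    """Split the rows of A into `parts` contiguous, nearly equal blocks."""
    size, extra = divmod(len(a), parts)
    blocks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        blocks.append(a[start:end])
        start = end
    return blocks

def multi_device_gemm(a, b, devices=2):
    """Compute A x B with one row block per simulated device."""
    blocks = split_rows(a, devices)
    with ThreadPoolExecutor(max_workers=devices) as pool:
        partial = list(pool.map(lambda block: gemm_block(block, b), blocks))
    # Row blocks come back in order, so concatenating them rebuilds C.
    return [row for block in partial for row in block]

a = [[1, 2], [3, 4], [5, 6]]
b = [[7, 8], [9, 10]]
print(multi_device_gemm(a, b, devices=2))
```

Each device needs the whole of B but only its own slice of A and C, so the devices do not have to exchange anything until the final result is assembled.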

There is one more thing: the GPUs should not be connected with AMD Infinity Fabric Link, because it combines the GPUs' memory, and then the OpenCL model I use (one context over multiple GPUs => multiple queues => multiple kernels) simply does not work.

So let's discuss ;-).

Thanks!