OpenGL & Vulkan

Bug with glsl sampler array

Hi,

I'm about to implement some sort of megatexturing and I've run into a bug.

I have four 2D RGBA8 textures, each 16k * 16k with a single mipmap level. In total they need 8GB of space. I allocate these textures with glTexStorage2D (the results are the same with glTexImage2D, though).

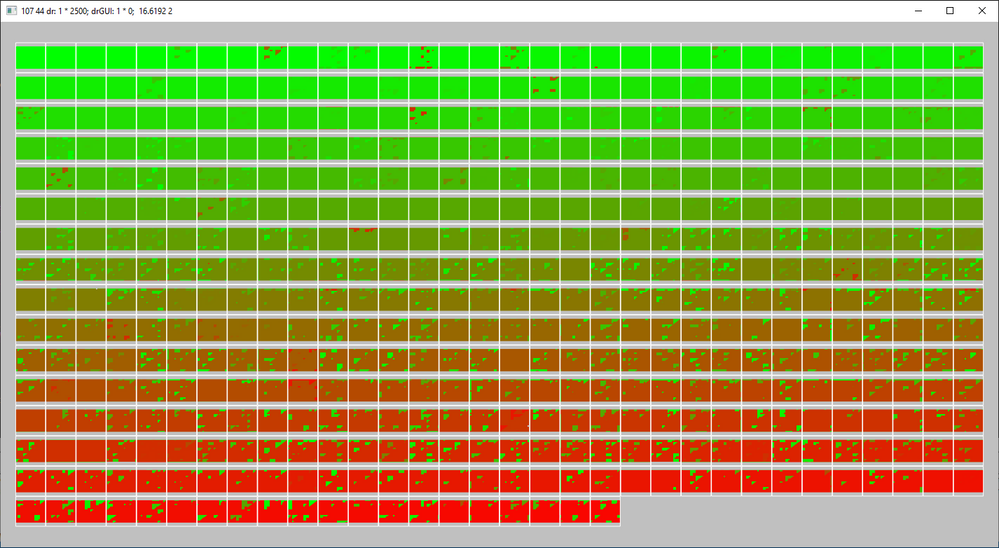

I use the 4 very big textures to hold 500 bitmaps of 1600x1200 each, which I upload with glTexSubImage2D. For testing, each image is a single solid color: the first image is green and the colors fade to red towards the last one. So when I allocate these images sequentially, the green ones land on MegaTexture#0 and the reddish ones on MegaTexture#3. Total size is around 3.8GB.

I have a geometry shader which produces the quads for the 500 images, decides which megatexture to use for each quad (flat int megaTexIdx), and calculates the texture coordinates of that particular bitmap inside the given megatexture. It does this by sampling a 5th, small texture that holds all the data needed to locate the bitmaps inside the megatextures. This part is working perfectly.

In the fragment shader I have the texcoords and the megaTexIdx like this:

in vec2 fTexCoord;

flat in int megaTexIdx;

The 4 megatextures (actually there are 16 in this array, but only 4 are used; the remaining ones are bound to megaTex0) are declared in a uniform array:

uniform sampler2D smpMega[MegaTexMaxCnt];

Then I do the actual sampling:

vec2 texSize = textureSize(smpMega[megaTexIdx], 0);

vec4 texel = texture(smpMega[megaTexIdx], fTexCoord/texSize); //note: fTexCoord is normalized manually here, this works fine

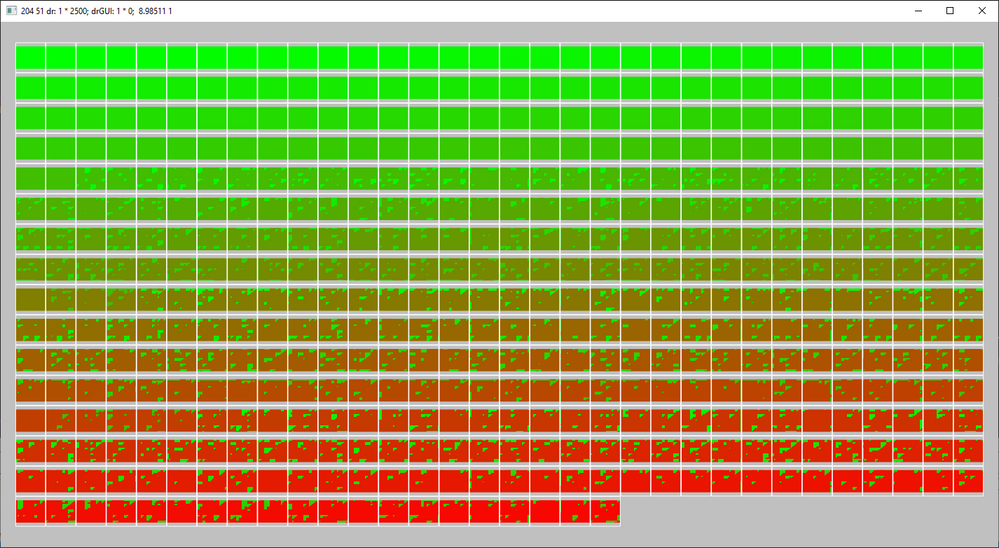

And finally I write the texel variable to fragColor and get the following result:

We can see all 500 pictures here. Each of them has a single color, and only the first ~260 are correct; those reside on MegaTexture[0]. Pictures 260..500 have these artifacts: those are samples from megatexture[0], not from the later megatextures.

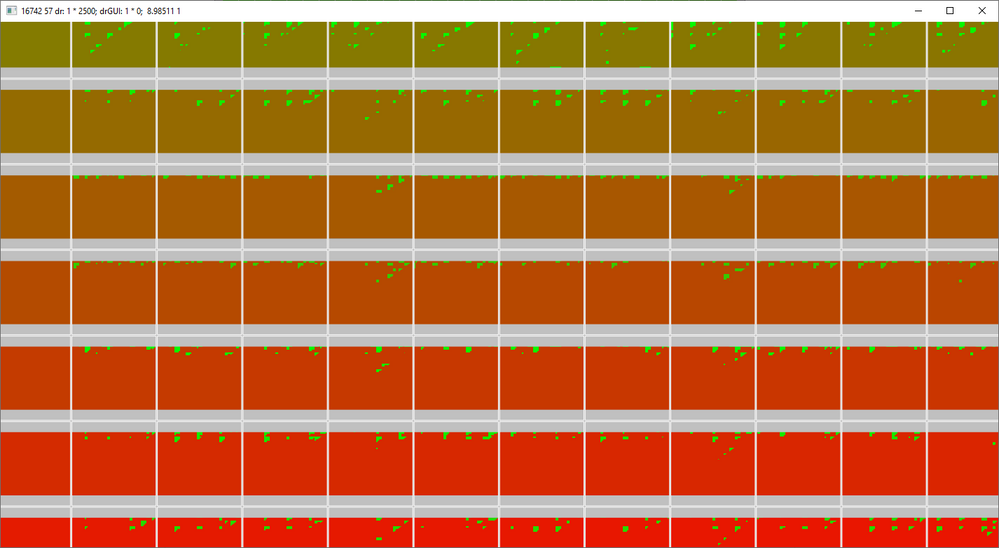

On this closer view we can see that the bugs are located mostly on the top side of the quads, and especially on the first triangle of the two.

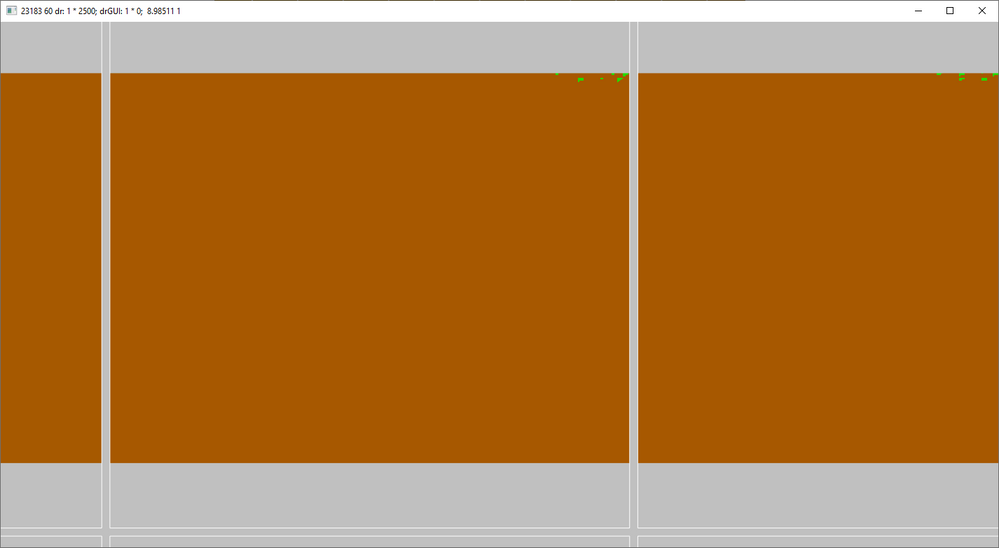

Zooming in close, almost all of the bugs are gone, but it's still bad. (And it flickers, based on the current random state of the GPU streams, I guess.)

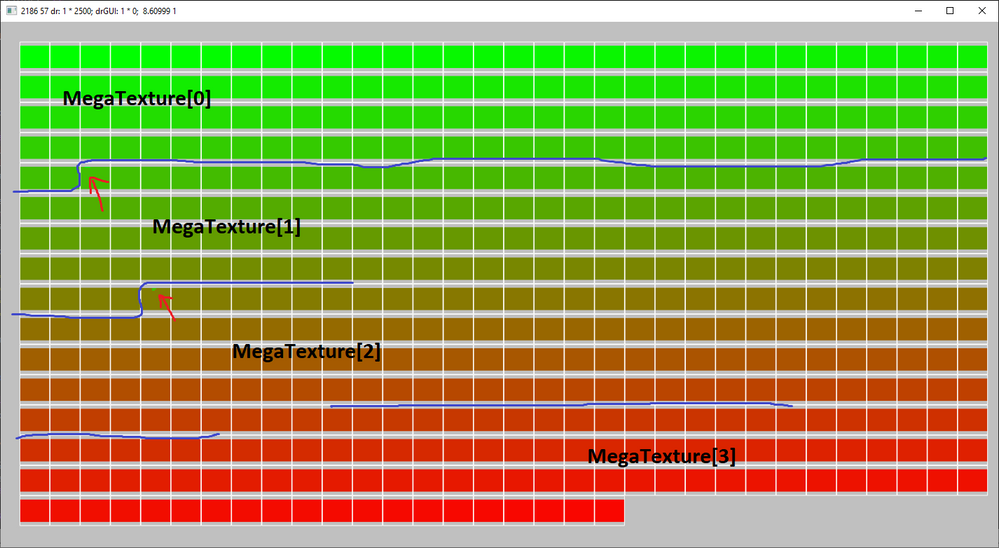

When I use nearest sampling instead of bilinear sampling, there are fewer errors, but the result is still not perfect:

vec4 texel = texelFetch(smpMega[megaTexIdx], ivec2(floor(fTexCoord)), 0);

I marked the bug locations. They are at the megatexture 'transitions'.

For the worst case I have to turn bilinear ON and also allocate the pictures in a random order (maximizing the number of those 'transitions'). Here's the result:

I have no knowledge of how the GCN chip is used for graphics, but I think it may be illegal to sample from different samplers within the same 'workgroup' or 'wavefront'.

I can only guarantee that within one primitive I use only one texture. But the artifacts happen at adjacent primitives, and when I use bilinear sampling on that uniform sampler array the number of bug locations is overwhelming.
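For context on the suspicion above: the GLSL specification (4.00 and later) says that an array of samplers may only be indexed with a dynamically uniform expression, otherwise the result is undefined. A flat per-primitive index is generally not dynamically uniform across one draw call, which would fit the artifacts at the 'transitions'. Below is a minimal sketch of one possible workaround, not a confirmed fix for this driver: branch on the index and sample with constant array indices. The #version line, the macro name SAMPLE_MEGA and the out declaration are assumptions for illustration. The derivatives are taken before the branch and passed to textureGrad, because implicit derivatives inside non-uniform control flow are undefined as well.

```glsl
#version 420 core

#define MegaTexMaxCnt 16      // array size as described in the post

in vec2 fTexCoord;            // texel-space coordinate inside the selected megatexture
flat in int megaTexIdx;       // per-primitive megatexture index

uniform sampler2D smpMega[MegaTexMaxCnt];

out vec4 fragColor;           // assumed output declaration

// Hypothetical helper: samples megatexture i using a constant array index.
// Coordinates and gradients are scaled from texel space to normalized space.
#define SAMPLE_MEGA(i) { \
    vec2 s = vec2(textureSize(smpMega[i], 0)); \
    texel = textureGrad(smpMega[i], fTexCoord / s, dx / s, dy / s); }

void main()
{
    // Compute screen-space derivatives before any divergent branch.
    vec2 dx = dFdx(fTexCoord);
    vec2 dy = dFdy(fTexCoord);

    vec4 texel = vec4(0.0);
    switch (megaTexIdx) {
        case 1:  SAMPLE_MEGA(1) break;
        case 2:  SAMPLE_MEGA(2) break;
        case 3:  SAMPLE_MEGA(3) break;
        default: SAMPLE_MEGA(0) break;   // index 0 and any unused slot
    }
    fragColor = texel;
}
```

Only the four slots that are actually used get a case here; more cases can be added the same way. The cost is a branch per fragment, but every sampler access uses a constant index, which the specification always allows.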

My question is: Is there a proper way to do this, to access 4GB of texture data from a single draw call? Or is it illegal/undefined behaviour? How does the game Rage do it, for example? If I use only one 16k*16k texture, which is 1GB of data, everything is fine; the problem appears when I use 4 of those textures and multiplex them dynamically.

Note: I'd like to use multiple textures because I want to handle them dynamically. So I start with 1024x1024, then it can grow to 2048x1024, then 2K*2K, and so on. These resize operations get more and more expensive on large textures, which is why I want to have many smaller ones.

Tested on R9 Fury X,

memory: 8GB HBM,

Radeon Software Version 18.12.3

Workload: Graphics

Sorry for the long description, Thank you in advance!

Hi dorisyan, could you please help resolve the above issue?

Thanks.

Hi realhet, Thanks for your report, I will investigate this issue as soon as possible.

Hi realhet, could you please provide a minimal code sample that reproduces this problem?

Hi,

Yes, I will extract it on the weekend. One small problem: it will be in dlang, but the GLSL shaders will be the same anyway.

Thanks in advance

(Note: I learned that there are sparse 2D texture arrays.

But this array of independent samplers would be better because it lets me allocate 4 large textures with different sizes and color channels: one for RGB color textures, one for the RGBA ones, one for 16-bit heightmaps, etc.

And without this bug, I could access all of them (by index) from a single shader.)
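For comparison, here is a minimal sketch of what the texture-array variant mentioned in the note could look like in the fragment shader. It assumes the megatextures are stored as layers of one 2D array texture; the uniform name smpMegaArray and the #version line are hypothetical. The layer is just part of the texture coordinate, so it is not subject to the dynamically-uniform rule that applies to indexing an array of samplers.

```glsl
#version 420 core

in vec2 fTexCoord;            // texel-space coordinate inside the selected layer
flat in int megaTexIdx;       // per-primitive layer index

uniform sampler2DArray smpMegaArray;   // hypothetical: one layer per megatexture

out vec4 fragColor;           // assumed output declaration

void main()
{
    // textureSize returns (width, height, layer count) for a 2D array sampler.
    vec2 layerSize = vec2(textureSize(smpMegaArray, 0).xy);

    // The third coordinate selects the layer; it may differ per primitive.
    fragColor = texture(smpMegaArray, vec3(fTexCoord / layerSize, float(megaTexIdx)));
}
```

The trade-off is the one described in the note: all layers of an array texture share one size and one internal format, so mixing RGB, RGBA and 16-bit heightmap storage would still need separate textures.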