General Discussions

I'm selling my AMD cards

or trading them for NVIDIA cards.

I need drivers that work, and AMD doesn't have them.

Might want to check the nVidia forums before you do, it's not all smiles and rainbows over there.

$1 bid to start you off.

Good luck, I can give you 5 dollars.

Darn, you are cheap! I will offer $5.01! Seriously though, what does he have? He didn't say! Gonna be hard to sell or trade it.

He is just trolling, with his computer full of bloatware, and crying about the driver.

Just the usual stuff.

HAHAHAHAHAHA! No.

I want to do the same thing.

What are you thinking of selling?

You can try selling your AMD card on eBay or Amazon Marketplace.

Example: I had an AMD HD 6850 GPU card and upgraded to an AMD HD 7850 GPU card. I didn't need two cards, so I went to Amazon.com and opened an account to sell my HD 6850. Within two days I had a buyer, to whom I sold the card.

I'm trying to sell my cards, but nobody wants AMD. Those guys prefer a 1050 Ti over an RX 570.

Advertise your AMD GPU card on Amazon Marketplace at the price you want for it. I am sure there are as many buyers looking for AMD products as there are for Nvidia.

Here is Amazon's FAQ about selling products on their website: Sell Your Stuff at Amazon.com

Hi,

I guess you are excited by the RTX 2080Ti, 2080, 2070 etc.

I don't blame you.

It looks like it will be the most interesting GPU launch since the GTX 1080, about 2 years ago.

Those cards are still beating RX Vega 64 AIB cards, and they run at lower power, overclock better and have smaller form factors.

I am sure I will get flak for saying that and people will say "DX12", or "Vulkan" - well, there are not many of those games out there.

Based on the last set of benchmarks I have seen and run, Nvidia have caught up in Vulkan performance.

Nvidia are also catching up in OpenCL 2.0.

I will be watching the Nvidia Event on Twitch in 17 hours: Twitch

I really do not want to spend my money on Nvidia GPUs, but they have better cards, even with their existing 1000 series, and the Nvidia cards I run and test have better / more stable drivers on Windows and Linux.

I think AMD were very lucky that the existing discrete RX Vega Cards are very good at Ethereum Mining.

Right now it looks like some retailers can't give RX Vega 64s away... PowerColor RX Vega 64 Red Devils are now selling for 400-450 new with over 100 worth of free games. I was tempted to buy one at that price, but then where would I fit that thing? It is 2.75 slots high and blocks other PCIe slots.

I am better off sticking with a pair of R9 Nanos.

It is even too big, and draws too much power, to fit in PowerColor's own Thunderbolt 3 eGPU box.

Maybe the price is tanking because of the Nvidia RTX launch; maybe people are holding back to see if GTX 1080 Ti prices start to drop.

Really, AMD need to do something to get back to being competitive in high-end gaming.

I think the last time they were was with the R9 Fury X / Fury / Nano cards, which is where I have stayed.

The GTX 1080Ti is already 30% faster than an RX Vega 64 AIB card.

I have not seen any RTX 2080 Ti or 2080 benchmarks leaked yet; however, based on the reported additional CUDA cores and clock speeds alone, I think they might push RX Vega 64 down into mid-range performance in comparison.

Bye.

Nothing like giving a glowing positive report on AMD's competitor's GPU cards in an AMD forum.

Just teasing you.

Hi,

I am only giving honest feedback based on what I see and I experience.

I really do not want to spend my money with Nvidia.

Not because of problems I have had with their GPUs.

It has got to the point where staying with AMD cards has hurt the project I am working on.

Even if I had waited for the late RX Vega launch, I think it took until March 2018 before AIB Vega 64 cards had properly working drivers and BIOS.

I am really hoping that AMD can launch a Vega 64 on 7nm with better HBM2 soon.

I do need a more modern AMD GPU than my R9 Fury X/Fury/Nanos, so I am looking to purchase a PowerColor RX Vega 56 Red Dragon if the price is low enough. Looking at benchmarks, they are not much faster than an R9 Fury X. However, they do have 8GB of VRAM, and the High Bandwidth Cache is of interest to me. Some new architecture features are also of interest to me for programming reasons. If I could still buy a new RX Vega 64 Liquid card at a reasonable price, I might.

I am not purchasing any more second-hand GPUs, even though R9 Nanos are now selling for £/$/Euro 200; you might want to take a look at those yourself.

I am keeping my AMD cards FYI.

Bye.

"PowerColor RX Vega 64 Red Devils are now selling for 400-450 new with over 100 worth of free games."

Where is this?

This may be of interest - if it is true:

PNY accidentally publishes RTX 2080Ti XLR8 Landing Page - all for $1000 USD | ProClockers

So based on that, let's see if I can predict how much faster that card could be versus a GTX 1080 Ti; then we could work out where it will likely stand versus an RX Vega 64 Liquid, which is faster than any RX Vega 64 AIB card but ~30% slower than a GTX 1080 Ti.

OK, so getting back to this PNY "leak":

- 4,352 CUDA cores
- 11GB of GDDR6 on a 352-bit bus
- USB Type-C
- 285W TDP
- Real-time ray tracing
- $1000 USD

RTX 2080 Ti

| Spec | Value |
|---|---|
| Memory bandwidth | 616 GB/s |
| Core clock | 1350 MHz |
| Boost clock | 1545 MHz |

It is a 2-slot card.

OK, I think this new RTX 2080 Ti will be up to 21.4% faster based on CUDA core count (4352 / 3584), assuming perfect scaling.

The GTX 1080 Ti TDP is 250 W; this 2080 Ti is slightly higher at 285 W, so depending on the cooling solution the core clock may well run lower than on a GTX 1080 Ti.

The PNY card shows a core clock of 1350 MHz and a boost clock of 1545 MHz.

Many GTX 1080 Ti aftermarket cards run higher than that.

The GTX 1080 Ti reference core clock is 1480 MHz and its boost clock is 1582 MHz.

So we need to scale down for the lower core and boost clocks.

Downscale on the pessimistic side, based on the core clock value (1350 / 1480) = 0.912

Downscale on the optimistic side, based on the boost clock value (1545 / 1582) = 0.977

That makes the RTX 2080 Ti 10.8% faster than a GTX 1080 Ti based on the pessimistic core clock value (that low clock may only be needed when the RTX/Tensor cores are being powered up).

But let's stick with 10.8% faster than a GTX 1080 Ti.

Next I will think about the likely overall performance improvement from the RTX 2080 Ti's better memory bandwidth, a factor of 616/484 = 1.27x.
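
The scaling steps above can be reproduced with a few spec ratios. This is a minimal Python sketch that assumes, as the post does, perfectly linear scaling with CUDA core count and clock speed; the dictionary and function names are hypothetical, and the RTX 2080 Ti figures are the PNY-listing values quoted in this thread.

```python
# Spec-ratio estimate of RTX 2080 Ti vs GTX 1080 Ti.
# Assumption: performance scales linearly with core count and clock,
# which real GPUs rarely achieve, so treat the results as rough, probably optimistic.
GTX_1080_TI = {"cuda_cores": 3584, "base_mhz": 1480, "boost_mhz": 1582, "bandwidth_gbs": 484}
# RTX 2080 Ti values from the PNY listing quoted in the thread (hypothetical dict name).
RTX_2080_TI = {"cuda_cores": 4352, "base_mhz": 1350, "boost_mhz": 1545, "bandwidth_gbs": 616}


def spec_ratio(key: str) -> float:
    """Return the RTX 2080 Ti spec divided by the GTX 1080 Ti spec."""
    return RTX_2080_TI[key] / GTX_1080_TI[key]


core_factor = spec_ratio("cuda_cores")          # extra CUDA cores
pessimistic_clock = spec_ratio("base_mhz")      # lower base (core) clock
optimistic_clock = spec_ratio("boost_mhz")      # slightly lower boost clock
bandwidth_factor = spec_ratio("bandwidth_gbs")  # faster GDDR6 memory

pessimistic = core_factor * pessimistic_clock
optimistic = core_factor * optimistic_clock

print(f"core factor:       {core_factor:.3f}")
print(f"pessimistic clock: {pessimistic_clock:.3f}")
print(f"optimistic clock:  {optimistic_clock:.3f}")
print(f"bandwidth factor:  {bandwidth_factor:.2f}")
print(f"estimate: {pessimistic:.3f}x (pessimistic) to {optimistic:.3f}x (optimistic)")
```

With these inputs the core factor comes out at about 1.214 and the pessimistic estimate at about 1.108, i.e. the 10.8% figure above.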

You are so smart.....

Here is my guesstimate of how much faster the RTX 2080 Ti will be.

CUDA cores factor (4352 / 3584) = 1.214285714

Pessimistic factor using the lowest RTX 2080 Ti core clock (1350 / 1480) = 0.912162162

Pessimistic estimate of the performance increase due to higher memory bandwidth = 1.05072727273

Performance improvement versus a GTX 1080 Ti = 1.1638123

Performance versus an RX Vega 64 Liquid = 1.512956 times as fast.

So I reckon that the RTX 2080 Ti will be, at worst, 51.3% faster than an RX Vega 64 Liquid.
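
The multiplication chain above can be checked with a short Python sketch. It assumes, as the post does, that the three factors are independent and simply multiply; the 1.0507 bandwidth factor is the poster's own discounted guess (not derived here), and the 1.3 multiplier encodes the thread's claim that a GTX 1080 Ti is about 30% faster than an RX Vega 64. Variable names are hypothetical.

```python
import math

# Per-factor guesses from the post (RTX 2080 Ti relative to GTX 1080 Ti).
# Assumption: the factors are independent and combine multiplicatively.
factors = {
    "cuda_cores": 4352 / 3584,          # core count ratio
    "core_clock": 1350 / 1480,          # pessimistic: base clock ratio
    "memory_bandwidth": 1.05072727273,  # poster's discounted bandwidth gain
}

vs_1080_ti = math.prod(factors.values())

# Assumption from the thread: GTX 1080 Ti ~= 1.3x an RX Vega 64 Liquid.
GTX_1080_TI_VS_VEGA_64_LIQUID = 1.3
vs_vega_64_liquid = vs_1080_ti * GTX_1080_TI_VS_VEGA_64_LIQUID

print(f"RTX 2080 Ti vs GTX 1080 Ti:       {vs_1080_ti:.4f}x")
print(f"RTX 2080 Ti vs RX Vega 64 Liquid: {vs_vega_64_liquid:.4f}x")
```

`math.prod` needs Python 3.8 or later; on older versions, `functools.reduce(operator.mul, ...)` does the same job.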

Oh yeah? Well, what's the airspeed velocity of an unladen swallow?

Don't know ...

Are you watching the Nvidia event? They are just talking about the 2080 Ti now.

Still don't know how fast it is yet ...

No. Got a link?

Erm ... not sure I should do that.

Last time I pointed to it above, this thread got locked and I could not see it or access it.

I thought I was on the naughty step.

The event is on Youtube and Twitch.

There were about 12.5 million people viewing it on Twitch, which is pretty amazing.

It just finished.

I did miss the start of the presentation but it is heavily discussing the RTX (Ray Tracing) and the AI blocks on the GPU.

They have come up with a new performance metric based on how many ray tracing operations can be done on the new 2080 Ti versus the previous 1080 Ti and earlier generations. No mention of AMD cards from what I see; however, it is strange for Nvidia to launch the 2080 Ti along with the 2080, so maybe they are worried about new AMD cards on the way.

I guess we will have to wait for benchmark measurements to see how fast these cards really are.

African or European?

Anandtech just posted this: https://www.anandtech.com/show/13249/nvidia-announces-geforce-rtx-20-series-rtx-2080-ti-2080-2070 …

It has some single-precision performance data.

The RTX2080Ti = 13.4 TFLOPs.

The GTX1080Ti = 11.3 TFLOPs.

That is an 18.58% performance improvement, which is not far off my pessimistic 16.4% estimate, which assumes the card runs at a 1350 MHz core clock.

GTX 1080 Ti in comparison:

| Spec | Value |
|---|---|
| CUDA cores | 3584 |
| Memory | 11 GB GDDR5X on a 352-bit bus |
| Boost clock | 1582 MHz |
| Memory speed | 11 Gbps |
| Memory bandwidth | 484 GB/s |
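
The 484 GB/s bandwidth follows from the bus width and memory speed listed above. A minimal Python sketch of the arithmetic; the function name is hypothetical, and the 14 Gbps GDDR6 data rate for the RTX 2080 Ti is not stated in the thread, it is the per-pin rate implied by the PNY listing's 616 GB/s on a 352-bit bus.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s.

    bus_width_bits: memory bus width in bits.
    data_rate_gbps: effective per-pin data rate in Gbit/s.
    Dividing by 8 converts bits to bytes.
    """
    return bus_width_bits * data_rate_gbps / 8


gtx_1080_ti = memory_bandwidth_gbs(352, 11)  # 11 GB GDDR5X at 11 Gbps
rtx_2080_ti = memory_bandwidth_gbs(352, 14)  # GDDR6; 14 Gbps implied by 616 GB/s

print(f"GTX 1080 Ti: {gtx_1080_ti:.0f} GB/s")
print(f"RTX 2080 Ti: {rtx_2080_ti:.0f} GB/s")
print(f"ratio: {rtx_2080_ti / gtx_1080_ti:.2f}x")
```

Both cards use a 352-bit bus, so the 1.27x bandwidth gain discussed in the thread comes entirely from the faster memory.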

Don't take this the wrong way, but maybe you should be posting all this data on the RTX 2080 on the Nvidia forum, since most or all of the users there have Nvidia GPU cards and might be interested in upgrading later on.

Posting your research on the RTX 2080 there would give those users an idea of the specs of the new GPU card.

By posting here on the AMD forums, your positive review might persuade some upset AMD users to switch to Nvidia GPU cards, which is not a good thing for AMD.

Just my own opinion.

I realize that you are just giving your opinion of the new Nvidia GPU card, but it sounds more like an advertisement for Nvidia at the same time.

Hi,

I have not been doing a review, I am just interested in seeing where these new Nvidia cards are at versus AMD Vega 64.

Bye.

:sighs:

NVIDIA architecture doesn't scale linearly because of how it works; the same is true of Intel architecture, but they're very close in basic design.

Now, as a key point of note:

Frequency (MHz) x core count x 2 (ops/cycle) = floating-point operations per second (in MFLOPs)

e.g.

1582 x 3584 x 2 = 11,339,776 MFLOPs or 11.34 TFLOPs [GTX 1080Ti]

1542 x 4352 x 2 = 13,421,568 MFLOPs or 13.42 TFLOPs [RTX 2080Ti]

This is a +15.5% Peak Performance Difference.

Of course, if we compare base clocks, we get 10.61 TF vs. 11.75 TF... which is only a +9.7% baseline performance difference.
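
The FLOPS formula above can be written as a small Python function. This is a sketch, not a benchmark: the 2 ops/cycle assumes every CUDA core retires one fused multiply-add per clock, and the clocks are the ones used in the post (1542 MHz boost for the RTX 2080 Ti; the PNY listing quoted earlier in the thread gives 1545 MHz). The function and dictionary names are hypothetical.

```python
def peak_tflops(clock_mhz: float, cuda_cores: int, ops_per_cycle: int = 2) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    clock_mhz x cuda_cores x ops_per_cycle gives MFLOPS (an FMA counts as 2 ops);
    dividing by 1,000,000 converts MFLOPS to TFLOPS.
    """
    mflops = clock_mhz * cuda_cores * ops_per_cycle
    return mflops / 1_000_000


# Clocks in MHz as used in the post above.
CARDS = {
    "GTX 1080 Ti": {"cores": 3584, "base": 1480, "boost": 1582},
    "RTX 2080 Ti": {"cores": 4352, "base": 1350, "boost": 1542},
}

for name, spec in CARDS.items():
    base = peak_tflops(spec["base"], spec["cores"])
    boost = peak_tflops(spec["boost"], spec["cores"])
    print(f"{name}: {base:.2f} TF at base clock, {boost:.2f} TF at boost clock")
```

These are theoretical peaks only; real clocks vary with cooling and power limits, which is exactly the uncertainty discussed in the posts.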

Keep in mind that GTX 980Ti to GTX 1080Ti was a 50-55% Performance Difference.

We can only guess how well this will actually clock... remember that it is possible to coax a 2050 MHz boost frequency out of a GTX 1080 Ti AIB card.

Yet remember we're talking about a balanced heat dissipation profile, so while 12nm (14nm++) does provide the potential for frequency improvement (as showcased by 2nd Gen Ryzen), to achieve this, AMD didn't change the die layout or dissipation profile.

In fact, I'd wager that the higher-clocked (OC) "Founders Editions", which also cost more, are likely to be very close to the maximum overclock.

Keep in mind that the AIB "stock" clocks are 1350 MHz base / 1545 MHz boost, while the FE boost is 1635 MHz.

Of course, there is an argument to be made that this doesn't mean anything, given the GTX 1080 Ti is capable of up to 2000 MHz over its (effectively 1600 MHz) stock boost... but in this case I'm not so sure, as the Titan V tended to top out at 1780 MHz, and bear in mind that these were heavily binned chips, as there was very little variation between review frequencies and those in the Futuremark database.

Remember that the Titan V has a very similar boost clock.

As such, I'd be surprised if you can get more than a 100-150 MHz stable OC on the RTX series.

The result is that we have the £1,100 RTX 2080 Ti [14.73 TF] vs. the £670 GTX 1080 Ti [15.62 TF]... and here's the thing: if we actually compare the potential performance of the £750 RTX 2080 [10.60 TF] and £470 GTX 1080 [8.87 TF], or the £570 RTX 2070 [7.88 TF] vs. the £380 (£420) GTX 1070 (Ti) [6.46 TF (8.19 TF)], it just ends up looking worse and worse.

Especially given that *all* of the above 10-series cards are capable of hitting 2000 MHz, very noticeably improving their performance.

There is absolutely zero guarantee (as with Volta) that Turing will actually clock much beyond its stock boost.

How does that stack up against RX Polaris or Vega (GCN 4th/5th gen architecture)? Well, given that the RX VEGA 56 is effectively on-par with the GTX 1070Ti in *most* titles and the RX VEGA 64 is effectively on-par with a 1080 with Overclocking again in *most* titles. There will be select titles, such as those using Epic's Unreal Engine 4 (which is heavily co-developed / produced by NVIDIA today), where, sure... AMD graphics just gets thrashed.

Still, in most other scenarios it holds its own.

And with these cards *finally* back to MSRP in *most* regions, price-to-performance is really a coin-flip decision between them and the NVIDIA equivalents. This means performance-wise they're also going to be relatively competitive with the RTX 20-series. What's more important to keep in mind is that while the GTX 10-series struggles with Radeon Rays (but is at least capable of using it, unlike RTX, which requires specialist hardware), AMD hardware doesn't... *ANY* GCN 3rd gen or better, which is what most people have.

Navi almost certainly (going by the patents filed) is also going to have notable acceleration not just for Radeon Rays workloads, but for machine intelligence as well as brute-force traditional pipelines... i.e. we could actually see Navi return TeraScale 3 DirectX 11 performance to the GCN architecture, without compromising asynchronous compute.

NVIDIA might be betting heavily on real-time ray tracing; AMD isn't... and frankly this makes more sense, because when you bet on "new" methods, you then have to convince developers to actually adopt them, which, history shows, they don't. Not unless you pay them... A LOT.

I might see about putting my nose to the grindstone with Radeon Rays over the next month or so, see if I can't coax my RX 480 to achieve some comparable results to what NVIDIA RTX is showcasing because frankly I actually believe it's more than possible to achieve some similar results... and would be fun to achieve what NVIDIA will no doubt try to claim is "Impossible" without their Revolutionary new Hardware.

Off topic.

Not to the person I was replying to. And frankly, the base topic of "I want to trade my AMD card for an NVIDIA card" on the AMD support forums is pointless, as there will simply not be any takers... even for performance cards. Unless he's willing to trade down, he's simply not gonna have any takers.

Whereas a comparison against "next gen" NVIDIA is actually somewhat pertinent and likely more interesting to those on these forums.

"and would be fun to achieve what NVIDIA will no doubt try to claim is "Impossible" without their Revolutionary new Hardware."

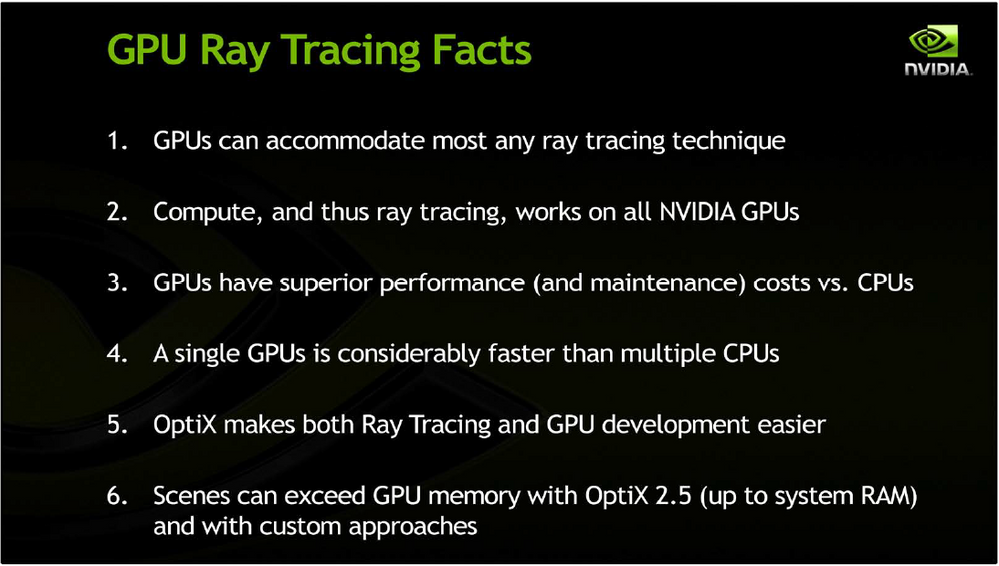

I think it is already pretty widely known that it is possible using more standard compute approaches. Interestingly, NVidia themselves had talked about ray tracing on Kepler using compute. Here is a slide from their SIGGRAPH 2012 slide deck.

So what happened? I think I have touched on it on this forum before, but most of what NVidia does with gaming hardware is through the lens of maintaining different product stacks between professional (scientific and modeling) hardware and gaming. They learned that lesson the hard way during the Fermi/Kepler days, when the original Titan Black was purchased as an entry-level professional card, effectively cannibalizing the much higher margin Quadro segment. After that, NVidia effectively scaled back the compute functionality in Maxwell/Maxwell 2/Pascal etc., so as to maintain the distinction between professional and gaming cards while maintaining their bottom line in both market segments. Since the compute functionality was dropped, so was ray tracing, and we never heard about it for six years.

As DX12 and Vulkan appeared, NVidia developed Gameworks. DX12 and Vulkan can both utilize the higher compute functionality to generate spectacular graphics effects. Gameworks is designed to achieve those same effects using more standard gaming hardware. So rather than change their hardware and again make their gaming cards more attractive to professional buyers, they try to steer developers toward other approaches to enhancing graphics, which allow NVidia to maintain a differentiated product stack.

With the launch of DXR, Microsoft is bringing ray tracing to the DX12 API. By NVidia's own admission, this can be done via compute. The RTX cards, then, are just more of the NVidia modus operandi: NVidia developing a specific thing to do something that can be done with more traditional compute hardware. There is already an AIDA64 ray-trace benchmark that utilizes an FP64 engine for ray tracing, so why not go that route? Because an RTX core isn't going to work with professional software, so again the product stack is maintained. And if NVidia didn't put out an alternate solution for ray tracing, then developers might start to do it via compute (as NVidia suggested in 2012).

As you may have noticed, my blurb above is framed so as to portray NVidia's interest as protecting their professional card profits. That may be the case, but you could also argue, effectively I might add, that creating separate product stacks makes the cards unattractive to professional users and keeps the gaming hardware in the hands of the intended audience.

Regardless of specifically why NVidia chose the path they did, the outcome is that games tend not to run as well on AMD hardware. Since AMD is a smaller company, they can't afford to make a different die for each market space. Vega, ultimately, is a single die designed to serve all the different market segments, which it does surprisingly well. But with all the extra compute cores on those dies, intended for professional software, they generate far more heat in traditional gaming workloads. AMD-based cards would actually fare better if game developers utilized that compute hardware just hanging out on the die. But that is exactly what NVidia doesn't want: if developers start to use it, NVidia would need to add it back in to stay competitive, which in turn would once again blur the lines between the professional and gaming market segments.

And so the fight has been for the developers. AMD has opted to put their hardware in consoles, and NVidia uses Gameworks. AMD hoped that developers would develop a game for consoles first (and they do) and then port it directly to PC. But usually, developers care so little about the PC port that they farm it out to a separate studio operating on a compressed timetable for release (see the Mortal Kombat X and Arkham Knight PC releases). Those secondary studios then have to utilize tools like Gameworks to make the PC title launch on schedule, leaving AMD discrete graphics users with a massively deoptimized title. All that does is damage AMD's mindshare in the PC gaming community, as console users rarely actually know what is inside their machine.

But regardless of intent, the end result is undeniable. NVidia will actually leverage their market position to hold back the advancement of gaming. We could have had ray-tracing via compute six years ago, but NVidia's desire to maintain product differentiation hamstrung that effort. Only now that ray-tracing could advance down the compute avenue without NVidia do they throw their weight behind a different solution. And of course, the RTX solution only works on their new lineup of cards, whereas a compute based solution would work on their older hardware and AMD hardware as well.

Nvidia has so much clout that everyone listens. Well, they worked hard for it (Riva TNT vs. S3). But AMD also did their homework and worked hard: https://gpuopen.com/announcing-real-time-ray-tracing/, https://www.dsogaming.com/videotrailer-news/amd-shows-how-you-can-add-real-time-ray-tracing-effects-... . The Vulkan and GPUOpen push was not appreciated, even though it was supposed to simplify the process.

Hi,

RE: :sighs

Thank you.

RE: This is a +15.5% Peak Performance Difference.

I do not understand where that % figure comes from.

Here is how I calculated the percentage difference:

((13.4 - 11.3) / 11.3) * 100 = (2.1 / 11.3) * 100 = 0.185840708 * 100 = 18.584%

That is an 18.584% performance increase of the RTX 2080 Ti versus the GTX 1080 Ti, according to the figures reported by Anandtech.
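
The 18.58% and 15.5% figures can be reconciled by which value the gap is divided by. One plausible reading, an assumption since the :sighs: post does not show its working, is that 15.5% divides the difference by the RTX 2080 Ti's figure instead of the GTX 1080 Ti's. A minimal Python sketch (function names are hypothetical):

```python
def pct_increase(new: float, old: float) -> float:
    """How much faster `new` is than `old`, relative to `old` (the usual convention)."""
    return (new - old) / old * 100


def pct_of_new(new: float, old: float) -> float:
    """The same gap relative to `new`, i.e. how much slower `old` is than `new`."""
    return (new - old) / new * 100


# Anandtech single-precision figures (TFLOPS) quoted in the thread.
print(f"Anandtech: +{pct_increase(13.4, 11.3):.2f}%")

# Peak figures from the :sighs: post (TFLOPS).
rtx_2080_ti, gtx_1080_ti = 13.42, 11.34
print(f"relative to GTX 1080 Ti: +{pct_increase(rtx_2080_ti, gtx_1080_ti):.1f}%")
print(f"relative to RTX 2080 Ti: {pct_of_new(rtx_2080_ti, gtx_1080_ti):.1f}%")
```

Only the first convention answers "how much faster is the new card", which is the question both posts are asking.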

I know how to calculate FLOPS.

I based my "guesstimate" of gaming performance improvement on the increased memory bandwidth, and factored in how much a memory overclock helps benchmark performance on previous Nvidia cards I have looked at. I did not talk about overclocking anything.

My guesstimate may be way off.

I think the RTX 2080 Ti will be capable of a 16.4% graphics benchmark performance improvement versus the GTX 1080 Ti.

The improvement in actual games may be lower. It often is.

We will only find out when the benchmark numbers are out.

RE: Well, given that the RX VEGA 56 is effectively on-par with the GTX 1070Ti in *most* titles and the RX VEGA 64 is effectively on-par with a 1080 with Overclocking again in *most* titles

I consider *most* titles to be DX11.

I trust reviewers like Gamers Nexus, Hardware Unboxed, etc. They look at DX12 and Vulkan titles, which should be in AMD's favor.

They seem to think that GTX1080 still beats Vega 64 and GTX1070Ti beats Vega 56.

I do not know where you get your pricing from, but Vega 56 is still up against GTX1080 here and Vega 64 had generally been up against GTX1080Ti.

Prices have tanked this week though, for a few AMD cards, plus AMD has a free game promotion. Nvidia are also doing game promotions.

The Vega 56 AIB cards I am interested in, 2-slot cards, are very difficult to get. They are on pre-order even now.

I suggest you look at this review, and then tell me things are great.

Vega 56 - One Year Later vs GTX 1070 & 1070 Ti - YouTube

I am in process of purchasing a Vega 56.

I saw the above.

I stopped.

I have asked the reviewer some questions.

As for Navi, when is it turning up? 2020? I thought it was a mid-range RX 580 replacement?

RE: I might see about putting my nose to the grindstone with Radeon Rays over the next month or so, see if I can't coax my RX 480 to achieve some comparable results to what NVIDIA RTX is showcasing because frankly I actually believe it's more than possible to achieve some similar results... and would be fun to achieve what NVIDIA will no doubt try to claim is "Impossible" without their Revolutionary new Hardware.

Go for it.

Please create a separate post, and report the results.

It will be very interesting to see how you will be able to run Shadow of the Tomb Raider with Radeon Rays.

Bye.

Hi,

Here is a very recent look at Vega 64 AIB cards versus GTX1080.

Can Custom Vega 64 Beat The GTX 1080? 2018 Update [27 Game Benchmark] - YouTube

GTX1080 wins.

Bye.

Hi,

I just saw this article.

Nvidia GeForce RTX 2080 Ti hands on review | TechRadar

It is looking rather positive w.r.t. performance, if you ask me.

Bye.

I can post links too!

Don't Buy the Ray-Traced Hype Around the Nvidia RTX 2080 - ExtremeTech