Archives Discussions

- AMD Community

- Communities

- Developers

- Devgurus Archives

- Archives Discussions

- Parallel execution of kernels

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Parallel execution of kernels

I'm trying to execute kernels in parallel. Currently not for real use, but just to understand the possibilities. I'm running on an 460 GPU, windows 10, 64 bit.

My test set-up is that I have a simple kernel that does not do anything interesting, but takes about 800 milliseconds to execute. I run the kernel for a small work-size of 64, which means that it can run on a single CU on my GPU. GIven that a 460 has 14 CUs, it should, in theory, be possible to run a number in parallel. I start by allocating a number of command queues on the host. I then start a number of threads on the host, all doing the same thing:

- create their own kernel instance,

- get one (each one a different) of the preallocated command queues,

- enqueue the kernel,

- call "finish" to get timing on the host of the kernel execution,

- enqueue a read operation to get results back to the host

- call "finish" to get timing about the read back

This way I start a number, typically 4 or 8, kernels simultaneously, each on a different command queue. Looking at the time line with CodeXL confirms this.

What I'm seeing is that I usually get one or two kernels executing in parallel. Although I've occasionally seen three or four, but that seems to be the exception. So my first conclusion is that parallel execution is to some extent possible.

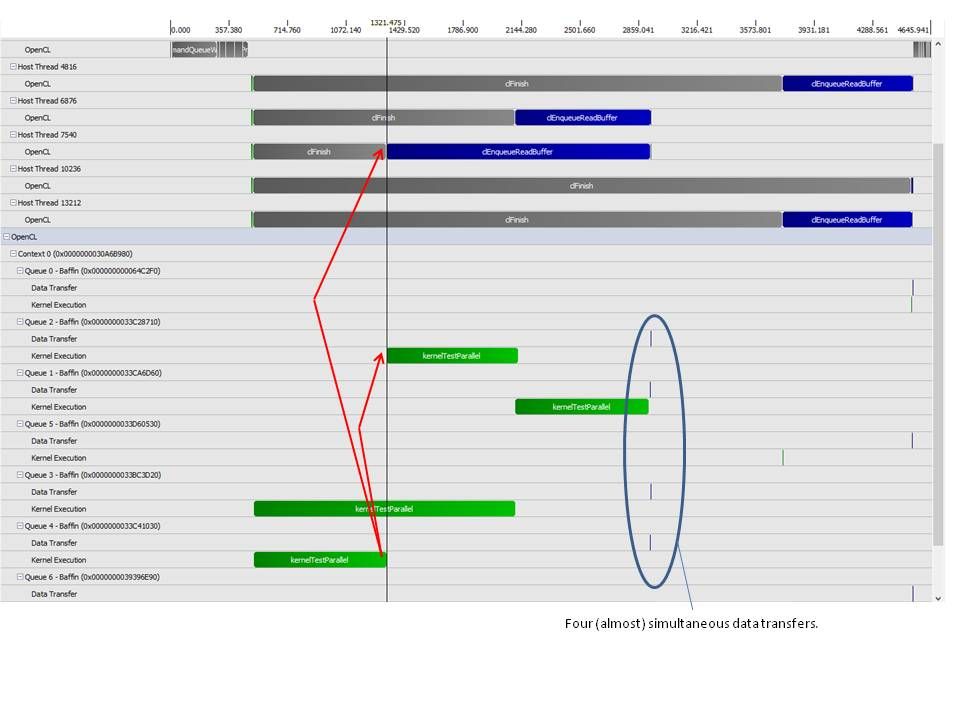

Looking at the CodeXL time line I see that my kernels take their normal 800 milliseconds to execute, or twice as long: 1600 milliseconds. However, according to CodeXL sometimes a kernel executes in almost 0 time, so there might be some error in CodeXL's timing. See attached picture.

I do have a few questions:

- I don't understand where the limitation of two simultaneous kernels comes from.

- I don't understand why kernels sometimes take almost exactly twice their normal time to execute.

- Looking at the attached picture, the enqueue operation for the read-back operation on the host for the kernel that finished first is delayed (the blue line top right). Apparently until something on other host threads or command queues happens, but I don't understand what it's waiting for. As far as I understand things the command queues should be independent of each other.

Any help would be appreciated.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sorry for this delayed reply.

I'm not aware of any such limitation. Also you mentioned that you saw more than two instances of running kernel though they happened occasionally. I think, you may do some experiments with work-size and kernels to check whether you always see similar observation or not.

Regards,

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Dipak,

Thanks for the response. It's good to hear that I'm not chasing an impossible goal.

I've played with work-sizes, but without any clear result. Obviously, if I increase the global work size to a large number (2000), the time goes up and I see little or no parallel execution. I've also reduced the global and local work-sizes to "1", so the absolute minimum, giving maximum possibility for parallel execution, but without effect. I still see 2 parallel threads at most. Three is very rare and it might be that CodeXL is giving an incorrect result, it does show some strange timings every now and then (see the example of a zero execution time in my first post).

My basic assumption underlying all my attempts is that command queues are independent of each other. Is that correct? If so, I see a few things in the attached picture that I cannot explain, but perhaps you can.

The finishing of the first kernel at the bottom apparently triggers two follow-up actions.

One is the blue "enqueueRead" on the host. That read could execute immediately, but is delayed until it's done together with three others in the group marked "four almost simultaneous data transfers". I don't understand that delay. Apparently the enqueue operation is delayed on the host, not on the device?

The second follow-up action appears to be the start of the execution on device queue 2. Apparently that device queue 2 was blocked somehow, but by what?

The kernel I'm executing is this:

"__kernel void kernelTestParallel("

+ "__global float *out){"

+ " int gid = get_global_id(0);"

+ " float sum = 0.0;"

+ " for (int i=0; i<10000000; i++) {"

+ " sum = sum + 0.1;"

+ " }"

+ " out[gid] = sum;"

+ "}"

regards, Hans.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

My basic assumption underlying all my attempts is that command queues are independent of each other. Is that correct? If so, I see a few things in the attached picture that I cannot explain, but perhaps you can.

From programmer's point of view, the OpenCL command queues can be thought as independent of each other. However, internally all the command queues are mapped to a fixed number of hardware queues (depends on the particular device architecture). Actually, these hardware queues act as GPU entry point and can enqueue tasks independently. So, an application with multiple command queues can run much faster on a device with two hardware queues than a device with only one hardware queue as more tasks can be enqueued to the GPU at a given time. For details, please refer the section "2.2.2.1 A note on hardware queues" in AMD Programming Guide.

Regards,

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Dipak,

Again, thanks for your efforts, highly appreciated!

I've studied the manuals but find it hard to translate them into code and correlate them with my trials. I'm using the (1) AMD_OpenCL_Programming_User_Guide2.pdf and (2) AMD_OpenCL_Programming_Optimization_Guide2.pdf, both are dated august 2015. I haven't found anything more recent.

Relevant sections appear to be 1.3.6 from (2), which states the benefits of using more queues. To quote:

"For Southern Islands and later, devices support at least two hardware compute queues. That allows an application to increase the throughput of small dispatches with two command queues for asynchronous submission and possibly concurrent execution. An OpenCL queue is assigned to a hardware queue on creation time. The hardware compute queues are selected according to the creation order within an OpenCL context. [...] Devices in the Sea Islands and Volcanic Islands families contain between four and eight ACEs, and are multi-threaded (thereby supporting more hardware queues), so they offer more performance."

And from (1), 2.2.2:

"The number of Asynchronous Compute Engines (ACEs) and CUs in an AMD GCN family GPU, and the way they are structured, vary with the GCN device family, as well as with the device designations within the family. The ACEs are responsible for managing the CUs and for scheduling and resource allocation of the compute tasks [..]. The ACEs operate independently; the greater the number of ACEs, the greater is the performance. Each ACE fetches commands from cache or memory, and creates task queues to be scheduled for execution on the CUs depending on their priority and on the availability of resources. Each ACE contains up to eight hardware queues [...]. Some of these queues are not available for use by OpenCL. Devices in the Southern Islands families typically have two ACEs. The ACE engines on the Southern Islands families are single-threaded, which means that they contain two hardware queues. Devices in the Sea Islands and Volcanic Islands families contain between four and eight ACEs, and are multi-threaded (thereby supporting more hardware queues) so they offer more performance. For example, the AMD Radeon™ R9 290X devices, in the VI family contain 8 ACEs and 44 CUs."

So I can understand that the number of hardware queues can be the limiting factor. But reading these quotes, I assumed that having multiple ACEs, say N, would imply having at least that number N of hardware queues and possibly more, e.g. 2xN, 4xN.

I've had a hard time finding out the exact specs of a 460. It's probably not an Island? Can you tell me the number of ACEs and hardware queues?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

RX 460 is based on Polaris 11 architecture (GCN 4th generation) and AFAIK, 4 ACEs are there. Not sure about the exact number of HW queues though.

Regarding the ACEs and HW queues, your understanding is correct. Also, you are right about the referred programming guides. Those are the latest version available. Sometimes I too feel that a more convenient way to find the technical details of these new devices would have been helpful for the users specially who are looking for optimization.

Regards,