Archives Discussions

- AMD Community

- Communities

- Developers

- Devgurus Archives

- Archives Discussions

- How to reduce map/unmap overhead on APUs?

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

How to reduce map/unmap overhead on APUs?

Hi,

I would like to make use of zero-copy in an APU environment for legacy code.

I intend to use the following code for data transfer:

// Create Buffers, somewhere else in the application

inBuf = clCreateBuffer(context, CL_MEM_READ_ONLY, bufSize, NULL, &err); //input

outBuf = clCreateBuffer(context, CL_MEM_WRITE_ONLY | CL_MEM_ALLOC_HOST_PTR, bufSize, NULL, &err); //output

// get direct pointer to buffer

inPtr = (unsigned char *) clEnqueueMapBuffer(commands, inBuf, CL_TRUE, CL_MAP_WRITE, 0, bufSize, 0, NULL, NULL, &err);

// do something with the data pointed to by inPtr

clEnqueueUnmapMemObject(commands, inBuf, inPtr, 0, NULL, NULL); //unMap inPtr

clEnqueueNDRangeKernel(...)

// access result

outPtr = (unsigned char *) clEnqueueMapBuffer(commands, outBuf, CL_TRUE, CL_MAP_READ, 0, bufSize, 0, NULL, NULL, &err);

clEnqueueUnmapMemObject(commands, outBuf, outPtr, 0, NULL, NULL); //unMap inPtr

Is this the correct way to perform data transfer?

Also for me low invocation / map overhead is more important than peak-throughput on the GPU: The OpenCL kernels will be executed as part of a legacy application, where there is no way to do double-buffered data transfers, so all the calls to map/unmap should be fast. Do the parameters chosen for buffer creation in the code above make sense to this scenario?

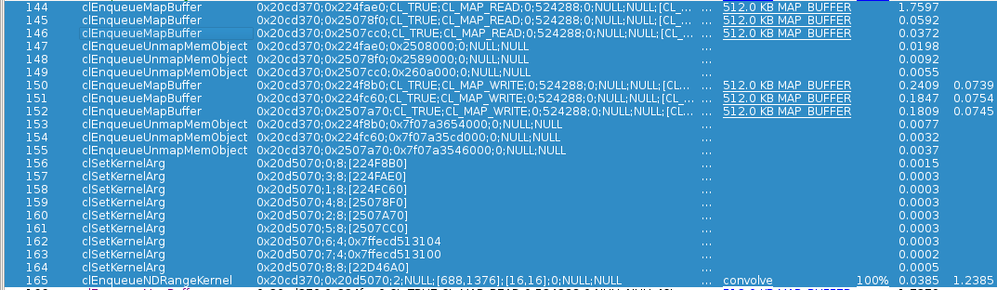

I've created a trace using CodeXL, and map/unmap with code very similar to the above snippit (only with 3 in/out buffers) has quite high overhead compared to the actual kernel invocation:

As you can see, while the kernel executes in ~1.5ms (the first buffer-map is slow, because it has to wait for kernel execution).

However mapping the input buffers is horrible slow (CL_MAP_WRITE), taking 0.18-0.25ms each.

Isn't there anything I can do to reduce this overhead?

The APU I used is an AMD_A10-7800 (Spectre) running Centos-7 with the latest Catalyst drivers.

Thank you in advance, Clemens

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The section "1.4 OpenCL Data Transfer Optimization" in AMD OpenCL Programming Optimization Guide describes varies ways of buffer creation and data transfer mechanisms to optimize the buffer transfer/access overhead applicable to some common application scenarios. I would suggest you to go through that section once.

There are many points to consider before choosing any particular mechanism which is best suitable to your own application. Many times its not so straight forward to do. Normally, better to perform few experiments before taking any final decision.

For example, just want to mention few points regarding the above code:

1) If you want to completely overwrite the contents of "inBuf", its better to use flag "CL_MAP_WRITE_INVALIDATE_REGION" instead of "CL_MAP_WRITE". Because, it can save one memory copy overhead.

2) If you want to fill "inBuf" from an existing host buffer, you may set the content during the buffer creation itself or even use the same memory as pinned host buffer.

3) As "outBuf" is created on the host-side, depending on situation the access time of "outBuf" from the kernel may be longer compare to any similar device-side buffer. So, you may actually observe slower kernel performance or even lower overall application performance.

Regards,

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The section "1.4 OpenCL Data Transfer Optimization" in AMD OpenCL Programming Optimization Guide describes varies ways of buffer creation and data transfer mechanisms to optimize the buffer transfer/access overhead applicable to some common application scenarios. I would suggest you to go through that section once.

There are many points to consider before choosing any particular mechanism which is best suitable to your own application. Many times its not so straight forward to do. Normally, better to perform few experiments before taking any final decision.

For example, just want to mention few points regarding the above code:

1) If you want to completely overwrite the contents of "inBuf", its better to use flag "CL_MAP_WRITE_INVALIDATE_REGION" instead of "CL_MAP_WRITE". Because, it can save one memory copy overhead.

2) If you want to fill "inBuf" from an existing host buffer, you may set the content during the buffer creation itself or even use the same memory as pinned host buffer.

3) As "outBuf" is created on the host-side, depending on situation the access time of "outBuf" from the kernel may be longer compare to any similar device-side buffer. So, you may actually observe slower kernel performance or even lower overall application performance.

Regards,

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi dipak,

Thanks for your suggestions, especially the CL_MAP_WRITE_INVALIDATE_REGION hint.

I took your recommendation and experimented a bit to find a good combination of flags - thanks to AMD's excellent CodeXL it is very easy to observe what is going on. For my use-case (low-bandwith kernel), host-side buffers in CPU cacheable area seem to work best (CL_MEMREAD_WRITE | CL_MEM_ALLOC_HOST_PTR).

I am also quite curious about map-free SVM buffers, although I understand the comfort they provide comes at the cost of throughput / bandwidth.

Thanks & best regards, Clemens

PS: Thanks again for CodeXL, especially for providing such an excellent linux version.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

In general, a proper data-transfer methodology over multiple independent devices is to provide "rotating buffers", that is, instead of having a single set of input/result buffers you have two (three? Four?) and start working on the n+1-th while you wait for mapping (nonblocking) the n-th so you don't force a full CPU-GPU sync.

In my experience this makes the mapping even higher latency but higher bandwidth. Most importantly, you waste no GPU time.

Games have been doing that for decades.

Good News! Drivers internally apply some of those tricks for you. CL_MAP_WRITE_INVALIDATE_REGION is one of the most long-lived hints about buffer management. Hopefully it will improve your situation.

I'm honestly surprised mapping takes so long on such a recent APU, I think I have seen similar performances on my AM3 system.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi maxdz8,

I used to do the same "rotating buffer" technique on digital-signal-processors, the downside is however, that it can be sometimes hard to integrate such an approach into existing software where the whole application-design relies on synchronous execution. That was the reason why GPUs didn't seem very attractive for our use-case, however due to recent advancements in APUs it looks like things have changed ![]()

Thanks & br, Clemens