Archives Discussions

Max memory allocation Restriction

Hi,

There is a maximum buffer allocation limit ("Max memory allocation" in clinfo), and it is different for different devices. Can anyone explain why we have this constraint?

Moreover, if my maximum memory allocation limit is 200540160 bytes, then I can allocate a 128 MB buffer of unsigned ints (33554432 * 4 bytes). If I initialize another buffer of the same size, will I have to transfer it to GPU memory once processing of the first buffer is complete?

Is there a better alternative? I have an input array of terabytes, and breaking it into 128 MB chunks and processing them one after the other is very slow.

Thanks

Hi,

I may not have the solution but I can share my experience with you.

Since you are working with terabytes of data, I'm assuming you're running a 64-bit application? I'm working on an algorithm that produces a very large solution space depending on the input, and by large I mean gigabytes of data. Initially, the max memory allocation limit on my HD 7750 was similar to your figure above, but now it is 536,870,912 bytes. I'm working with the latest Catalyst drivers and AMD APP SDK 2.9 on Windows 7.

Anyway, the interesting thing is that in the 64-bit version of my application, I am able to create a buffer of about 1.6 GB (512 * 256 * 3072 four-byte integers)! Somehow the runtime allows this buffer to reside in host memory rather than moving it to the GPU frame buffer, which would have crashed the application since I have definitely exceeded the limit. Besides, the HD 7750 has only a 1 GB frame buffer. I did not specify any special flags for the buffer: just the simple cl::Buffer constructor with the CL_MEM_READ_WRITE flag, and that was all. Maybe you could try this and see how far you can push it; I'm guessing it will depend on how much host RAM you have. I shared my findings in a post here before, http://devgurus.amd.com/message/1300871#1300871, but this behaviour seems new and is not documented.

Hi Wayne, thanks for your reply.

I installed the latest Catalyst driver and the 2.9 SDK on Windows 7, but when I tried to allocate a 256 MB buffer (2^26 * 4 bytes, more than CL_DEVICE_MAX_MEM_ALLOC_SIZE), buffer creation failed with the same CL_INVALID_BUFFER_SIZE error (-61). I am using a 64-bit platform and Visual Studio 2010.

So I guess I have to initialize multiple buffers. Any idea how I can use multiple buffers? Let's say I have 2 input and 2 output buffers of 128 MB each. Should I send both to my kernel simultaneously, or should I run 2 separate kernels? Neither is a good solution once my input grows to gigabytes, let alone terabytes, because one buffer has a maximum size of 128 MB: to cater for 1 GB alone, I need 4 input and 4 output buffers. Looking forward to suggestions. Thanks

Hi ifrah,

Just to clarify, apart from using a 64-bit OS, is your Visual Studio project also 64-bit? You can check and change this from the project properties to ensure that it is being compiled for a 64-bit platform.

Which GPU are you using, and what does clinfo say about the max memory allocation limit? As I mentioned earlier, I don't know why this works for me, so let's try to re-create a setup as similar to mine as possible.

Yes, the Visual Studio project is also 64-bit. I am using an A10-5800K APU, and according to clinfo, the max memory allocation limit is 200540160 bytes.

Okay, I see. I have the exact same APU on a couple of my machines, but I have not tried the code I was referring to on it. I will do that tomorrow morning when I get to the office and get back to you on whether the same behaviour applies to APUs. I will test with very simple, straightforward code, and if it succeeds I will share it with you so you can try it as well.

Hi ifrah,

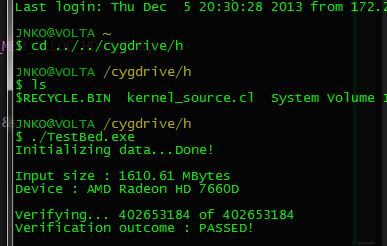

I have managed to test a simple code on the APU. I simply made 256 * 512 * 3072 work-items return their global IDs, which gives around 1.6 GB of data for our buffer to store. The screenshot shows the result from the machine with the A10-5800K APU. I have also attached the exact code and kernel that produced this result. If you want the full Visual Studio project, I can send that to you as well.

Now, the interesting thing is that this code will only run if we include the CL_MEM_ALLOC_HOST_PTR flag (line 103) when creating the buffer. In my original project, when I first encountered this behaviour, I didn't have to include this flag; the runtime handled the buffer allocation automatically. Anyway, as you might already know, the CL_MEM_ALLOC_HOST_PTR flag tells OpenCL to allocate the buffer from host-accessible memory, so this might be your solution.

Please let me know how everything goes. Hope this helps in some way!

Hey, thanks a lot, Wayne, for all the help you provided; it helped a lot!

So by using CL_MEM_ALLOC_HOST_PTR, the maximum buffer size is restricted to *less than* the CPU device's max memory allocation size, which is 1/4 of the total RAM available (~4 GB = ~16 GB / 4 on my system). That is indeed more than the GPU's limit of 200540160 bytes (~191 MB, 1/4 of its 802160640-byte global memory).

The max memory allocation size of my CPU device is 4146315264 bytes. I got a CL_INVALID_BUFFER_SIZE error when I tried to allocate 2 buffers of 4 GB each (using the CL_MEM_ALLOC_HOST_PTR flag) on the CPU device, and it worked fine when allocating smaller buffers (1 GB, 512 MB, etc.), which is the correct behaviour. However, when I allocated these 2 buffers (again with CL_MEM_ALLOC_HOST_PTR) on the integrated GPU with a size of 2 GB each, I got a null-address access error while mapping the host input array to the buffer. Any ideas why this happens?

Now the question of how to use MULTIPLE BUFFERS for input and output still stands.

Let's say I have 2 input and 2 output buffers of 128 MB each. Should I send both to my kernel simultaneously, or should I run 2 separate kernels? Neither is a good solution once my input grows to gigabytes, let alone terabytes, because one buffer has a maximum size of 128 MB: to cater for 1 GB alone, I need 4 input and 4 output buffers. Looking forward to suggestions. Thanks

Hi,

I'm glad I was able to offer a little help.

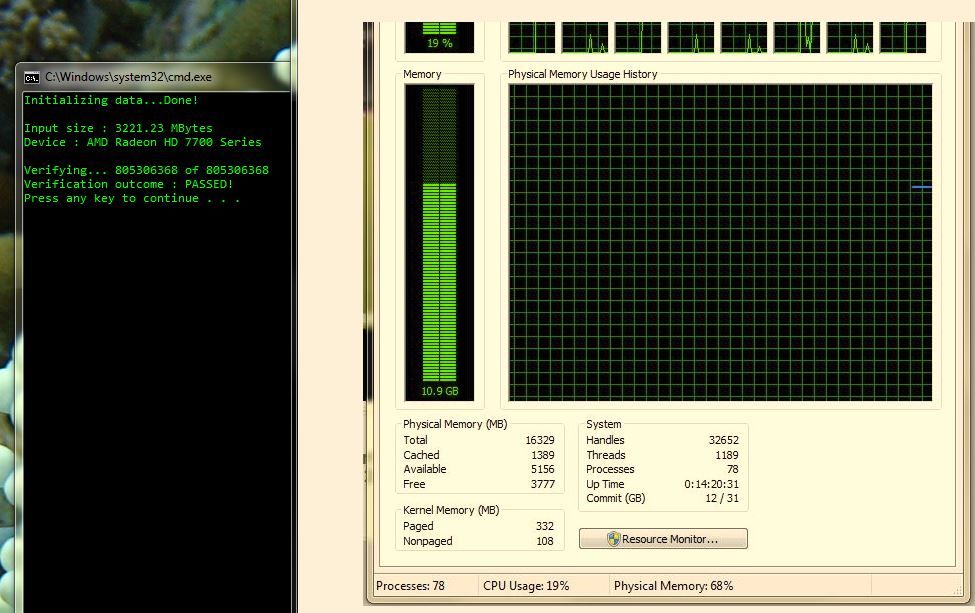

Firstly, I think (though I'm not entirely sure) that you are running out of address space. That is why you need to make sure your Visual Studio project targets a 64-bit application; this way you can allocate more memory. The screenshot shows the same sample program as before, but this time working with a buffer of around 3.2 GB; including the verification array on the host side, we are looking at over 6 GB, as you can see from Task Manager. I suggest you check your Visual Studio project settings under Properties -> Configuration Manager and make sure it really is a 64-bit project, because by default your projects are built as 32-bit.

Secondly, on the question of multiple buffers, you don't need to create so many buffers. Buffers are kind of like vehicles: just create one that is big enough to hold whatever data you need to pass to the kernel, and then keep re-using it by reading/copying data back and forth between host and device. Say, for instance, you need to move 1024 MB of data to the kernel in 4 chunks. Create a 256 MB buffer, copy the first 256 MB of data to the buffer, run your kernel, and then repeat for the next 256 MB until you are done. It's similar to caching data from global memory in local memory, except that this time you are working between host memory and GPU global memory.

Hope this makes some sense and offers some help! Please try the Visual Studio project settings first and let's see how that goes, because with a 64-bit application you should be able to allocate beyond 2 GB.

What is the access speed of this memory? Since these buffers reside in host memory, access to them is limited by PCIe bandwidth.

Thanks again!

I have verified that my Visual Studio project targets a 64-bit application. The example you posted does work with buffer sizes smaller than 4 GB. The difference between your example and mine is that I fill the input buffer with random values on the host side, not in the kernel.

The exact scenario in which I get the error is this: I allocate a 2 GB array on the host side, fill it with random numbers, then allocate a 2 GB buffer with the CL_MEM_READ_ONLY and CL_MEM_ALLOC_HOST_PTR flags, map it, memcpy the host array into it, and unmap it. During the memcpy, I get an access violation at location 0x000....0, and I am not sure why.

This thread on Big Buffers, http://devgurus.amd.com/thread/159516, may also be of some help. I often use large contiguous buffers on multiple 7970s that take up 2.5 GB or more of each card's 3.0 GB of physical memory without overflowing into the PC's memory, which can cause a significant slowdown. The easiest way is to set the environment variable GPU_MAX_ALLOC_PERCENT=90, although I use 95. This allows very large single-buffer allocations (>2 GB).

There are some caveats to make it work correctly, though these are not rules AFAIK.

1. Use the basic read/write flags; this results in physical GPU buffers.

2. Make sure the PC has a lot of free memory, perhaps a matching (equal?) amount, after the OS and your own app have allocated theirs.

3. It's sometimes useful to do a dummy kernel run once (i.e., with an early return statement) before other buffer operations; this results in a clean buffer layout ...

Have you tried using normal read/write instead of map/unmap to see whether the behaviour is the same? In the sample, I fill one vector on the host side and the GPU fills the other one, and then I compare their values. These are just dummy operations to use the huge buffers on both ends. Try read/write rather than map/unmap to see if it helps; otherwise, I'm afraid I won't be able to offer much help, unless it is possible and convenient for you to provide a test case so that I can try to re-create the problem at my end.

Yes, you are right: by using CL_MEM_ALLOC_HOST_PTR, performance degraded, even on the APU.

@wayne

Yes, I did normal read/write, and when writing to my input buffer I got a CL_MEM_OBJECT_ALLOCATION_FAILURE error. I will provide a test case soon.

I will try using contiguous memory the way you have (can you share some sample code, for convenience?). Unfortunately, the GPU devices I have (A10-5800K and HD 5870) do not have a lot of memory. Without setting the GPU_MAX_ALLOC_PERCENT parameter, clinfo gives the global memory size of the HD 7660 in the A10-5800K as 802160640 and the max memory allocation size as 200540160 (for the HD 5870, these values are 1073741824 and 536870912 respectively). By setting GPU_MAX_ALLOC_PERCENT = 95, I get 1016070144 as the global memory size and 254017536 as the max memory allocation, which is not a significant improvement (for the HD 5870, 1073741824 and 763048755 respectively).

GPU-Z reports the memory of the 7660 as 512 MB and of the HD 5870 as 1 GB. The global memory value reported by clinfo is fine for the 5870, but I don't understand how it can be 802 MB, and then increase to approximately 1 GB, for the GPU in the APU. Will any memory beyond 512 MB be allocated in host memory?

Clinfo shows the following for the 7970s before setting GPU_MAX_ALLOC_PERCENT = 95:

global memory = 2,147,483,648

max memory allocation = 536,870,912

After setting it to 95%:

global memory = 3,221,225,472

max memory allocation = 2,804,154,368

Not so different (relatively) from the HD 5870. Each GPU requires a certain amount of memory for the OpenCL system and for video processing, and that amount does not scale with GPU memory size.

I'm not so familiar with the APUs, but the specs for the A10-5800K (7660) just state shared memory, and the sharing may be adjustable. Look at this thread discussing the A10-5800K and using GPU_MAX_HEAP_THREAD: http://devgurus.amd.com/thread/160288. Good luck!

Hi ifrah,

That's great. I'll take a look and see if there's any way I can help.

Hi ifrah,

The sample you provided works fine on my system; however, I was able to re-create your problem by increasing the input size. After some experimenting, I observed that the CL_MEM_OBJECT_ALLOCATION_FAILURE occurs when multiple buffers of considerable size exist. The problem persists even when you create just one buffer at a time inside a loop. The moment I created just one big buffer (~3.2 GB), the program worked fine.

Since I'm not an expert in OpenCL yet, I really don't know the reason for this, but I will certainly try to find out more, unless some expert here chips in, because this has been a good learning exercise.

Based on your test case, I believe you are just adding 1 to every value in the input array and comparing the GPU and CPU results. I have re-created your code in C++, but I kept it straight to the point, without the profiling and extra loops; I hope you don't mind me using C++. I also added a kernel similar to yours ("addition"). So basically, the code achieves a similar goal to yours but uses only one huge buffer instead. I have attached the full Visual Studio project that I have been using for your problem, so take a look. It contains some useful comments, though it's very trivial code. The code does not really emphasize quality: e.g., I borrowed your method for generating random numbers, used naive floating-point verification, and hard-coded the loop unrolling, so please ignore these for now.

Sorry, although I am not able to solve your problem, I hope the logic I used in this trivial program will give you some ideas for tackling your original problem.