Texture reads return garbage for CLImage2D [with minimal example]

Hello everyone,

First, I should say that this is a cross-post from http://www.khronos.org/message_boards/viewtopic.php?f=56&t=5456

Since I didn't get an answer there after two weeks, I figured I could post here as well.

Basically, I'm having trouble with texture reads (read_imagef) when the texture size is 960x540 pixels, the channel order is CL_RGBA, and the channel data type is CL_UNORM_INT8 or CL_UNSIGNED_INT8. I get correct results for any other texture size. Also note that the problem only appears on ATi cards on Windows. Every other configuration I tried works (e.g. ATi on Linux, nVidia on Windows/Linux).

I made a small example that reproduces the problem on all ATi cards I could test with: FirePro V7900, HD6900, HD5870. You can get the source here: http://pastebin.com/ZAHMHu5B

Can anyone reproduce this problem?

Could this be a driver bug and if so, who should I contact?

Let me know if you need more specific information.

Kind regards,

Gregg

"on ATi cards on Windows",

win7?

Yes, the OS is Windows 7 64-bit. The problem exists for both 32-bit and 64-bit executables.

Are you using the latest driver? If not, how about upgrading to it?

Thank you for taking the time to run the example.

Actually, your output is wrong (as it is with all ATi / Win7 configurations). The expected output would be regular 256x256 boxes with gradients in them. It should look like "expectedOutput.png" in the original post. See the createCLTexture() function for details. At least the wrong output is consistent with the one I produced.

I used the latest drivers on the FirePro, but apparently not the latest APP SDK; that's why I got the 1.1 headers.

Still, it looks like this is a widespread problem that exists in the newest APP SDK as well.

Hi Gregg

Can you please let me know the version of OpenCL you are using? It should be available in the properties of "amdocl*.dll" in your system32 or syswow64 folders. I ran a test with RGBA16 at that size and I am not seeing any corruption on our staging build.

--

Saleel

AMD Stream SDK

Saleel,

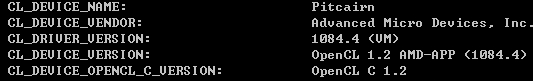

this is OpenCL 1.1 AMD-APP (938.2)

Philippe

Hello Saleel,

Yes, the problem only occurs with UNSIGNED_INT8 or UNORM_INT8, not with floating-point data.

I investigated further and was able to narrow down the possible sources of the problem.

First of all, to reproduce the problem it is sufficient to create an image2d (CL_MEM_COPY_HOST_PTR) and immediately read it back to get the wrong output. No kernel execution is needed in between.

Secondly, I encoded the x,y coordinates of each pixel into the RGBA8 values and dumped the read-back data into a text file. From the coordinates read back, it becomes clear that there is a problem with the image_row_pitch in clCreateImage2D: each row has an additional offset of 64 pixels (i.e. row y is offset by y*64). This is interesting because the problem happens only at half the Full HD resolution, where w=960, and 960 + 64 = 1024. So I assume the loaded texture data is internally padded to 1024 pixels per row, but the host data is copied directly without accounting for the padding.
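The coordinate-encoding test and the suspected pitch mismatch can be modelled on the host without any OpenCL calls. The following is only a sketch of the hypothesis described above, not the driver's actual behaviour: the 1024-pixel padded width is the poster's assumption, and the names `encode_xy`, `decode_xy` and `fetched_source_index` are made up for illustration.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

enum { WIDTH = 960, HEIGHT = 540, PADDED_WIDTH = 1024, BYTES_PER_PIXEL = 4 };

/* Encode pixel coordinates into one RGBA8 texel: R,G hold x; B,A hold y. */
void encode_xy(uint8_t *texel, unsigned x, unsigned y)
{
    texel[0] = (uint8_t)(x & 0xFF);
    texel[1] = (uint8_t)(x >> 8);
    texel[2] = (uint8_t)(y & 0xFF);
    texel[3] = (uint8_t)(y >> 8);
}

/* Inverse of encode_xy. */
void decode_xy(const uint8_t *texel, unsigned *x, unsigned *y)
{
    *x = texel[0] | ((unsigned)texel[1] << 8);
    *y = texel[2] | ((unsigned)texel[3] << 8);
}

/*
 * Hypothetical model of the suspected bug: tightly packed host rows
 * (WIDTH texels each) are copied in one piece into storage whose rows are
 * PADDED_WIDTH texels apart, then texel (x, y) is fetched using the padded
 * pitch. Returns the linear index (in texels) of the source texel that was
 * actually fetched, or -1 if allocation fails. Positions beyond the copied
 * data read as zero and therefore decode to index 0.
 */
long fetched_source_index(unsigned x, unsigned y)
{
    assert(x < WIDTH && y < HEIGHT);

    size_t host_bytes = (size_t)WIDTH * HEIGHT * BYTES_PER_PIXEL;
    size_t dev_bytes = (size_t)PADDED_WIDTH * HEIGHT * BYTES_PER_PIXEL;
    uint8_t *host = malloc(host_bytes);
    uint8_t *dev = calloc(dev_bytes, 1);
    if (!host || !dev) {
        free(host);
        free(dev);
        return -1;
    }

    for (unsigned row = 0; row < HEIGHT; ++row)
        for (unsigned col = 0; col < WIDTH; ++col)
            encode_xy(host + ((size_t)row * WIDTH + col) * BYTES_PER_PIXEL,
                      col, row);

    /* The suspected mistake: one flat copy that ignores the row padding. */
    memcpy(dev, host, host_bytes);

    unsigned sx, sy;
    decode_xy(dev + ((size_t)y * PADDED_WIDTH + x) * BYTES_PER_PIXEL, &sx, &sy);

    free(host);
    free(dev);
    return (long)sy * WIDTH + sx;
}
```

In this model, the texel fetched for (x, y) in the upper rows lies y*64 texels past the one that was expected, which matches the per-row offset seen in the dumped data.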

However, copying data directly only makes sense if the input width is a multiple of 1024, which is not the case. The product 960*540 = 518400 is divisible by 256 (though not by 1024), so maybe the driver checks the alignment of W*H instead of the width only.
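The divisibility figures are easy to check. The helpers below are a small illustrative sketch (the names `is_multiple_of` and `largest_pow2_divisor` are made up here). They say nothing about what the driver actually tests, only which alignments the numbers satisfy.

```c
#include <assert.h>

/* Returns 1 if value is a multiple of alignment, 0 otherwise. */
int is_multiple_of(unsigned long value, unsigned long alignment)
{
    assert(alignment != 0);
    return value % alignment == 0;
}

/* Largest power of two that divides value (value must be non-zero). */
unsigned long largest_pow2_divisor(unsigned long value)
{
    assert(value != 0);
    /* Isolates the lowest set bit of value. */
    return value & (~value + 1UL);
}
```

With these, the width 960 has 64 as its largest power-of-two divisor, 960 + 64 = 1024 is a multiple of 1024, and 960*540 = 518400 is a multiple of 256 but not of 1024.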

---

Tests were done on an ATI HD5870

Catalyst 13.1

AMD APP SDK 2.8

Edit: Attached Sample/Results

HTH

Gregg

Hi greg,

Thanks for reporting the issue.

I will try to reproduce at my end soon.

As of now, the pastebin seems to be the easiest way to share your source/executable.

You can attach files by using the "Advanced editor". As I am typing, the "use advanced editor" link is right above this text (at the top right of the basic editor).

Also, if you try to "edit" your posts, the forum opens it up in the advanced editor by default.

Hi greg,

I was able to reproduce the problem on an ATI HD5870 with Catalyst 13.1 and AMD APP SDK 2.8. I will forward it to the AMD team.

I also tried it on an HD7xxx device, and the code produced the expected results there. You might wanna check that.

Thanks for reporting the issue.

Hello Himanshu,

do you have any news from the AMD team?

Will this be fixed in an upcoming driver release? I need it to be fixed as I cannot find a halfway sane workaround.

Regards,

Gregg

The problem was fixed. However I'm not sure it will be available in the next public release. I'll see if I can promote a fix into some earlier release.

Hi, I ran into the same problem on an HD7850. Could you tell me how to fix the issue?

Can you try with the latest beta driver releases?

Btw, when I run "oclVolumeRender" from the Nvidia GPU SDK samples on the HD7850, it seems linear interpolation doesn't work. Maybe some OpenCL attributes are not properly supported by AMD graphics cards?

hxmingzzz wrote:

Btw, when I run "oclVolumeRender" from the Nvidia GPU SDK samples on the HD7850, it seems linear interpolation doesn't work. Maybe some OpenCL attributes are not properly supported by AMD graphics cards?

I believe 1084.4 is the Catalyst 13.1 driver. You could check once with the 13.3 beta.

Can you give some detail as to what attribute is not supported on AMD GPU, that is supported on NVIDIA GPU? I will try checking this sample soon.

Thanks Himanshu, I have found the reason why I got this error. When I wrote the image I set the blocking flag (blocking_write) to CL_FALSE, but in my loop I changed the image data in ptr. The NV device does not seem sensitive to this flag: I get the right result whether I set it to CL_TRUE or CL_FALSE. On the AMD card, however, the image data was not written to the device correctly when I set the flag to CL_FALSE.
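For context: with a non-blocking clEnqueueWriteImage (blocking_write set to CL_FALSE), the OpenCL specification only allows the host memory behind ptr to be reused once the write command has completed, for example after waiting on the returned event or calling clFinish. The toy model below illustrates one way a host buffer reused too early can go wrong. It is not OpenCL code: `toy_queue`, `toy_enqueue_write`, `toy_finish` and `write_then_modify` are made-up names, and real drivers may defer or stage the copy differently.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

enum { IMAGE_BYTES = 4 };

/* Toy stand-in for a command queue holding at most one pending write. */
struct toy_queue {
    const unsigned char *pending_src; /* host pointer captured at enqueue time */
    unsigned char *pending_dst;       /* "device" destination */
};

/*
 * Toy analogue of an image write. With blocking != 0 the data is copied
 * before the call returns; otherwise only the pointers are recorded and
 * the copy happens later in toy_finish().
 */
void toy_enqueue_write(struct toy_queue *q, unsigned char *dst,
                       const unsigned char *src, int blocking)
{
    assert(q != NULL && dst != NULL && src != NULL);
    if (blocking) {
        memcpy(dst, src, IMAGE_BYTES);
    } else {
        q->pending_src = src;
        q->pending_dst = dst;
    }
}

/* Executes the pending write, if any (analogue of waiting for completion). */
void toy_finish(struct toy_queue *q)
{
    assert(q != NULL);
    if (q->pending_src != NULL) {
        memcpy(q->pending_dst, q->pending_src, IMAGE_BYTES);
        q->pending_src = NULL;
        q->pending_dst = NULL;
    }
}

/*
 * Fills the host buffer with 1, enqueues the write, overwrites the host
 * buffer with 2 (as the next loop iteration would), then finishes the queue.
 * Returns the first byte that ended up in the "device" image.
 */
unsigned char write_then_modify(int blocking)
{
    struct toy_queue q = { NULL, NULL };
    unsigned char host[IMAGE_BYTES];
    unsigned char device[IMAGE_BYTES] = { 0 };

    memset(host, 1, IMAGE_BYTES);
    toy_enqueue_write(&q, device, host, blocking);
    memset(host, 2, IMAGE_BYTES); /* host buffer reused too early */
    toy_finish(&q);
    return device[0];
}
```

In this model the blocking variant stores the intended data (1), while the non-blocking variant ends up with whatever the host buffer held when the copy finally ran (2). An implementation that happens to copy the data at enqueue time would hide the mistake, which may be why the same code appeared to work on another vendor's device.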

Good to know that. Great that you fixed the issue yourself.

Thanks for your attention so many times.