2 kernels together on C-60 or incorrect results of APP Profiler?

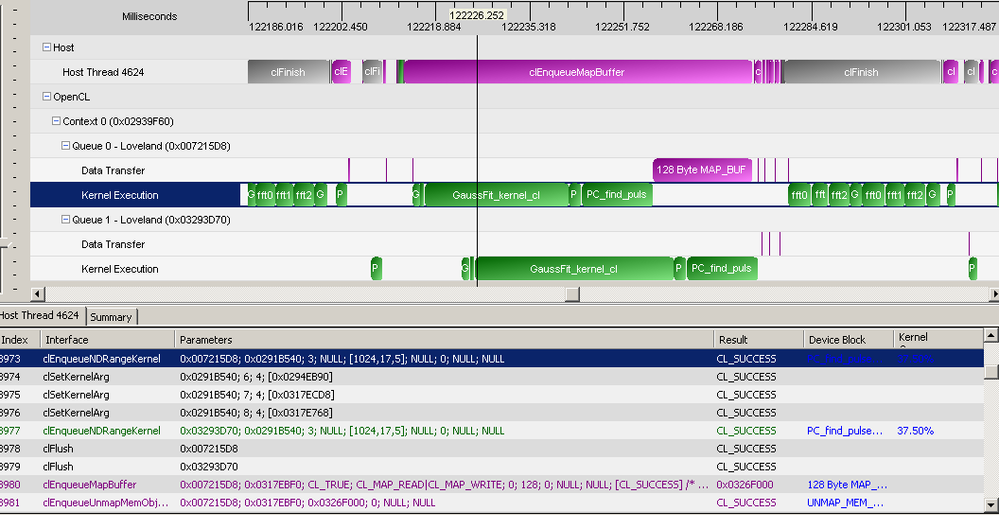

I'm trying to achieve better GPU utilization by using multiple command queues.

Checking the results with APP Profiler 2.5, I saw a very interesting picture.

Does it really mean that the C-60 APU is able to run 2 kernels simultaneously, or is it just an error in APP Profiler timing?

APP Profiler 2.5 was used as a VS plugin, with Catalyst 11.12 (mobile version), on a C-60 based Acer Aspire One netbook.

BTW, the build that uses 2 queues instead of 1 is slower. Maybe that's because of some differences unrelated to the OpenCL runtime; I still need to explore this more.

And please, update your SSL certificate. It's quite annoying to add an exception in Mozilla each time I want to log in to these forums from a different PC...

And another big question about this picture.

Look at the first MapBuffer. It takes all the time the secondary queue needs to complete its kernel sequence.

I see this consistently. The next MapBuffer is 40 ns long, just as it should be when mapping a host-memory based buffer, but the first one is too long.

I would expect it (because it's a blocking call) to last long enough for the kernel sequence in the first queue to complete (because it's a sync point for that queue), but it lasts much longer, until the secondary queue finishes its own kernel sequence.

Why is that? I expected a speedup from letting the secondary queue execute kernels on the device while the first one is blocked on the synchronous memory mapping... but apparently that blocking map blocks both queues. Why? What am I missing here?

The GPU timestamps are incorrect. Concurrent kernel execution is not supported. I noticed you are using a relatively old driver; can you try the latest one? The profiler uses GPU timestamps returned by the runtime/driver.

You are trying to achieve double buffering, and there are 2 ways to do it. The SDK sample TransferOverlap shows one solution using zero copy. Another way is to call the non-blocking data transfer API, which utilizes the GPU DMA engine. GPU timestamps are not available for either scenario. For zero copy, there is no transfer going on. For DMA transfer, timestamps are not available due to a hardware limitation; we are trying to solve this issue in a future version of the profiler.
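Since the profiler only displays the timestamps that the runtime/driver returns, one way to see where an apparent overlap comes from is to read the raw values yourself and compare them. The following is a minimal sketch, not part of any AMD tool: it assumes the start and end times of two kernels have already been fetched with clGetEventProfilingInfo (CL_PROFILING_COMMAND_START and CL_PROFILING_COMMAND_END, both in nanoseconds), and the names cmd_interval, overlap_ns and intervals_overlap are made up for illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Start/end of one command on the device timeline, in nanoseconds.
   Assumption: in a real program these values would be read with
   clGetEventProfilingInfo for CL_PROFILING_COMMAND_START and
   CL_PROFILING_COMMAND_END. */
typedef struct {
    uint64_t start_ns;
    uint64_t end_ns;
} cmd_interval;

/* Length of the time span shared by both intervals (0 if there is none). */
static uint64_t overlap_ns(cmd_interval a, cmd_interval b)
{
    assert(a.start_ns <= a.end_ns && b.start_ns <= b.end_ns);
    uint64_t lo = a.start_ns > b.start_ns ? a.start_ns : b.start_ns;
    uint64_t hi = a.end_ns < b.end_ns ? a.end_ns : b.end_ns;
    return hi > lo ? hi - lo : 0;
}

/* True if the two commands are reported as running at the same time. */
static bool intervals_overlap(cmd_interval a, cmd_interval b)
{
    return overlap_ns(a, b) > 0;
}
```

If the raw values from two queues overlap on a device that does not support concurrent kernel execution, the timestamps themselves are suspect, not just the way the timeline is drawn.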

Thanks for the answer, I will try to update the driver. Usually I'm afraid of doing so because of the very high probability of a performance drop or some newly introduced bug...

I do use zero copy for flag transfers (those few-byte map/unmap operations in the posted picture). But these transfers have to be shown somewhere on the timeline, right? Your answer implies that they will be shown in the wrong location most of the time, simply because there is no data about their true location. OK, that's understandable, but maybe it would be better to mark such unreliable data in another color, let's say light red, to inform users that it is unreliable? The power of this tool is in visualizing the real timeline, and unreliable data that is treated as reliable can kill the profiler's usability by leading to wrong conclusions...

BTW, did I understand correctly that any blocking memory transfer, regardless of size, will not use DMA and can't be overlapped with kernel execution even if multiple queues are used?

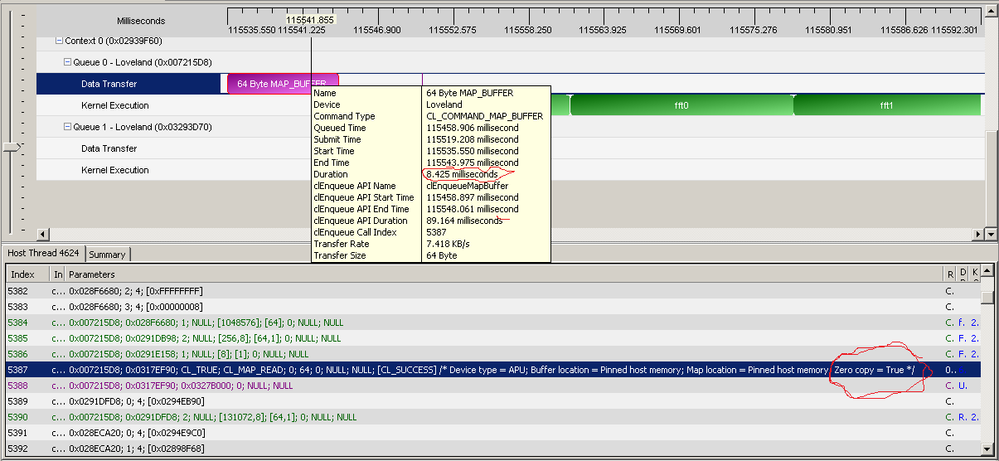

Zero copy timestamps should be correct: the end time is equal or close to the start time. If you see a big map/unmap block, it's not zero copy. The latest version of APP Profiler reports whether a map API call is zero copy or not in the API trace view.

For DMA transfer timestamps: if profiling is enabled, async DMA transfer is disabled automatically, so the timestamps are correct, but you lose the compute/transfer overlap benefit. We hope to resolve this in a future version of APP Profiler. For now, to find out the performance gain from using async DMA transfer, you can use a CPU timer to measure the duration of a group of CL commands:

    start = getTime();
    dataTransfer();
    launchKernel();
    ... // more enqueue APIs...
    clFinish();
    end = getTime();
    duration = end - start;
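The pseudocode above can be turned into a small reusable helper. What follows is only a sketch under stated assumptions: getTime() is replaced by the C11 function timespec_get, and the group of CL commands is passed in as a callback that is expected to enqueue its work and then block (for example with clFinish on every queue it used). The names work_fn, wall_seconds, time_work and count_call are hypothetical, and count_call is just a stand-in workload so the helper can be tried without a GPU.

```c
#include <assert.h>
#include <stddef.h>
#include <time.h>

/* Hypothetical callback type: the function should enqueue a group of
   CL commands and return only after they have finished, e.g. by calling
   clFinish on each command queue it used. */
typedef void (*work_fn)(void *user_data);

/* Wall-clock time in seconds, taken from C11 timespec_get. */
static double wall_seconds(void)
{
    struct timespec ts;
    timespec_get(&ts, TIME_UTC);
    return (double)ts.tv_sec + (double)ts.tv_nsec * 1e-9;
}

/* Runs fn `repeats` times and returns the average duration of one run. */
static double time_work(work_fn fn, void *user_data, int repeats)
{
    assert(fn != NULL && repeats > 0);
    double start = wall_seconds();
    for (int i = 0; i < repeats; ++i)
        fn(user_data);
    return (wall_seconds() - start) / repeats;
}

/* Stand-in workload: only counts how often it was called. A real
   callback would contain the data transfer, kernel launches and clFinish. */
static void count_call(void *user_data)
{
    ++*(int *)user_data;
}
```

Timing the same callback once with a single queue and once with two queues, with the profiler switched off, gives the comparison the profiler cannot show while it disables async DMA. Averaging over several repeats helps because a single run on a small APU can be dominated by timer resolution and launch overhead.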

Thanks for the info!

That is, one should never see kernel execution and data transfer overlap on the same GPU/context while using the current version of APP Profiler. Good to know.

Regarding zero copy and the very long mapping: the GPU buffer was created with these flags:

CL_MEM_ALLOC_HOST_PTR | CL_MEM_WRITE_ONLY

I expect it to be allocated in pinned host memory, the kernel to write to that buffer directly over the PCIe bus (without a GPU-side buffer being created), and the mapping operation to be zero copy because the buffer is already in host memory. The buffer is smaller than 1 KB (128 bytes), so there should be no shortage of pinned memory for such allocations, right?

But as the picture in the first post shows, such a mapping takes a very long time (not the API mapping call, but the mapping in the device queue!). That is, it's not a zero-copy mapping... What can prevent such an operation from being zero copy? Is this combination of flags unsupported?

This buffer is used to signal the host to redo some work on the CPU, so the GPU kernel writes to it (sets a flag) only in very rare cases, but the host checks the state of this buffer after each kernel call and zeroes any flags that were set. To speed this up I'm trying to use pinned, cacheable host memory, but your answer shows that it's not a zero-copy buffer in the current implementation... What did I do wrong?
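For readers following along, the host side of this check-and-clear pattern can be sketched as below. This is an illustration only: it assumes one byte per flag (the actual layout of the 128-byte buffer is not given in the post), the name collect_and_clear_flags is made up, and the pointer is expected to be the one returned by a blocking clEnqueueMapBuffer call with CL_MAP_READ | CL_MAP_WRITE, to be unmapped again afterwards.

```c
#include <assert.h>
#include <stddef.h>

/* Scans `count` one-byte flags, zeroes every flag that is set and
   returns how many were set. Assumption: `flags` points to the mapped
   flag buffer (the pointer returned by clEnqueueMapBuffer). */
static size_t collect_and_clear_flags(volatile unsigned char *flags,
                                      size_t count)
{
    assert(flags != NULL);
    size_t set = 0;
    for (size_t i = 0; i < count; ++i) {
        if (flags[i] != 0) {
            ++set;        /* the kernel asked the host to redo this item */
            flags[i] = 0; /* reset the flag for the next kernel call */
        }
    }
    return set;
}
```

With a true zero-copy mapping this loop reads and writes the same pinned host memory the kernel wrote to, so the cost of the check should be dominated by the map/unmap calls rather than by any copy.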

Look at the picture: there is a zero-copy mapping (APP Profiler says it's a pinned buffer and the zero copy path) that takes a few ms to complete.

Apparently APP Profiler gets the timings wrong again.

What can be done in this situation? I can't find any new drivers for this C-60 based netbook.

Perhaps this incompatibility should be stated in the APP Profiler release notes, to save time for other users?...

What is a known working configuration for APP Profiler 2.5 with the C-60 APU? Is anyone using it on such a device? Or at least on another APU?...

EDIT: and another issue: APP Profiler says the buffer was mapped for reading, but the actual flag combination was CL_MAP_READ|CL_MAP_WRITE, i.e., mapped for reading _and_ writing.

Can you also try the TransferOverlap example from the APP SDK? All map operations should be zero copy.

Regarding the driver update: it looks like AMD doesn't offer drivers for my netbook. Choosing the C-50 APU (the site has no C-60 option, btw; AMD doesn't produce the C-60, yeah???) and Win7 x64 leads to HydraVision software, a codec pack and a driver verification tool that is 1.1 MB in size. Sorry, I'll never believe you created a driver of 1.1 MB. That is, there is no driver to download for the C-60 on the AMD site.

And Acer's driver package for this netbook dates from 2011...

Regarding the initial topic of this thread: I updated to the latest Catalyst 12.8, but APP Profiler 2.5 still shows 2 kernels executing together.

Please fix this in a new APP Profiler release.