Is GPU_ASYNC_MEM_COPY=2 still available in SDK 2.7?

Is GPU_ASYNC_MEM_COPY=2 still available in 2.7?

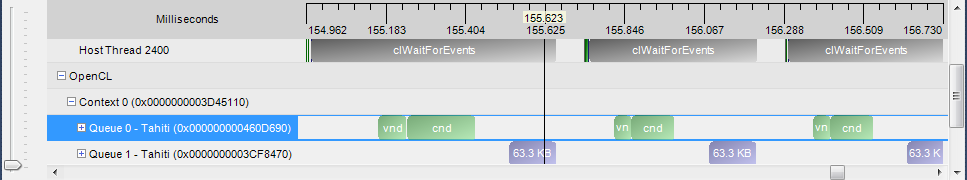

I enqueue a kernel and a buffer read at the same time on different queues, but they execute one after another. I expected them to run in parallel.

The code looks like this:

// queue: Q0, Q1

// kernel: vnd, cnd

for(;;) {

clEnqueueNDRangeKernel(Q0, vnd, ..., &event0); // vnd produces event0

clEnqueueNDRangeKernel(Q0, cnd, ...); // cnd runs after vnd in the same queue

clEnqueueReadBuffer(Q1, ..., 1, &event0, NULL); // the buffer read waits for vnd (event0) to complete

// wait for the buffer read to complete

// ...

}

I expected cnd and the buffer read to execute together on the GPU, giving this execution sequence:

... -> vnd -> (cnd/buffer-reading) -> vnd -> ...

but in fact they are done serially.
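
To make the expected schedule concrete, here is a minimal sketch in C of the per-iteration arithmetic. The durations are hypothetical placeholders, not measurements from the HD 7970, and the names LoopCost, serial_time, and overlapped_time are made up for illustration. It assumes the read depends only on vnd (event0), so with overlap one iteration costs vnd plus the longer of cnd and the read.

```c
/* Hypothetical per-iteration durations in microseconds (not measured). */
typedef struct {
    double vnd_us;   /* kernel vnd */
    double cnd_us;   /* kernel cnd */
    double read_us;  /* buffer read issued on the second queue */
} LoopCost;

static double max_d(double a, double b) { return a > b ? a : b; }

/* Observed behaviour: vnd, cnd, and the read run back to back. */
double serial_time(LoopCost c)
{
    return c.vnd_us + c.cnd_us + c.read_us;
}

/* Expected behaviour: cnd and the read both start once vnd has finished
 * and run concurrently, so the iteration ends when the slower one ends. */
double overlapped_time(LoopCost c)
{
    return c.vnd_us + max_d(c.cnd_us, c.read_us);
}
```

With vnd = 100 us, cnd = 80 us, and read = 50 us, serial_time gives 230 us and overlapped_time gives 180 us per iteration.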

my environment:

AMD APP SDK 2.7 + Catalyst 12.6 Beta (8.98-120522a-139735E-ATI)

HD 7970

I think it may improve performance, but it cannot achieve true parallel execution.

The buffer read latency is very high!

I use a regular device buffer and call clEnqueueReadBuffer to retrieve data from the GPU.

How can I improve this?

Would mapping it into host memory help, especially when the buffer is small (typically a few bytes)?

GPU_ASYNC_MEM_COPY is automatically disabled when profiling is enabled.

Hi Lihan,

By 'profiling is enabled', do you mean running the application under APP Profiler / sprofile, or is it also disabled if I create a queue with CL_QUEUE_PROFILING_ENABLE?

Thank you

If you create a queue with CL_QUEUE_PROFILING_ENABLE, the DMA engine is not used, i.e. there is no compute/transfer overlap.

Hello Lihan,

Can you provide an example of overlapping data transfer and GPU kernel computation with GPU_ASYNC_MEM_COPY=2 set?

I'm trying to find a way to overlap Read/Write Rect Buffer with GPU computation on a 7970 (Tahiti) card under Linux.

Thanks,

Shawn

Take a look at the TransferOverlap sample app. It achieves compute/transfer overlap without using the DMA engine.

Lihan,

I already checked the TransferOverlap sample. It overlaps memset()/memcpy() with the GPU kernel. My concerns are:

(1) If I want a rectangular transfer (such as a submatrix of a larger matrix), I need to call memcpy() column by column, which is inefficient.

(2) The sample does not use 2 queues or GPU_ASYNC_MEM_COPY. If the 2-queue method works for clEnqueueReadBufferRect / clEnqueueWriteBufferRect, I can take an approach similar to using 2 streams in CUDA.

Thanks,

Shawn
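
Concern (1) can be sketched in plain C. This is a minimal illustration, assuming a column-major float matrix in which each column is contiguous. The function copy_submatrix_colmajor and its parameters are hypothetical names, and ordinary host arrays stand in for the mapped zero-copy pointer that a TransferOverlap-style approach would write through. It shows why the copy needs one memcpy() call per column.

```c
#include <stddef.h>
#include <string.h>

/* Copy a rows x cols submatrix out of a column-major float matrix.
 * src_ld and dst_ld are leading dimensions: the number of elements
 * between the starts of two consecutive columns.
 * (row0, col0) is the top-left corner of the submatrix in src. */
void copy_submatrix_colmajor(float *dst, size_t dst_ld,
                             const float *src, size_t src_ld,
                             size_t row0, size_t col0,
                             size_t rows, size_t cols)
{
    for (size_t j = 0; j < cols; ++j) {
        /* Only the elements within one column are contiguous, so each
         * column of the submatrix needs its own memcpy() call. */
        const float *src_col = src + (col0 + j) * src_ld + row0;
        float *dst_col = dst + j * dst_ld;
        memcpy(dst_col, src_col, rows * sizeof(float));
    }
}
```

Each call moves only rows * sizeof(float) bytes, so a short, wide submatrix pays the per-call overhead many times for very little data.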

With zero copy, memset()/memcpy() writes from the host (CPU) directly to GPU memory. It's the same idea as overlapping clEnqueueRead/Write and clEnqueueNDRangeKernel using 2 queues.

Hi Lihan. Are there any updates relating to DMA transfer/compute overlap in SDK 2.8? Thank you.

Right, as you said, this sample does NOT use the DMA engine; it uses the CPU instead. In my case the CPU already has enough work to do (in other threads), so spending CPU time on the transfer will reduce overall performance. If, on the other hand, the transfer could be done by the DMA engine, both the GPU and the CPU could be doing something else.

Thanks Lihan. I disabled profiling in my code and did not notice any improvement; in fact, the code now runs slower. In the absence of documentation, working examples, and monitoring tools (i.e. profilers), it is extremely difficult to diagnose what's going on inside and why it is not working.

Is this feature going to be included in the official SDK release any time soon?

Lihan Bin wrote:

If you create a queue with CL_QUEUE_PROFILING_ENABLE, the DMA engine is not used, i.e. there is no compute/transfer overlap.

Is there any chance that this limitation could be lifted in future versions of the driver? Being able to profile with all features enabled (including transfer overlap) is essential when optimizing some kinds of workloads, particularly multi-GPU usage.

Yes, the Profiler team is working with the OpenCL runtime team to provide better profiling results when the DMA engine is on.

Hmm, so I can't see the transfer overlap happening in the profiler output. That is sad.

Yes, it's sad for me too. I cannot use CL_QUEUE_PROFILING_ENABLE to see the feature.