Problem with enabling HDR on 7900 XT with QN90A tv

Hello everybody,

It is my first time buying an AMD GPU, and ever since I got it I cannot enable HDR.

Basically, when I had an Nvidia GPU I used game mode on PC and HDR was enabled automatically along with G-Sync.

But now I cannot enable HDR in any way whatsoever. In game mode the TV says FreeSync Premium Pro, but the HDR option says not enabled.

I tried disabling FreeSync in the GPU settings and turning on HDR; it still doesn't work.

I cannot get my GPU and TV to recognise HDR in any way whatsoever. When I go to play a game, everything is so dim and details are missing; it's ridiculous.

Any help?

This Windows Tech site gives you a guide on how to fix HDR issues with both Monitors and TV: https://www.windowscentral.com/how-fix-common-problems-hdr-displays-windows-10

This YouTube video shows how to set up a Samsung Smart TV for HDR: https://www.youtube.com/watch?v=wkfb5yc7fAU

This LinusTech Forum User is having a similar issue on a similar Samsung TV Model: https://linustechtips.com/topic/1437829-cant-get-hdr-enabled-in-windows-on-my-new-samsung-4k-tv/

EDIT: This AMD Forum user is also having VRR and HDR issues with the same Samsung TV model as yours: https://community.amd.com/t5/drivers-software/samsung-qn90a-vrr-hdr-6900xt/m-p/476681#M145055

EDIT: In the last link above, the user found that lowering the TV's refresh rate let him get HDR to work on his TV. It turned out his GPU (an Nvidia GTX 1080) didn't support HDR above a certain refresh rate.

Hey! First of all, I would like to thank you for helping me.

I've read and checked the post noted on the AMD forums.

My TV supports FreeSync Premium Pro; when I check the input signal on my TV while gaming, it says FreeSync Premium Pro.

In Windows I have HDR enabled.

While I'm on the desktop my Windows HDR is not enabled. Right now I only have 2 games that I was able to try and check results with.

In COD MW 2022 there is basically no way to enable HDR, sadly. I've tried both 10 and 12 bpc colour depth, and also tried changing my in-game resolution because some people said this helps, but it doesn't work.

In AC Valhalla I've managed to get HDR working, but only with 10 bpc colour depth, using the in-game HDR option that says FreeSync Premium Pro. When I check the signal with my remote it says "FreeSync Premium Pro", "HDR", "UHD", "3840x2160".

I also checked dxdiag as described in the forum post mentioned above, and everything checks out.

I am using an HDMI 2.1 cable (48 Gbps).

I would also like to say that I've played games on this TV with my previous GPU (Nvidia) and also on my gaming laptop a few times; both supported HDR without a single problem.

Again, thanks for the help! I really appreciate it.

I am not a gamer so some other Users that are Gamers might be able to help you set up your TV set to run HDR Games correctly.

If you do find a fix please post back. Thanks.

Thanks for the help.

I also tried putting the Nvidia GPU back in, and HDR works perfectly.

Well that does show the issue is either with your AMD GPU card or AMD Graphics driver.

Hopefully there will be a driver update sooner or later. I have 13 days left to get a refund without giving a reason; if it isn't fixed by then, I will just return it.

Thank you again for your help!

Thanks for the post.

We've become aware of this issue regarding HDR not displaying correctly on this TV when paired with AMD GPUs and are currently investigating.

When I have more information to share, I'll update this discussion.

Thanks for this Matt, I was in the process of returning my 7900XTX for an nvidia card because of this issue. If you are working on a fix then perhaps I should hold off. It is worth noting that the TV detects the HDR signal correctly if you disable freesync in the driver panel. Turn freesync on and it displays premium pro in the TV menu but doesn't recognise the HDR signal.

That's weird.

Sadly, I couldn't get HDR to work at all with Game Mode ON. Even after trying to fix it with CRU (editing FreeSync) and turning FreeSync off, HDR is not detected in my case.

The only way I've gotten HDR to work is to switch Game Mode ON and then OFF. HDR then works, but sadly FreeSync and all the other features are lost and it is unplayable like that.

Dear Matt.

First of all I would like to thank you for helping us and looking into this topic, and sorry for such a slow reply.

I have tried some other troubleshooting steps, which I described in my newest post a few weeks ago, where I went through everything I did in as much detail as possible.

Link to the post mentioned:

https://community.amd.com/t5/drivers-software/qn90a-7900-xt-hdr-not-working/m-p/585955#M169524

The last link in that post (Samsung community forum) is my thread. There was one person with the exact same TV but a different GPU. While he could use CRU to get HDR to work (he posted a full video in a comment), I did the same thing (and posted a video with the exact same steps), but sadly it did not work for me.

Thank you again for all the help, it is much appreciated.

Hi Matt, just wanted to let you know I am experiencing the same issue with RX 6700 XT and Samsung Q70A. So, it seems that AMD/Samsung TV combo has issues.

And that's something to be fixed since the TV is Freesync Premium Pro ready, and that's an AMD technology, the best they have to compete with GSYNC, and it is obviously not working as expected.

Thanks, I'll pass that information along about the other Samsung TVs mentioned in this discussion.

Thank you Matt.

Xbox Series X, and PS5 are both working great with this TV, which means in game mode 120Hz, HDR 10 and VRR are all working together without issue, using the same HDMI 2.1 cable as I was using with graphics card. So, it has to be the drivers.

Hi Matt,

Any further update on this? I imagine it is not difficult to replicate the fault?

Thanks for your help thus far.

About 3 weeks ago the problem was replicated by the AMD support team, after I uploaded a video explaining the problem. Sadly, there are no updates as of right now.

Hi @Matt_AMD , same problem here. Using a 50inch QN90B (the 2022 model of the @DiabloSouls 2021 TV, which has 144Hz instead of 120Hz). I have it paired with a 7900XTX.

So my config is 3840x2160 @ 144Hz, 10bit, 4:4:4 Full RGB through a certified HDMI 2.1 cable.
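
As a rough sanity check on that configuration, the sketch below estimates the uncompressed video data rate and compares it with the HDMI 2.1 link. It counts active pixels only and ignores blanking intervals, so the real requirement is higher. The 48 Gbit/s raw rate and the 16b/18b encoding overhead are HDMI 2.1 figures; the function and constant names are illustrative assumptions, not part of any AMD or Samsung tool.

```python
def active_pixel_rate_gbps(width, height, refresh_hz, bits_per_channel, channels=3):
    """Uncompressed data rate of the visible pixels only, in Gbit/s."""
    bits_per_frame = width * height * bits_per_channel * channels
    return bits_per_frame * refresh_hz / 1e9


# HDMI 2.1 FRL: 48 Gbit/s raw, 16b/18b line coding leaves 16/18 as payload.
HDMI21_RAW_GBPS = 48.0
HDMI21_PAYLOAD_GBPS = HDMI21_RAW_GBPS * 16 / 18

# 3840x2160 @ 144 Hz, 10 bits per channel, RGB 4:4:4 (three full channels).
rate = active_pixel_rate_gbps(3840, 2160, 144, 10)

print(f"active pixel data:    {rate:.2f} Gbit/s")
print(f"approx. link payload: {HDMI21_PAYLOAD_GBPS:.2f} Gbit/s")
```

The active pixel data comes to about 35.8 Gbit/s against roughly 42.7 Gbit/s of payload. Whether the full signal fits without compression depends on the blanking timing in use, which this sketch does not model.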

But... same issues as described here. The TV shows FreeSync Premium Pro as active when I enable Game Mode on the TV (because I want to use VRR), but peak brightness (nits) is lower than the TV is capable of, and gamma is locked to 2.2 instead of using ST.2084, which becomes greyed out on the TV, as does Game HDR (HGIG). As a result, some games look awful, either too dark or washed out.

Before the 7900XTX I had an RTX 3090 and had no issues with HDR on this TV, so please release a fix. In case I can help, I registered for AMD Vanguard to join any kind of private beta, as I want to use my PC and TV to run whatever tests are needed.

CRU workaround posted here did not work for me.

Can you provide some examples, such as screenshots of on vs off, and details of the games and settings used? I'll pass that along for investigation.

I'm not sure if you saw our previous statement on this behaviour, I believe it was on page 7 or 8, but I'll add a snippet here in case you missed it.

I'm seeking answers on some of the questions you posed to me a page or two back as I'm not sure. I'll update the thread once I have more information to share.

Hey. Did you even see the video I posted of RE4? HDR isn't working! I sent the video 3 weeks ago when I replied to you, and you keep ignoring the facts? You didn't even reply in 3 weeks' time, just darkness; no explanation of why HDR doesn't work, just "yeah, it works". I don't know if the team testing the issue is blind. I won't be a test subject; I have done hundreds of hours of troubleshooting and writing emails. Test it, and get a person who isn't almost blind to confirm.

In D4, your HDR at brightness 50/50 on max settings is dimmer than Diablo 4 without HDR, with all settings off and brightness 20/50. Fires are 10 times darker and everything looks like ****.

https://we.tl/t-2YuciWNsFw is a WeTransfer link to a video showing the settings plus gameplay of what I mean. Now do take 1 minute of your time in the next 2 weeks and actually watch the video and do something about the issue instead of asking consumers to do the troubleshooting yet again. If the picture were good and it were working, this topic would not exist. New link, because by the time you did something the video had expired: https://we.tl/t-WE2nrRcGaC

This issue is 3 years old and you didn't do anything; all you say is that if the TV loses 50% of its nits it's because it's working right? The number of new games supporting FSPPro is non-existent, so this superior image is total crap. We should at least get an ordinary FreeSync option if you guys don't have a clue how to fix it.

We are expected to provide photos etc. of the issue, when in 3 months of "fixing" there wasn't one explanation of why we can't play games normally: no answers, and nothing done on AMD's side, when this is literally your job. So apparently it's better that we spend our time doing this while the issue hasn't even been worked on.

I've passed on your pictures that you posted for investigation, but the original link you shared had expired. Nonetheless I've now forwarded this on as well for consideration. I'll update the thread again when I have more information to share.

Regarding Diablo 4, please provide a video showing the difference between the two examples (with AMD FreeSync Premium Pro enabled and the settings we suggested on the TV Vs the SDR default settings with HDR off) and I will pass that on too.

Here is a comparison of SDR and HDR with game mode ON. There is direct colour loss, and brightness is dimmer even though SDR is set to lower brightness with no contrast enhancer.

Again, my camera isn't good; the difference in real life is night and day.

Yo matt.

It has been 2 Months since issue was recognised by support team. It is very hard to believe that in 2 months there isn't any news at all regarding this issue.

We the consumers should be notified at what is happening, if this problem is even fixable. I have had GPU for 5 months as of right now and it just doesn't work. I have wanted to do refund, but retailer doesn't allow. Right now I am stuck with GPU that doesn't have working HDR, problem that AMD has since 2021, yet you guys don't give any updates at all. There are a lot of people who use GPU with Samsung tv, all of them have same problem.

There weren't any updates in 2 months, and it is not listed as a known issue in the driver details. I would like to know where we stand. If you guys are unable to fix the problem, let us know and let us refund our GPUs, because this is becoming ridiculous. No replies to emails, no updates on forum posts regarding this issue.

As it appears I got my hopes up too much about issue getting fixed, looks like this issue is gonna take a long time to resolve if it even is getting worked on, but I think since this is affecting a lot of consumers around the world ( since it isn't only QN90A tv that has this issue) that issue should really get worked on.

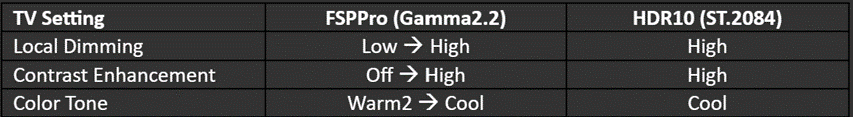

Thank you all for your patience while we investigated the difference in behavior when using HDR on this display, based on the graphics card being used. I can now provide you all with an explanation as to why this occurs.

FreeSync Premium Pro is an AMD HDR solution that primarily focuses on color accuracy, adhering to our certification standards. These Samsung TV models support other HDR modes that offer image vibrancy by adjusting some color settings on the TV, such as contrast enhancement. To preserve our color accuracy, we asked manufacturers to disable these color settings by default for AMD FreeSync Premium Pro. However, it is still possible for users to customize their color settings when using AMD FreeSync Premium Pro to better match the vibrancy of HDR10. For users who prefer color vibrancy over accuracy, try using the suggested settings below on these Samsung TV models.

Thanks for the reply.

I am not certain I understand what is meant by this message. Is HDR working as it is supposed to with FreeSync? Because I still don't understand why, when using FSPPro, we're locked to 2.2 gamma, which makes the screen look very bad. I am also sure that peak brightness is almost 50% lower when using FreeSync Premium Pro.

But I really do wonder how a Samsung TV and AMD can have different HDR: my TV says that FSPPro is supported, while the answer says that AMD's HDR is not the same as Samsung's.

Is there a workaround for this issue? Customers with 6xxx series GPUs can force FSP and get HDR to work, and the difference is night and day. Sadly, I do not agree that gamma 2.2 is the right one, since everybody knows that the gamma for HDR is ST 2084. (When I uploaded the video, both game mode with FSPPro and game mode off with HDR had the same brightness, contrast, local dimming, contrast enhancer, and gamma settings, yet with HDR the game is a lot brighter compared to FSPPro "HDR". It was also recorded using a game that has native FreeSync Premium Pro support; games that don't have it look even worse, by the way.) (Also, games look dim. I am 100% sure that my game shouldn't be so dark with all image enhancement settings on max and brightness on max: the QN90A has 1500 nits peak brightness, but when using FSPP I get 300 nits at most.)

I am sorry again if I misunderstood the message.

Can you confirm that you have AMD FreeSync Premium Pro enabled on the TV and in AMD Software > Display tab? And that you have applied the following settings on the TV correctly? That is Local Dimming - High, Contrast Enhancement to High, and Colour Tone to Cool?

That is correct.

The settings I used for the test pictures above were:

Picture 1: in the AMD driver, FreeSync shows as not supported (because the TV is not in game mode). TV game mode off, HDR in Windows ON, local dimming and contrast enhancer on high, colour tone cool, brightness 50, contrast 50, colour 28, in-game HDR enabled.

Picture 2: in the AMD driver, FSPPro is enabled. HDR on in Windows, TV game mode ON (on the game bar FSPPro is detected, HDR is not detected), local dimming and contrast enhancer on high, colour tone cool, brightness 50, contrast 50, colour 28, in-game HDR enabled.

I have tried to force FreeSync Premium (without Pro) but I can't make it work. It seems that if you have only FreeSync Premium ON, HDR works and is detected on the TV.

Could you please help to confirm whether toggling the OS HDR option (ON or OFF) and then launching the game will have any effect?

We tried Elden Ring and saw a similar de-saturated image with FreeSync Premium Pro, but this only occurred if we launched the game with Game Mode = OFF on the TV and then switched to Game Mode = ON after the game launched. If we reversed the order, then HDR10 appeared de-saturated.

I see you also mention RE4. Is the issue limited to just these two games (Resident Evil 4 + Elden Ring), or do you see similar behaviour with other HDR content, i.e. games and videos? For example, do HDR videos on YouTube also have this washed out appearance?

Could you share additional images with us? Appreciate it.

Sorry. I made a mistake with the last picture posted (the Elden Ring photo); as you mentioned, it was correct.

Well, it happens in all games I play, I suppose. I have played more games since January, but I can't name them off the top of my head.

As seen in the pictures linked here, the screen becomes a lot dimmer. I would like to add that in every QN90A thread and a lot of other TV threads, folks are saying the contrast enhancer makes games look ugly and unrealistic, and I actually agree. Sadly, I do not have any Nvidia GPU to make direct comparisons of games.

I haven't been using the TV with the PC for anything other than gaming, as my PC is about 8 m from the TV. I took a picture of an HDR video but the difference can't be seen, mainly because I have a cheap phone and there is a 3 MB picture size limit. I hope somebody else can post the difference between games as well.

Maybe it's worth noting that when using FSPPro my TV has lower nits detected compared to when using HDR without game mode ON and without FreeSync.

Could you clarify what you mean by this statement please? "Sorry. I made mistake with the last picture posted ( Elden Ring photo), as u mentioned it was correct."

Is this confirmation that if game mode was toggled on before launching Elden Ring, then it was not desaturated? I looked at your examples of FreeSync Premium Pro (FSPP) vs HDR10 in Elden Ring and the FSPP colours look better (more accurate), with HDR10 looking very over saturated to me.

I looked at the other picture examples and I can see that FreeSync Premium Pro looks to have more accurate colours, whereas the HDR images, particularly the Resident Evil example, again looks over saturated to me. This is normal and expected from the FSPP perspective.

The FSPP image will be darker, so it is never going to be as vibrant as HDR10 in this scenario on this TV when using FSPP. Going back to my post on the previous page, FSPP targets colour accuracy over vibrancy offered by HDR10 on these TVs. This is by design and not a driver bug since we expect this to happen.

I understand if you are not keen on this implementation, but from our perspective this is working as we expect it to.

@DiabloSouls However, if you have any more examples of PC games where you believe the image is wrong when enabling game mode and then launching the game, please share them with me and I can pass the examples on for further investigation.

FreeSync Premium Pro is not available on consoles, that is the difference.

Hi Mat,

So you are telling us that FreeSync Premium Pro is working as expected, showing "more accurate colors" than HDR10? The issue here is not saturation or brightness. The issue here is dynamic range! The issue here is that your driver, for some reason, is not displaying high dynamic range (HDR) when in "game mode" but is locked in SDR mode!

Kind regards,

Gordon

It might work for them, but for me this isn't HDR. FSPPro's superior "HDR" is a few light years away from normal HDR in terms of image quality. And I would like to add again that the contrast enhancer destroys picture quality. If AMD could take the time to try out HDR on an Nvidia GPU combined with the mentioned TV, they would instantly see the difference. But sure, it works as expected, the picture is better on FSPPro, just not for almost every single person in this thread. When using FSPPro, blacks get eaten, compared to normal HDR where I can see clearly in dark spots.

And this is all due to the TV not recognising the signal from AMD's GPU. My TV legit doesn't recognise HDR with game mode on, even if FreeSync Premium / Pro is disabled in the driver settings. But I think that's how it is supposed to work, right, AMD?

By "mistake" I meant that I did in fact switch game mode on while in game; that's why I posted a new picture.

I am not going to accept this as a solution. If I want to use HDR, my screen is a few times darker. This isn't supposed to happen. Nobody can say that losing brightness and details means a better picture.

HDR10 content is so bright because of the contrast enhancer. I said in a previous post that the contrast enhancer should be OFF, as it makes games look bad. If i check nits tv settings on windows i nts of brightness. I suppose this is normal?!? I cannot actually believe what I'm reading.

Please use an Nvidia GPU and turn HDR on without the contrast enhancer. Take 30 minutes of your time and check the difference, so we can stop losing time on this when you can clearly see that HDR on Nvidia looks 100000x better.

And no, I don't have any other game. I don't even play games in my free time because I don't have HDR, and without it the game is ****.

As a reply to GordonM: the problem is that there is no FreeSync Premium-only option for those of us who think that games look worse with FSPPro HDR.

If you guys are so certain that this is how your FSPPro picture should look, give us the option to use only FreeSync Premium. This would allow us to have HDR working without any issues, as 6xxx series GPUs have been able to do all this time!

I still don't know why a TV with gamma locked to 2.2 would produce a better picture than a TV that uses the ST.2084 gamma option.
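
For context on the gamma question, here is a minimal sketch comparing the two transfer functions. The PQ constants are the ones defined in SMPTE ST 2084; the 300-nit peak used for the gamma 2.2 curve is only an assumption taken from the figure reported earlier in this thread, and the function names are illustrative. It says nothing about what the TV or the driver actually does internally.

```python
# SMPTE ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_eotf(signal):
    """Map a normalized PQ signal (0..1) to absolute luminance in nits."""
    p = signal ** (1 / M2)
    return 10000.0 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def gamma22_eotf(signal, peak_nits=300.0):
    """Map a normalized signal (0..1) to nits on a gamma 2.2 display.

    peak_nits is an assumed display peak, not a measured value.
    """
    return peak_nits * signal ** 2.2


for s in (0.25, 0.5, 0.75, 1.0):
    print(f"signal {s:.2f}: PQ {pq_eotf(s):8.1f} nits, "
          f"gamma 2.2 {gamma22_eotf(s):6.1f} nits")
```

PQ encodes absolute luminance up to 10,000 nits, while a gamma curve is relative to whatever peak the display uses, so the same signal value maps to very different light levels under the two curves. That mismatch is one reason HDR content shown through a gamma 2.2 curve can look dim or washed out.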

Edit: Did you guys even play Resident Evil 4 with FSPPro on? In the first village, when there are clouds, everything becomes so dark that I can barely see anything outside. I am using all settings on HIGH.

https://we.tl/t-2YuciWNsFw link to we transfer video showing settings + gameplay of what I mean.

If you want your HDR darker, you need to change to full 4:4:4 and 12-bit colour, and lower, not increase, the HDR. Because Nvidia isn't HDR and isn't full colour, it looks blacker.

look imagine old 70's analog TV.. the TV had like what 256 colors.. the red was ALL RED or 0 RED.. but in a real world theres thousands of shades of reds the human eye can see and different shades of brightness. OLD TV ANALOG signal 70's big chunky rabbit ears TV even with more modern used for 30 years SMTP C TV broadcasting standards had a limited colors range and used a specific either 50 whitepoint or NTSC D60 was it? if you use a modern samsung or other type of miniLED/LCD panel with a few thousand of nits of brightness or a dolbyvision screen and specify old TV type colorspace of a handful of colors and max brightness.. the daylight sun glare reflecting off car winscreens or water or whatever is about 10,000nits HDR its what our TV's are intended to use.. many samsung displays are like 4k nits most blurays are mastered 1000nits.. most LG and oled displays and phones were like 700 nits tops especially laptops the more modern ones u pay premiums for 1000nits -2000nits HDR displays.. but just 1000 nits display if its set to old TV one of 8 reds and max brightness its super laser beam to the face hurts your eyes bright.. its the secret to nvidia pretending their absence of hardware 0 bit depth 0 radiation 0true light not math not infinite not additive or divisble infinitely fake tracing of rays instead of actual reality real light radiation like AMD has whats known as "80's video game cartoony brightness and amped colors and sort of richer blacks because its not a precise or specific black its all just max black or what is called CRUSHED blacks and COMPRESSED FAKE GARBAGE... HDR means trees shadows can still see more details being visible not be a black shadow you only see black in.. understand? its weaker gentler blacks that preserve details.. see how AMD has INFINITELY MATHEMATICALLY INFINITELY better image quality and is sharper and more detailed? that means its better at HDR.

i must further elaborate that when the human eye seeing in some 300million colors 10bit display pixel mechanisms 1.2billion shades of color are all your tv pixel switching mechanisms require till the end of time probably for the human eyeballs. But AMD supports higher than that and the software photoshop setting 64bit color or 32bit color and the project file workspace settings and publishing or printing settings and so on.. is what you specify and allow for higher or more color data like HDR metadata is what you are ADDING to the project file or file format in the OS and display driver when gaming you select 12bit RGB 444 it is completely unrelated to your display panels FILE FORMAT the same way you can output AC3 live dolby digital re-encoded audio as surround sound but you are playing back a .wav or PCM soundfile or an mp3 or an AAC file.. you are OUTPUTING to an AC3 DOLBY HARDWARE DEVICE IN DOLBY AC3 FORMAT separate to your mp3 files stereo workspace and project file or wave audio.. understand? your OS and games can game in 12bit full RGB 444 and your TV can output in YCBCR game mode freesync with 10bit pixels on the display panel color switching mechanisms with 1024 R 1024 G and 1024B.. understand you MUST enable 10bit display panel output in ADRENALINE at the very bottom of the tiny words advanced tree toggle of the global graphics settings. .

you wanna set sony games like devil may cry 5 and RE village to all ultra max but maybe set the shadows to one down from max by using a text file to memory clamp or texturessize 0.002 shadowsquality0.5 or something else it virus like malware slams your VRAM and u get the max quality textures full quality and details and use the tricks of the true light to sort of pinhole camera lower the vram usage with the imagequality2. change the config.ini file in the game directory so its shadowscache uses CPU and not GPU.. add maybe skincache and other things and specify CPU. and you game a fair bit better. but the games normalmeshes arent normal.. you may want to manually specify tessellation and model complexity and it will look same or better but game .. better? set the display to sRGB and uhh i guess if you go 4k it doesnt game in 1080p with its 4k textures things like hair will be blocky pointy pixels.. u must then use the fake colorspace so set your adrenaline to ycbcr444 10bit or 12bit 4k as sony uses a high speed uhh probably not hardware color correction but i'd imagine it would be or could be or should be hardware theyre a camera company.. to adjust for it so when me I using config files to defaulting and correction and conversion for all things into fullcolorvolume true color max HDR is actually not as needed for sony stuff but still lock in aperture minus a few infinitybillions to adjust the camera as the default is sabotaged by novideo cards company as they hide the fact they not true light and cant aperture.

All right.

Can you start replying, MAYBE??? You guys at AMD act like this isn't a problem; you needed 2 months to say "it works".

While it's obvious that you didn't even test anything, as Resident Evil is so **bleep**ty it's better to use a phone as a screen rather than an FSPPro TV.

I work every day, and when you guys answer I always answer the same day, and it's not even my job to do it. While you guys are employed to help people, you need a whole week for what? So you can just say something pointless again and disappear for a whole week again?

Get your **bleep** together. Games are not playable on FSPPro, but how would you guys know; you haven't done a SINGLE thing in 3 months' time. The only thing was "it works". NO, it doesn't work. Check the freaking video I posted on the same day you answered. Either say you are useless and can't fix a problem that has been here for more than 3 years, or send a message to Sapphire Radeon regarding my RMA.

I won't spend more time trying to answer your dumb questions when you clearly can't take 1 minute of your 8-hour shift to try to help us. Either it works as it's supposed to, in which case I DEMAND a refund for my GPU, because in my life I haven't seen worse picture quality or support for high-end products (I am sorry, for all AMD GPUs!!!!!!), or you guys don't even know where the problem is and cannot fix it, in which case I again DEMAND a refund.

I have had the GPU for almost 6 months. In 6 months there was 1 update that isn't useful at all and is total nonsense that just confirms nothing is getting done about a problem every user has. I think there should be a written notice on every AMD GPU stating that HDR is bad, and that anybody getting a new GPU for HDR gaming definitely shouldn't use AMD's GPUs!

Also, the best feature of FSPPro is that when I turn on HDR in game mode my TV instantly loses almost 800 nits of brightness. Guys, don't you worry, this is working as it should. An SDR game at 20 brightness on the TV is brighter than an HDR game with all settings maxed.

Not a single person who commented here agrees that the picture is better than HDR, yet you act like nobody knows anything except you!

So this is it? No explanation as to why we cannot use HDR on anything? No explanation why we have all HDR settings locked on our TV due to the TV not displaying HDR at all?

No explanation why we cannot get HDR Gamma on TV, forcing us to use "HDR" on "SDR" Gamma?

In 2 weeks there is no explanation?

If you are under 60 fps, or sometimes at 60, or you disable game mode or switch it, it may detect HDR and switch to MOVIE or entertainment mode, and look brighter and more colorful and use more 'enhancers' for contrast or local dimming etc. But then you don't get the gliding, buttery-smooth mouselook where you can, say, in Assassin's Creed move your mouse across the mouse pad and see it zooooom a billion miles an hour in trillions of rotations at supersonic speeds, mouselook of envy! In entertainment mode or 'dynamic' viewing, things like the judder reduction and blur reduction will crank up a notch too. But you possibly had totally different picture settings. As Matt mentioned, you maybe also need to shift the default WARM 2 of HDR to cool and adjust the backlighting or other things to be how you prefer. But the middle pic was excellent HDR. Keep in mind your Windows 10 or 11 might still be doing Auto HDR too.

Bump?

Hi Mat,

First I want to thank you for your effort.

Then, I have to say that your explanation is not acceptable. The issue is not related to TV settings. BTW, here is the quote from the AMD site regarding FreeSync Premium Pro:

"AMD FreeSync™ Premium Pro technology raises the bar to the next level for gaming displays, enabling an exceptional user experience when playing HDR games, movies and other content:

- At least 120hz refresh rate at minimum FHD resolution

- Support for low framerate compensation (LFC)

- Low latency in SDR and HDR

- Support for HDR with meticulous color and luminance certification"

https://www.amd.com/en/technologies/free-sync

Now, let me repeat what I've already stated here - I have PS5 and Xbox Series X (which both have AMD graphics chips) connected to this TV and both are working flawlessly, using ALLM (game mode), VRR, HDR, 120Hz refresh rate with very low latency. So the issue is with the drivers 1000%.

Thank you,

Gordon