Drivers & Software

When is RX 5700 XT and HDMI 2.1 update coming?

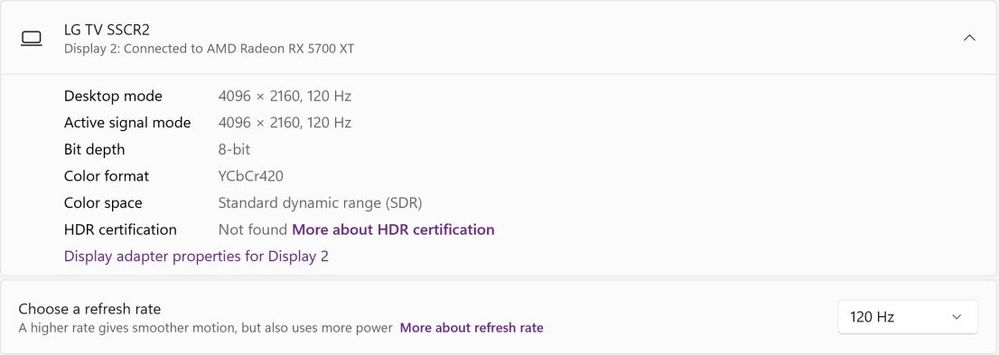

I was wondering if it will be possible to get 4K @ 120 Hz and YCbCr 4:4:4 out of this GPU. I have the right cable (8K certified, 48 Gbps). But when I set the desktop to 4K 120 Hz, all I get is this:

8-bit, YCbCr 4:2:0, SDR. If I set the refresh rate all the way down to 30 Hz, then it's 12-bit, YCbCr 4:4:4, HDR.

I've read many forum posts on different websites saying that the GPU is only capable of HDMI 2.0b. Then there was mention that AMD would release a driver/firmware update that would upgrade the HDMI port to 2.1. I don't know how that's even possible, but what happened? Is this never going to happen now?

Do I need to start looking for a GPU that can unlock the full potential of my TV's hardware?
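As a rough check on why the driver only offers 8-bit 4:2:0 at 4K 120 Hz, the sketch below compares each mode's TMDS clock against HDMI 2.0's 600 MHz ceiling. It assumes the standard CTA-861 4K timings (1188 MHz pixel clock at 120 Hz, 594 MHz at 60 Hz, 297 MHz at 30 Hz) and ignores audio and other overhead; the function and table names are hypothetical.

```python
# Rough HDMI 2.0 TMDS bandwidth check (sketch; CTA-861 pixel clocks assumed).
HDMI20_MAX_TMDS_MHZ = 600.0  # HDMI 2.0 limit: 600 MHz x 3 channels = 18 Gbps raw

# Pixel clocks (MHz) for the standard CTA-861 4K timings, blanking included
PIXEL_CLOCK_MHZ = {
    (3840, 2160, 120): 1188.0,
    (3840, 2160, 60): 594.0,
    (3840, 2160, 30): 297.0,
}


def tmds_clock_mhz(pixel_clock_mhz: float, chroma: str, bpc: int) -> float:
    """TMDS clock needed for a chroma format at a given bits-per-component."""
    if chroma == "422":
        # 4:2:2 is carried in a fixed 12-bit container at the pixel rate
        return pixel_clock_mhz
    depth_factor = bpc / 8  # deep colour: 10-bit = 1.25x, 12-bit = 1.5x
    if chroma == "444":     # RGB or YCbCr 4:4:4
        return pixel_clock_mhz * depth_factor
    if chroma == "420":     # two pixels per TMDS clock
        return pixel_clock_mhz / 2 * depth_factor
    raise ValueError(f"unknown chroma format: {chroma}")


def fits_hdmi20(width: int, height: int, hz: int, chroma: str, bpc: int) -> bool:
    """True if the mode stays under the HDMI 2.0 TMDS limit."""
    clock = tmds_clock_mhz(PIXEL_CLOCK_MHZ[(width, height, hz)], chroma, bpc)
    return clock <= HDMI20_MAX_TMDS_MHZ


if __name__ == "__main__":
    modes = [(120, "444", 8), (120, "420", 8), (120, "420", 10), (30, "444", 12)]
    for hz, chroma, bpc in modes:
        ok = fits_hdmi20(3840, 2160, hz, chroma, bpc)
        print(f"4K {hz:>3} Hz {chroma} {bpc:>2}-bit: {'fits' if ok else 'exceeds'} HDMI 2.0")
```

Run as a script, it reports that the only 4K 120 Hz combination under the limit is 8-bit 4:2:0 (594 MHz), while 12-bit 4:4:4 fits at 30 Hz (445.5 MHz), which lines up with what the driver shows. HDMI 2.1 replaces TMDS with FRL signalling at up to 48 Gbps, so a 48 Gbps cable only helps when both the GPU port and the TV port are HDMI 2.1.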

This is impossible: we don't have an HDMI 2.1 port, we have the ages-old HDMI 2.0 port on our products.

Only the later models might have HDMI 2.1 ports, but I admit I haven't looked into that, as I don't really game much.

So I was already disappointed that they introduced the 5700 cards with that old port.

On the other hand, the card can't really do 4K well; it isn't really made to do that.

Sure, it can run 4K a bit, but not in recent games, as it would choke on the graphics.

It can run 1440p, but when I tried a few heavy games you could already feel and see that it's limited.

Now, the ASRock card I have, the Taichi RX 5700 XT, performs very well and often scores above many overclocked cards, without me even having to overclock it. In 3DMark it lands in the highest performance regions at factory settings with my 9900KF, which constantly runs at different speeds.

But it always ends up among the overclocked systems in performance, never among the stock ones.

Anyway, if you really want a card capable of running TRUE 4K, you'll probably have to wait until they release a card that can really do that. I admit I haven't looked at AMD's 6900 models at all, so those cards might be able to do it.

But they're so insanely expensive they're not even worth looking at.

Hi guys,

I have an RX 5700 XT too, and I'm very disappointed about having just one HDMI 2.0 port and three DisplayPorts, lol!

I'm trying to connect my PC to a Samsung Q80A QLED, but my TV doesn't even recognize my PC!!

I've seen that you don't have this problem... you can see your PC.

What could be the cause in my case? The drivers are up to date...

Get a DisplayPort-to-HDMI cable. You can't have more than one HDMI output, but you can have three DisplayPort outputs and one HDMI.

There is hope that it could be not HDMI 2.1 but maybe DisplayPort 1.4 instead. Enabling both the HDMI 2.0b and DisplayPort outputs on one 5700 XT card and adjusting refresh rate, bit depth, audio and resolution can lower quality when both are used at the same time, from interference and bandwidth. That suggests the DisplayPort 1.4 output uses the exact same controller as the HDMI 2.0b output, or shares the same bus. This is why you should always try to use one or the other, HDMI or DP, not both, and disable all audio/video output ports not in use. Also try to set them all to the same refresh rate and resolution, and where an output is just for audio, use clone display rather than extend.

So it may be possible to have a sort of DP 1.4-over-HDMI output. That might be what the DSC (Display Stream Compression) advertised on the box of all these cards is for: getting the HDMI 2.0b port to output DP 1.4, or something like that. It's very curious and interesting; I wonder why it's not enabled on both.
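DSC is a VESA compression scheme usable on DisplayPort 1.4 links. As a rough illustration of why it matters at 4K 120 Hz, the sketch below compares a few stream rates against the payload of a four-lane HBR3 (DisplayPort 1.4) link. It assumes the CTA-861 4K 120 Hz pixel clock of 1188 MHz, a hypothetical DSC target of 12 bits per pixel, and ignores DisplayPort framing overhead; the names are illustrative only.

```python
# DisplayPort 1.4 (HBR3) link budget with and without DSC (rough sketch).
HBR3_GBPS_PER_LANE = 8.1       # raw line rate per lane
LANES = 4
DP_8B10B_EFFICIENCY = 8 / 10   # DP 1.x 8b/10b channel coding overhead

PIXEL_CLOCK_4K120_MHZ = 1188.0  # assumed CTA-861 timing; reduced blanking is lower
DSC_TARGET_BPP = 12.0           # hypothetical DSC target bits per pixel


def dp14_payload_gbps() -> float:
    """Usable data rate of a 4-lane HBR3 link after 8b/10b coding."""
    return HBR3_GBPS_PER_LANE * LANES * DP_8B10B_EFFICIENCY


def stream_gbps(pixel_clock_mhz: float, bits_per_pixel: float) -> float:
    """Video stream rate: pixel clock (MHz) times bits per pixel, in Gbps."""
    return pixel_clock_mhz * bits_per_pixel / 1000.0


def fits_dp14(pixel_clock_mhz: float, bits_per_pixel: float) -> bool:
    return stream_gbps(pixel_clock_mhz, bits_per_pixel) <= dp14_payload_gbps()


if __name__ == "__main__":
    budget = dp14_payload_gbps()
    for label, bpp in [("RGB 8-bit", 24.0), ("RGB 10-bit", 30.0), ("DSC", DSC_TARGET_BPP)]:
        need = stream_gbps(PIXEL_CLOCK_4K120_MHZ, bpp)
        verdict = "fits" if need <= budget else "too much"
        print(f"4K120 {label:<10} needs {need:6.2f} of {budget:.2f} Gbps -> {verdict}")
```

Under these assumptions, uncompressed 10-bit RGB (about 35.6 Gbps) is well over the roughly 25.9 Gbps payload, while a 12 bpp DSC stream (about 14.3 Gbps) fits comfortably. Displays that use reduced-blanking timings need a lower pixel clock, so treat the uncompressed figures as an upper bound.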

As for setting it down to 30 Hz and using YCbCr 4:4:4 or 4:2:0: you only need to lower it to 30 Hz to get it to switch to full RGB when using RGB. By the way, all light and all colour are in fact RGB, only RGB, so YCbCr's compressed colours are lousy. Unless your TV or monitor can't do RGB, always use RGB if you want to ray trace, use HDR, game, or anything else. But full RGB 4:4:4 at 120 Hz with HDR only seems doable at 1080p on HDMI 2.0b unless our situation changes, which isn't likely. If you enable RSR, FSR or CXR and upscale from 1080p to 4K, you will probably exceed the bandwidth limits too.
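The post above calls YCbCr "compressed". In the sense relevant here, 4:2:0 keeps a full-resolution luma (Y) sample for every pixel but stores only one Cb and one Cr sample per 2x2 block, so it carries 1.5 samples per pixel instead of 3. The sketch below shows this for BT.709, the HDTV colour standard, assuming normalized full-range values and ignoring quantization; the function names are hypothetical.

```python
# BT.709 RGB -> YCbCr conversion and 4:2:0 subsampling of one 2x2 block (sketch).
KR, KB = 0.2126, 0.0722  # BT.709 luma coefficients
KG = 1.0 - KR - KB


def rgb_to_ycbcr709(r: float, g: float, b: float) -> tuple[float, float, float]:
    """Normalized RGB in [0, 1] -> Y in [0, 1], Cb/Cr in [-0.5, 0.5] (no quantization)."""
    y = KR * r + KG * g + KB * b
    cb = (b - y) / (2.0 * (1.0 - KB))
    cr = (r - y) / (2.0 * (1.0 - KR))
    return y, cb, cr


def subsample_420(block):
    """2x2 block of RGB pixels -> four luma samples plus one shared Cb and Cr."""
    ycc = [rgb_to_ycbcr709(*px) for row in block for px in row]
    lumas = [y for y, _, _ in ycc]
    cb = sum(c for _, c, _ in ycc) / len(ycc)  # chroma is averaged over the block
    cr = sum(c for _, _, c in ycc) / len(ycc)
    return lumas, cb, cr


# Average samples stored per pixel for each chroma format
SAMPLES_PER_PIXEL = {"444": 3.0, "422": 2.0, "420": 1.5}

if __name__ == "__main__":
    red, blue = (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)
    lumas, cb, cr = subsample_420([[red, blue], [red, blue]])
    print("luma per pixel:", [round(y, 4) for y in lumas])
    print("shared Cb/Cr:", round(cb, 4), round(cr, 4))
```

For a block that is half pure red and half pure blue, every pixel keeps its own brightness, but all four share a chroma value that is neither red nor blue. That is why sharp coloured edges, such as desktop text, can look smeared at 4:2:0 while films, which rarely have such edges, look fine.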

If you are printing, use the CMYK colour space; for everything else, RGB is how light works, so it's how computers work. YCbCr is for '70s photo negatives, film reels, and the limited colour space of VHS and analog TV recordings, which don't have true light, which is why Hollywood gets away with it. HDMI itself has nothing to do with Hollywood, but it uses Hollywood copy protection in the port (HDCP). It was originally something like a "sikai tech digital media interface" and was full bandwidth, full spec, but it was said to be expensive, so they released it in stages as 1.0 through 1.3, 2.0b and 2.1?

Also, 4K gaming is said to be pretty okay on the PS4/PS4 Pro and Xbox One/One X, which use something like an RX 480 with probably 4 GB of VRAM, so I don't see what the issue is with your vastly superior hardware. An RX 6000 series card is the same unit of measurement, meaning THOUSANDS of times better than an RX 480. I believe "RX" is a unit of thousands of billions of rays per pixel, as opposed to the Nvidia/Intel/Microsoft default of 8 rays per pixel, but that might be per function, to be fair. I use ancient ray tracing, more complex and better ray marching, AND the latest, greatest, best 1970s ray casting all at once, and it's pretty good on my 5700 XT. How are those light shafts feeling? Really shafted, huh? Are the god rays burning a bit too bright?

I think it's so close... I'm so excited, and even more so when I think about it arriving...
