
Question regarding memory management, NDRange() and optimization

Hello,

I am currently writing my thesis on genome assembly; at the moment I am working on a parallel approach based on the overlap principle.

(Each string is compared with all other strings to find the best overlap.)

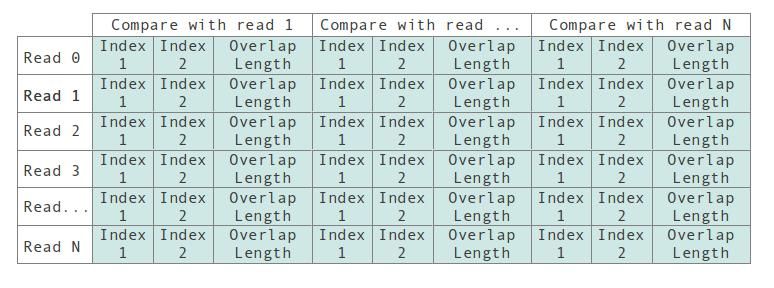

The kernel I currently use has one input buffer in which all reads are serialized. Using get_global_id(0), some creative indexing and a few for loops, each instance of the kernel (work-item? thread?) compares its own read, number get_global_id(0), with all the other reads and records the overlap information in the output buffer, following the structure shown above.

Here's my kernel (or see compare2.cl in the attachment):

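```c
#pragma OPENCL EXTENSION cl_amd_printf : enable

#define MAX_READ_LENGTH 750

__kernel void compare_string(__global char* input, __global int* output,
                             const unsigned int count, const unsigned int N)
{
    // global id -> index of the first read (string 1)
    int x = get_global_id(0); // 0 -> count
    int y, k, j;

    // the global size is padded up to a multiple of 256, so the extra work-items must do nothing
    if (x >= count)
        return;

    for (y = 0; y < count; y++) // y: index of the second read (string 2)
    {
        for (j = 1; j < MAX_READ_LENGTH-1; j++)
        {
            // does the suffix of read x starting at j begin with the first char of read y?
            if (input[x*(MAX_READ_LENGTH-1)+j] == input[y*(MAX_READ_LENGTH-1)])
            {
                for (k = 1; k < MAX_READ_LENGTH-1-j; k++)
                {
                    if (input[x*(MAX_READ_LENGTH-1)+j+k] != input[y*(MAX_READ_LENGTH-1)+k])
                    {
                        break;
                    }
                }
                // the k loop ran to the end: suffix of read x from j equals the prefix of read y
                if (k+j == MAX_READ_LENGTH-1)
                {
                    output[x*count*3 + y*3 + 0] = j; // offset of the overlap in read x
                    output[x*count*3 + y*3 + 1] = 0;
                    output[x*count*3 + y*3 + 2] = k; // overlap length
                }
            }
        }
    }
}
```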
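For checking the GPU output on small inputs, it can help to have a plain C version of the same loop nest running on the CPU. The sketch below mirrors the kernel one-to-one (same read stride of `MAX_READ_LENGTH - 1` chars, same three-int record `{j, 0, k}`, same rule that a later `j` overwrites an earlier one). The function name `overlap_reference` is hypothetical, and the caller is assumed to pre-fill `output`, because pairs without an overlap are never written. It also hoists the per-read base offsets out of the inner loops, which is the kind of manual change asked about in question 3; optimizing compilers usually perform this loop-invariant hoisting on their own.

```c
#include <stddef.h>

/* CPU reference for the compare_string kernel (hypothetical helper).
 * input : count reads, each occupying `stride` chars (MAX_READ_LENGTH - 1 in the kernel)
 * output: count * count records of 3 ints {j, 0, k}; left untouched when no overlap is found */
void overlap_reference(const char *input, int *output,
                       unsigned count, int stride)
{
    for (unsigned x = 0; x < count; x++) {            /* one "work-item" per read x */
        const char *rx = input + (size_t)x * stride;  /* hoisted base of read x */
        for (unsigned y = 0; y < count; y++) {
            const char *ry = input + (size_t)y * stride;
            for (int j = 1; j < stride; j++) {
                if (rx[j] != ry[0])
                    continue;                          /* suffix of x must start with y[0] */
                int k;
                for (k = 1; k < stride - j; k++)
                    if (rx[j + k] != ry[k])
                        break;
                if (k + j == stride) {                 /* suffix x[j..] == prefix of y */
                    int *rec = output + ((size_t)x * count + y) * 3;
                    rec[0] = j;  /* offset of the overlap inside read x */
                    rec[1] = 0;
                    rec[2] = k;  /* overlap length */
                }
            }
        }
    }
}
```

Because a later matching `j` overwrites an earlier one, the stored record ends up being the shortest full overlap for each pair; if the longest one is wanted, leaving the `j` loop after the first write would be the change to make, in both the kernel and this reference.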

With this in mind, I have some OpenCL questions 🙂

Question 1: NDRange (from 1D to 2D?) (easy?)

Currently I initialize and execute the OpenCL kernel like this:

```cpp
// setting up input & output OpenCL buffers
cl::Buffer inputBuffer(context, CL_MEM_READ_WRITE | CL_MEM_COPY_HOST_PTR, sizeof(cl_char)*MAX_READ_LENGTH*count, input_data);
cl::Buffer resultBuffer(context, CL_MEM_READ_WRITE, sizeof(cl_int)*count*count*3); // 3 integers per comparison

// starting kernel
cl::Kernel kernel(program, "compare_string");
cl::KernelFunctor kernel_func = kernel.bind(cmdqueue, cl::NDRange((int)((count/256)+1)*256), cl::NDRange(256));

// executing kernel
kernel_func(inputBuffer, resultBuffer, count, count);

// data retrieval (blocking read of the result buffer)
cmdqueue.enqueueReadBuffer(resultBuffer, true, 0, sizeof(cl_int)*count*count*3, output_data);
```
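The global size expression `((count/256)+1)*256` pads `count` up to a multiple of the work-group size, but it also adds a whole extra group when `count` is already a multiple of 256, and in every case the padding work-items see `get_global_id(0) >= count`. A small sketch of the usual round-up in C; `round_up` and `original_padding` are hypothetical helper names, and the kernel still needs an `if (x >= count) return;` style guard so the padded work-items do nothing.

```c
#include <stddef.h>

/* Smallest multiple of `local` that is >= n (local must be > 0). */
size_t round_up(size_t n, size_t local)
{
    return ((n + local - 1) / local) * local;
}

/* Padding as written in the host code above, for comparison. */
size_t original_padding(size_t n, size_t local)
{
    return ((n / local) + 1) * local;
}
```

On the host this would become `cl::NDRange(round_up(count, 256))`; for `count = 1024` it gives 1024 work-items instead of 1280.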

My NDRange goes from 0 to N, where N is the number of reads. In the kernel code I also have a for loop (over y) that goes from 0 to N.

Isn't it possible to remove that for loop and replace it with get_global_id(1)? I've tried this, but simply changing the kernel.bind(...) line to the following does not work:

```cpp
cl::KernelFunctor kernel_func = kernel.bind(cmdqueue, cl::NDRange((int)((count/256)+1)*256, (int)((count/256)+1)*256), cl::NDRange(256, 256));
```

This results in the error `OpenCL error: clEnqueueNDRangeKernel(-54)`, which is not really helpful.

Does anyone have an idea how to do this correctly?

Question 2: memory management (concurrent access)

Once question 1 is resolved (and after a few adjustments), I will run into other problems, namely that several threads could try to access the same global variable at the same time.

My understanding of the barrier function is as follows:

```
some code
some code
barrier(CLK_GLOBAL_MEM_FENCE);
if (condition)
    write to global memory
```

Each thread will execute the first two lines, wait at the barrier until all other running threads reach it, and then the threads will execute the write one after the other (serially?), ensuring correct access to global memory.

Is this understanding correct?

Question 3: general optimization

I am currently comparing characters with each other. Would this be faster if I used integers? (I have heard about vectors, but any additional information is welcome.)

I calculate my indices quite frequently. I assume that storing the constant part in a private variable would reduce the amount of calculation?

(Or is this already optimized away by the compiler?)

Regards, Kevin

---

Error -54 means CL_INVALID_WORK_GROUP_SIZE. It is because you specified 256*256 work-items in a work-group; leave it at 256*1. All error codes are listed in the cl.h header file.
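The conditions behind error -54 can be checked on the host before enqueueing. The sketch below is a simplified check, assuming a limit of 256 work-items per group as in this thread (on real hardware, query `CL_DEVICE_MAX_WORK_GROUP_SIZE` and `CL_KERNEL_WORK_GROUP_SIZE` instead) and the OpenCL 1.x rule that each global dimension must be divisible by the matching local dimension. The name `ndrange_ok` is hypothetical.

```c
#include <stdbool.h>
#include <stddef.h>

/* Simplified pre-check for CL_INVALID_WORK_GROUP_SIZE on a 2D launch. */
bool ndrange_ok(const size_t global[2], const size_t local[2], size_t max_wg)
{
    if (local[0] * local[1] > max_wg)        /* too many work-items in one group */
        return false;
    for (int d = 0; d < 2; d++)
        if (local[d] == 0 || global[d] % local[d] != 0)  /* must divide evenly */
            return false;
    return true;
}
```

With a local size of (256, 1), the second global dimension needs no padding at all, since every size is divisible by 1.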

You can only synchronize work-items within one work-group. The only way to perform global synchronization is through kernel execution, i.e. by ending one kernel launch and starting another.

Yes, you can read an int and reinterpret it as a char4 (as_char4()) for better read performance.
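Since global synchronization only happens between kernel launches, one way to pick a single best overlap per read is to split the work into two passes: the first launch fills the whole pair table (as the current kernel does), and a second launch, which starts only after the first has finished, reduces each row. A CPU sketch of that second pass, assuming "best" means the longest overlap length `k`, that the table was pre-filled with -1 for pairs without an overlap, and that a read's overlap with itself is ignored; `best_overlap_per_read` is a hypothetical name.

```c
#include <stddef.h>

/* Pass 2 of a two-launch scheme: for each read x, find the read y with the
 * longest recorded overlap. It runs only after pass 1 has written the whole
 * table; that launch boundary is the "global barrier".
 * table : count*count records {j, 0, k}, with k == -1 meaning "no overlap"
 * best_y: output, one entry per read, -1 if no overlap was found */
void best_overlap_per_read(const int *table, unsigned count, int *best_y)
{
    for (unsigned x = 0; x < count; x++) {   /* one work-item per read in a kernel */
        int best_k = 0;
        best_y[x] = -1;
        for (unsigned y = 0; y < count; y++) {
            int k = table[((size_t)x * count + y) * 3 + 2];
            if (y != x && k > best_k) {      /* skip self-comparison (assumption) */
                best_k = k;
                best_y[x] = (int)y;
            }
        }
    }
}
```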

---

If this is a core part of your thesis, then surely it is up to you to work much of this out? Parallel algorithms are challenging, which is no doubt why this is part of a thesis.

So I won't go into the specifics of your search algorithm, but in general:

q1: If you go to 2D, use x (get_global_id(0)) to index along x and y (get_global_id(1)) to index along y, so that memory accesses can be coalesced where possible, with the data stored in rows indexed by y (element (x, y) at y * width + x).
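To illustrate the 2D mapping, here is a CPU emulation of a 2D NDRange in C: the two outer loops play the role of the runtime, and `compare_pair` is what one work-item would do once the `y` loop is replaced by `get_global_id(1)`. Following the advice above, dimension 0 is mapped to the second read `b`, because `b` is the fastest-varying index in the output layout `(a * count + b) * 3`, so neighbouring work-items write neighbouring records. The names `compare_pair` and `run_2d` and the padded global size are illustrative assumptions.

```c
#include <stddef.h>

/* Body of one work-item in a 2D launch: gid0 ~ get_global_id(0), gid1 ~ get_global_id(1). */
static void compare_pair(const char *input, int *output, unsigned count,
                         int stride, size_t gid0, size_t gid1)
{
    if (gid0 >= count || gid1 >= count)    /* padded work-items do nothing */
        return;
    size_t a = gid1, b = gid0;             /* a: first read, b: second read */
    const char *ra = input + a * stride, *rb = input + b * stride;
    for (int j = 1; j < stride; j++) {
        if (ra[j] != rb[0])
            continue;
        int k;
        for (k = 1; k < stride - j; k++)
            if (ra[j + k] != rb[k])
                break;
        if (k + j == stride) {
            int *rec = output + (a * count + b) * 3;  /* adjacent b -> adjacent records */
            rec[0] = j; rec[1] = 0; rec[2] = k;
        }
    }
}

/* Emulates a 2D NDRange of global0 x global1 work-items (both >= count). */
void run_2d(const char *input, int *output, unsigned count, int stride,
            size_t global0, size_t global1)
{
    for (size_t gid1 = 0; gid1 < global1; gid1++)
        for (size_t gid0 = 0; gid0 < global0; gid0++)
            compare_pair(input, output, count, stride, gid0, gid1);
}
```

In OpenCL C the same body would start with `size_t b = get_global_id(0); size_t a = get_global_id(1);` and could be launched with a global size of (count rounded up to a multiple of 256, count) and a local size of (256, 1).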

q2: A barrier with a global memory fence is only needed if work-items in the same work-group write and then read the same global memory. But since you cannot use it to communicate with other work-groups through global memory, I've never found a use for it (I've always had enough local memory for such communication).

It will not serialize anything either: if you need unique output indices, use global atomics (on AMD, use an atomic counter).
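The atomic approach typically looks like an append buffer: each work-item that finds an overlap reserves a unique slot by atomically incrementing a shared counter and writes its record there, so no barrier or serialization is needed. A CPU sketch with C11 `<stdatomic.h>`; in OpenCL C the counterpart would be `atomic_inc()` on a `__global int` (or the AMD atomic counter mentioned above). The record layout `{x, y, j, k}` and the name `append_overlap` are hypothetical.

```c
#include <stdatomic.h>
#include <stdbool.h>

typedef struct { int x, y, j, k; } overlap_rec;   /* hypothetical compact record */

/* Reserve a unique slot with an atomic fetch-and-add, then fill it.
 * Returns false (and writes nothing) if the output buffer is full. */
bool append_overlap(atomic_int *counter, overlap_rec *out, int capacity,
                    int x, int y, int j, int k)
{
    int slot = atomic_fetch_add(counter, 1);   /* old value = this caller's slot */
    if (slot >= capacity)
        return false;
    out[slot] = (overlap_rec){ x, y, j, k };
    return true;
}
```

The final counter value tells the host how many records were produced (including any that did not fit), so it can size the read-back or re-run with a bigger buffer.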

q3: Integers (or longs?) will probably be faster, although you have to handle byte alignment and the edge cases yourself, so it can get messy (if you don't need byte alignment, it will be much faster). OpenCL has no support for rotating vectors or permuting them dynamically, so streaming charN sequences at various byte offsets isn't practical; but you don't need vectors to get good performance from a GPU anyway. In other words, you're probably better off using int/long than char4. AMD has some extensions that help with this, e.g. amd_bytealign().
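A CPU sketch of the "compare as integers" idea in C: it compares four characters at a time as 32-bit words and falls back to single bytes for the tail or the word that contains the mismatch. Loading through `memcpy` avoids relying on the reads being 4-byte aligned, which is the alignment and edge-case bookkeeping described above. `match_len` is a hypothetical helper.

```c
#include <stdint.h>
#include <string.h>

/* Length of the common prefix of a and b, up to n chars, compared 4 at a time. */
size_t match_len(const char *a, const char *b, size_t n)
{
    size_t i = 0;
    while (i + 4 <= n) {                  /* word-sized steps */
        uint32_t wa, wb;
        memcpy(&wa, a + i, 4);            /* alignment-safe 32-bit loads */
        memcpy(&wb, b + i, 4);
        if (wa != wb)
            break;                        /* mismatch is somewhere in these 4 bytes */
        i += 4;
    }
    while (i < n && a[i] == b[i])         /* finish byte by byte */
        i++;
    return i;
}
```

With `rx` and `ry` pointing at the start of reads x and y, the kernel's full-overlap test (`k + j == MAX_READ_LENGTH-1` after the `rx[j] == ry[0]` check) corresponds to `match_len(rx + j, ry, stride - j) == stride - j`, where `stride = MAX_READ_LENGTH - 1`.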