Archives Discussions

- AMD Community

- Communities

- Developers

- Devgurus Archives

- Archives Discussions

- OpenCL 8 GPU DGEMM (5.1 TFlop/s double precision)....

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

OpenCL 8 GPU DGEMM (5.1 TFlop/s double precision). Heterogeneous HPL (High Performance Linpack from Top500).

Pavel Bogdanov, Institute of System Research Russian Academy of Sciences (NIISI), bogdanov@niisi.msk.ru

INTRO

Nowadays heterogeneous computing becomes more and more popular. In november 2011 three of top5 supercomputers had hybrid architecture. In the last version (june 2012) of this list they were pushed out by computers with new IBM BlueGene/Q architecture, built with standard multicore processors. But according to experts, it is impossible to construct an exaflop-class supercomputer using only multicore CPUs, so these computers may have hybrid architecture.

Modern hybrid machines are built with massively-parallel accelerators, such as graphic cards (GPGPU). Intel MIC and others. How can these devices be programmed? At the moment there are two stable programming models: CUDA and OpenCL. CUDA is an NVidia proprietary product and can be used only with NVidia hardware. OpenCL is an open model, it is supported by a number of hardware vendors such as AMD, Intel, IBM, including NVidia, and the code written using this model is highly portable between these devices.

Now the next question: how to use these powerful massively-parallel devices to accelerate existimg MPI/OpenMP codes? The simple approach — to use optimized for these devices numerical libraries — will not work: the amount of changes to the existing code will be large, and it is as difficult as entirely rewrite the code.

One of the possible ways out of this problem is to create a special infrastructure (possibly made as a system-level service), which would on the one side control the devices and regulate access to them and on the other provide a comfortable API for the developer. The main concept of such infrastructure is to minimize the changes which must be made to an existing MPI/OpenMP code to work with OpenCL model.

Our work has two main purposes: to create such infrastructure for heterogeneous computing and to accumulate numerical libraries with standard interfaces, written with this infrastructure. The priorities are: the best performance, scalability to all devices in hybrid machine, easy coding and automatic transfer-overlap when possible.

Further, we'll describe the first version of the infrastructue and three model methods of linear algebra: DGEMM — general dense matrix multiplication, DTRSM — a triangle system of linear equiations solver, and DGETRF — LU-decomposition.

SCHEDULER

Concept

We introduce two definitions: register and instruction.

Register is an arbitrary linear region in a device global memory. So, the global memory is divided into several registers, their number and size are bounded only with total amount of memory and some OpenCL restrictions (such as maximum allocation size and etc).

Instruction is an arbitrary compute kernel on a device. The whole device can be interpreted as a virtual machine which runs instructions on registers.

The devices are operated by a special scheduler. Scheduler initializes the available resources, and provides a developer with following abilities: to control the registers, to transfer data between host and devices, and to launch compute kernels on devices. These abilities are used by the means of control commands. Commands and dependencies between them form a dependency graph, which is a program for a scheduler.

At the moment, the scheduler works statically. It means, we firstly create the whole program, then execute it.

Commands

There are three types of commands: data transfer, kernel execution and auxiliary commands.

LD command reads a rectangular region from host memory and sends it into register. At the moment, it stores the lines sequentally into a small buffer and sends it to device via OpenCL call. If the device is connectd to a PCI-Express slot, memcpy and transfer are performed in parallel. LD command receives as arguments a register and region parameters.

ST command stores data from register to a rectangle region. The algorithm and arguments are the same as with LD command.

EXEC command (an instruction in our terminology) executes an arbitrary compute kernel on a device. It takes registers and scalar control parameters as arguments.

Auxiliary commands are presented with MARK (marker) and BAR (barrier) commands used for synchronization. MARK is an empty command, from which dependencies can be created, and BAR simply waits for all commands it depends of.

Queues

The scheduler consists of three queues: queue of LD commands, queue of ST commands and queue of EXEC commands. Auxiliary commands can be included in every queue. All three queues work in parallel, each queue works in a separate thread, the thread syncronization is performed by standard system-level objects and calls. EXEC queue works strictly in-order, and the next command is not launched until previous command is finished. LD and ST work asyncronously: commands are launched in order they were added, but the next command may start before previous finishes.

TESTING MACHINE

Tests were launched in Institute of System Resarch Russian Academy of Science (NIISI) on the following stand:

CPU 2 x AMD Opteron 6176

Memory 64 Gb DDR3

GPU 3 x AMD Radeon 6990

Operating system Ubuntu Linux, Windows 7

Video driver AMD Catalyst 12.3

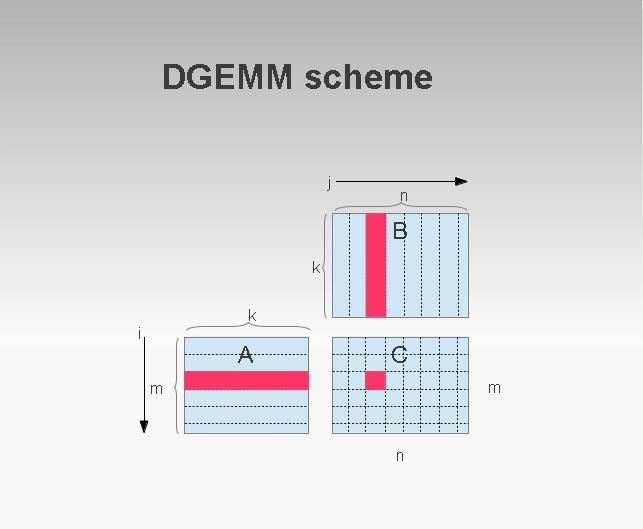

DGEMM

Task

Calculate C = alpha*A*B + beta*C, A, B, C — general dense matrices of an arbitrary size.

Logic

We use a standard blocking algorithm with two levels of blocking. In the host memory matrices are cut into big blocks (in regular case 5184x5184 elems in double precision, which takes approx. 205 MBytes of memory), which are transferred to a device and there are cut into smaller blocks. One thread computes a 6x6 block of the result matrix. The blocks on the host are taken consequentally.

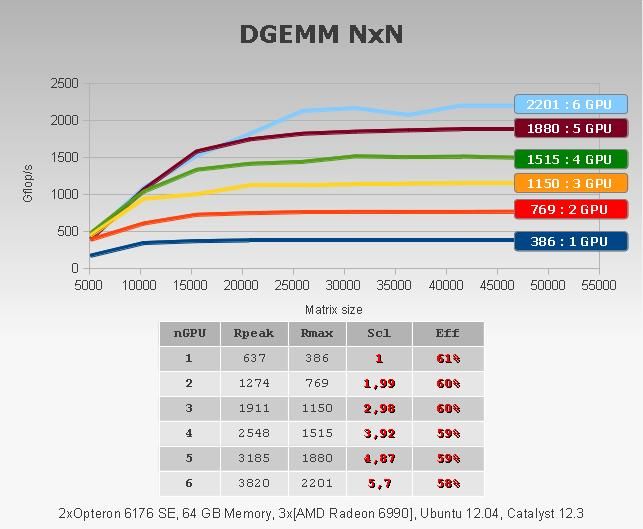

When there are several devices, we divide rows of resulting matrix equally between devices (one device multiplies part of rows of A on the whole matrix B), so algorithm is data-parallel.

In irregular case we use padding: fill matrices with additional zeros to get appropriate sizes.

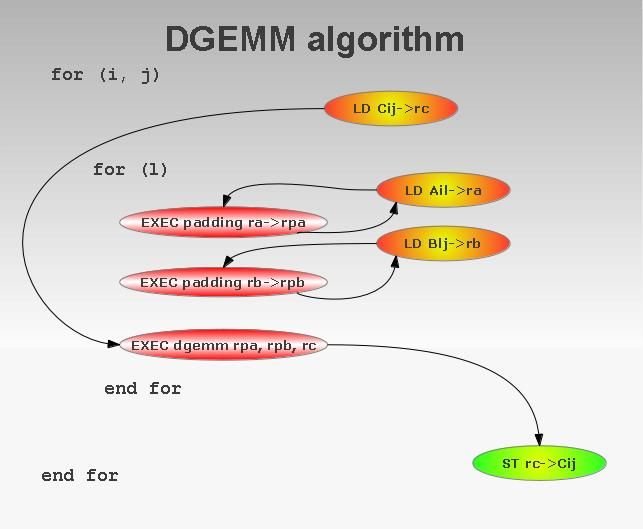

Scheduler program

Algorithm: first, we should divide matrix C into blocks, then write programs, which calculate each blocks, and then join them into one program. If a block from C fits into one register, a program for a scheduler which compute it, looks like this:

LD C → rc

FOR (k = 0; k < nblocks; k++)

LD Ak → ra

LD Bk → rb

EXEC pad ra → rpa

EXEC pad rb → rpb

EXEC dgemm rpa, rpb, rc

END FOR

ST rc → C

Padding depends on LD's, gemm depends on padding. The dependencies on register access are put automatically, EXEC queue is executed consequentally, so no additional dependencies should be done.

If OpenCL driver and a device suppor transfer overlapping, LD commands are hidden behind EXEC dgemm. To hide LD C and ST C we use double-buffering.

So, algorithm requires five registers to work and six to use double buffering.

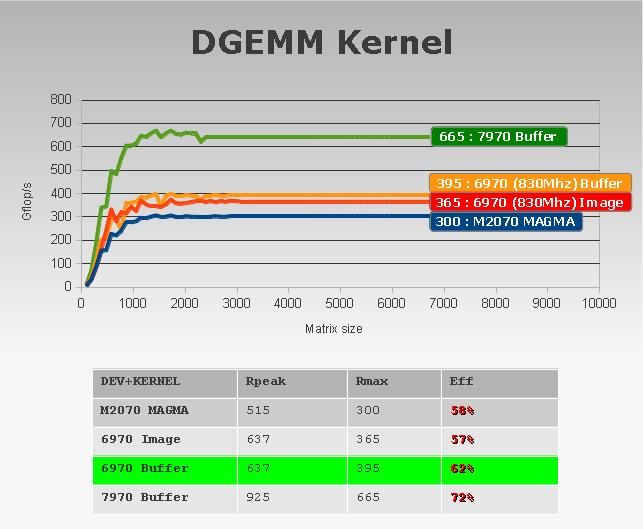

Computational kernel

One thread computes 6x6 block of C (it multiplies six rows on six columns): in a cycle we load 6x2 block from A and 2x6 block from B into registers and multiply them. LDS is not used. Performance: we have to load 24 doubles and make 72 mads on them, so theoretical peak is 75% of card peak. We achieve 63% of card peak on a kernel.

Tuning

There is a question: how to split matrix C into blocks? The goal was to get as smooth curve of performance as possible. Let's assume that matrix has m rows, and regular block has r rows. We'll make m/r-1 rows of blocks (each containing r rows), and the last (r+m mod r) rows we divide into two block rows with equal size. The columns are splitted in the same way. This partition provides the stable performance dependency from size.

Results

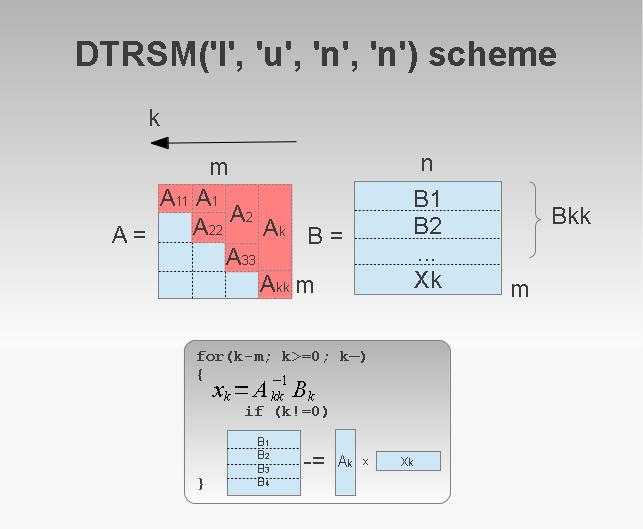

DTRSM

Task

DTRSM one of triangle systems: op(A)*X = B or X*op(A) = B. Result X is placed into B.

Logic

We use the parallel form of the back substitution part of the Gaussian elimination, applied to the blocked matrix. The algorithm is the following:

FOR (k = 0; k < nblocks; k++)

Inverse Akk

Calculate Xk. For example, if DTRSM parameters are LUNN, Xk = Akk^-1 * Bk

if (k != nblocks-1)

Update trailing matrix: Bkk -= Ak*Xk

END FOR

So we see, that DTRSM performance is asymptotically equial to DGEMM performance.

Triangle matrix Akk is inversed in a block way. Firstly, we use a standard algorithm to inverse diagonal 32x32 blocks in-place, and then apply the same method to inverse block matrix.

When we have several cards, we split matrix B equally between cards (data-parallel). All cards do the same matrix inversion, but it is not resource-consuming, so it doesn't affect the result performance.

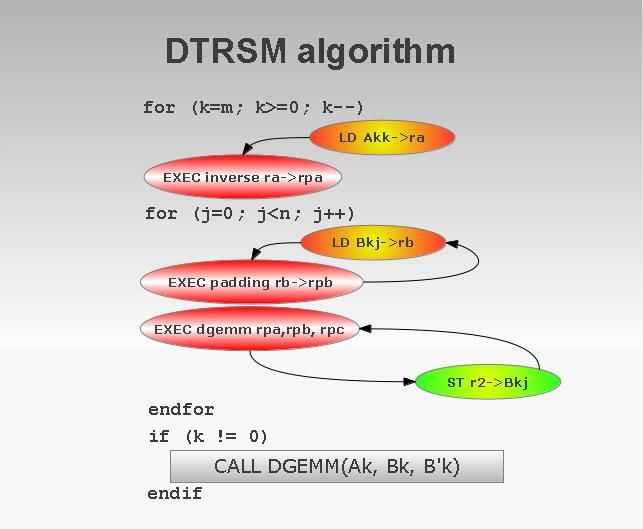

Scheduler program

Akk is inversed on the card, so the original matrix is not changed. Answer Xkl is written on the place of Bkl. In the first cycle we get the inversed block Akk, in the second — part of the answer Xk.

LD Akk → rpakk

EX inverse_diag_blocks rpakk

FOR (l = 0; l < nblocks; l++)

EX dgemm_step1 rpakk, l

EX dgemm_step2 rpakk, l

END FOR

FOR (l = 0; l < npages; l++)

LD Bl → rbl

EX pad rbl → rpbl

EX dgemm rpakk, rpbl, rc

ST rc → Bl

END FOR

Additional dependencies here are not nesessary too: all required dependencies will be set automatically.

If Akk is not the last block, we shoul update trailing matrix via DGEMM.

Computational kernel

Diagonal 32x32 blocks are inversed by a kernel with a standard algorithm (netlib). We use LDS and a «one thread — one row of 32 elements» principle. Other kernels — our dense dgemm.

For triangle matrix multiplication we use optimized for this matrix structure dgemm kernels. From asymptotic point of view, it doesn't matter, which kernel to use, but optimized kernels allow to achieve better performance on smaller matrices.

Results

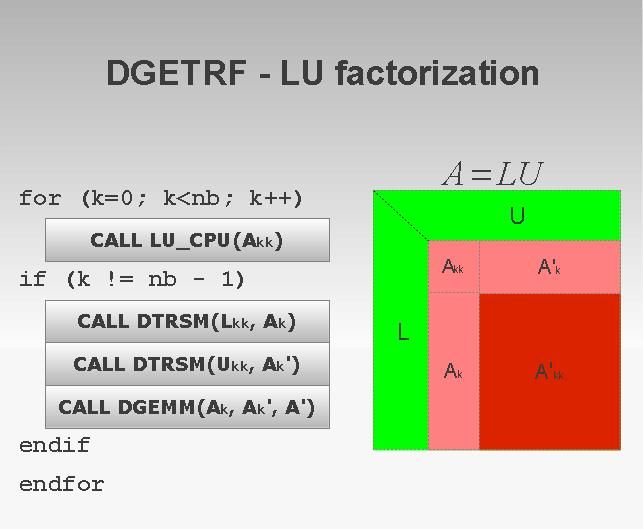

DGETRF

Task

DGETRF performs an LU decomposition of matrix A with partial pivoting: A = PLU.

Logic

As a model task we implemented a simpliest case of LU decomposition: decomposition without pivoting. Our goal was to investigate, whether our approach can be applied to such problems. This method can be used with the narrow class of matrices, but it's theoretical performance is almost the same as performance of gneral method.

Algorithm can be written as a sequience of standard calls:

FOR (k = 0; k < nblocks, k++)

CALL CPU_DGETRF (Akk)

IF (k != nblocks - 1)

CALL GPU_DTRSM(L, L, N, U, Akk, A')

CALL GPU_DTRSM(R, U, N, N, Akk, A1)

CALL GPU_DGEMM(A', A1, Ak)

END IF

END FOR

GPU calls can be done in parallel on several devices, CPU code works consequentially. Overall loss of such use of CPU increases with increasing number of GPUs. Asymptotically, the performance of the call is equal to DGEMM on stripes, but CPU code makes it to converge slowly.

There is a way to hide CPU code behind GPU calculation, but it makes algorithm more complex and is not required for our goal.

Algorithm with pivoting

An algorithm with string pivoting has two major differences from simple method: DGETRF_CPU is called not on a square region, but on a whole block column, and we should switch rows according to pivoting array. Asymptotically, these operations are much cheaper than DGEMM, so they can be hidden behind it.

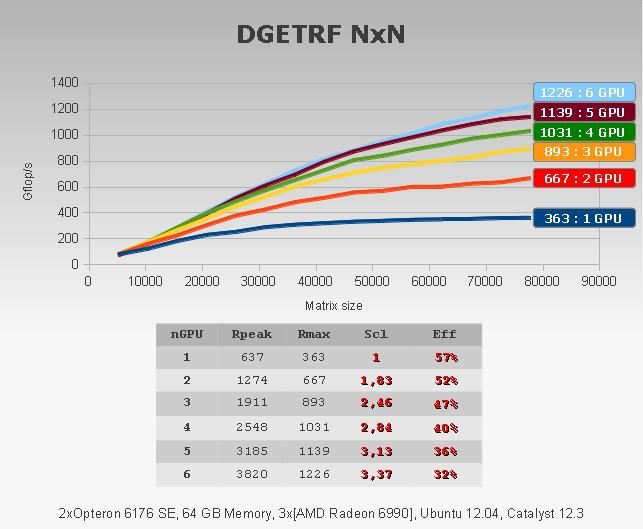

Results

CONCLUSION

In short, we can make several conclusions from our work: firstly, massively-parallel accelerators can be effectively applied for such tasks in mathematical modelling; secondly, infrastructure satisfies the goals, and it can be used to program hybrid nodes.

In future, we plan to develop this infrastructure on one hand, and accumulate numerical libraries written with it on the other. Now we are working on operations with sparse matrices: SpMV and numerical methods using it.

We send our best regards to AMD, and a few requests:

- Please allow us to allocate 100% of GPU space under linux!

- Please make OpenCL driver work with multi-GPU properly with any number of GPUs (at least, 8 or 16)!

- Please make WriteBufferRect work with transfer overlap!

- Please provide full and correct support of OpenCL 1.2!

From Russia with love,

Pavel, Anton.

Message was edited by: anton efremov

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Anton,

Will check this either today (or) coming week,

Thanks for your patience on this....

-

Bruhaspati

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Anton,

I got your program compiled with OpenBLAS.

But unfortunately, that system did not have double-precision capable GPU 😞

So, I will find another machine, move it out and test this.

That will most likely be this Friday...

Thanks for the detailed information, it was very useful..

btw..

Is there a way to run only single precision tests?

Thanks,

Best Regards,

Bruhaspati

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

We say hello to our dear heterogeneous computing friends! Today we will discuss the recent news from the battlefield, and unfortunately they are not the cheerful news.

For almost two years we have been obtaining all our results with a dozen old trusty AMD Radeons 7970 GHz Ed. New scientific plans leaded us to a choice of a hardware platform for the next 1-1,5 years. In addition, the new HAWAII cards seemed inspiring. Let's run the benchmarks, do some calculations and make the conclusions!

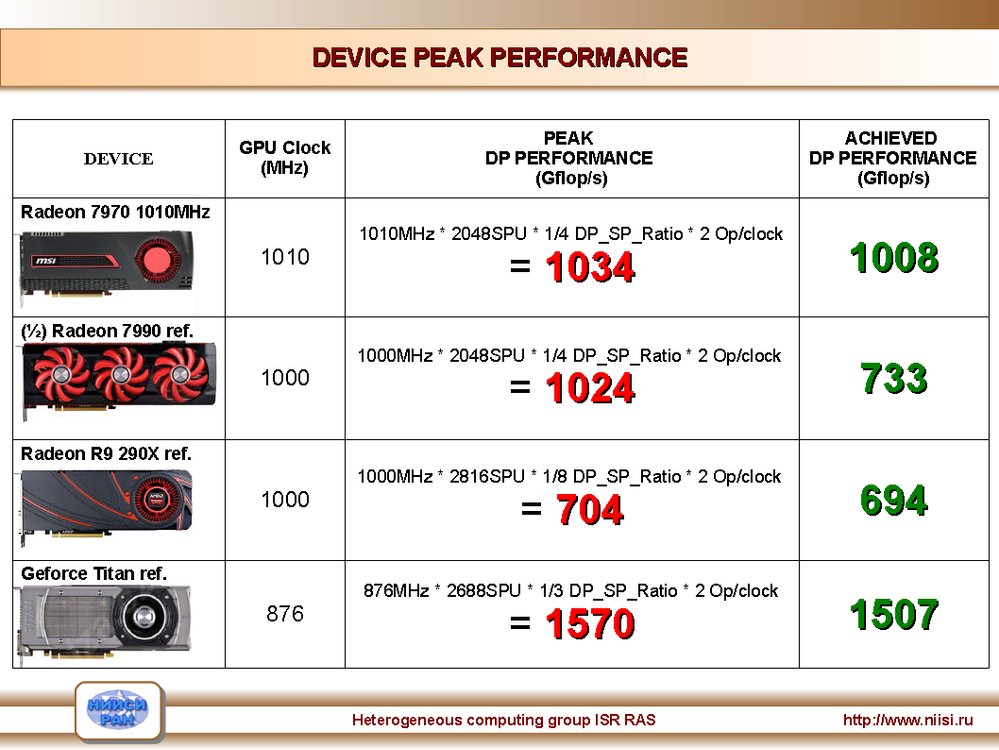

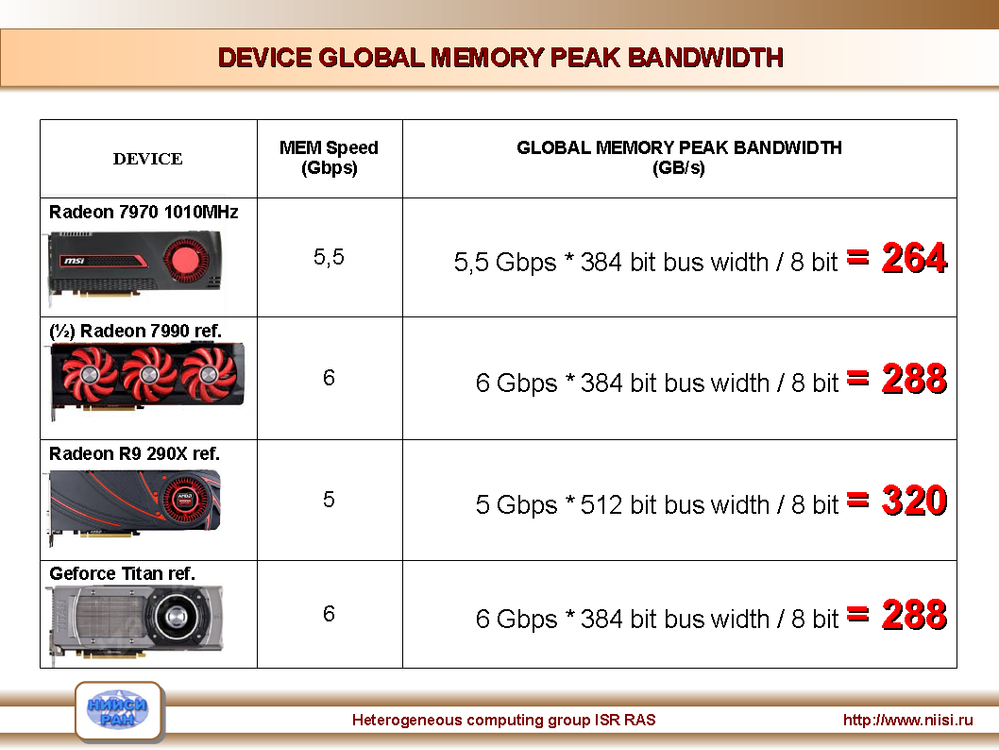

The soldiers of Applied Science are interested in at least two major properties of a device: peak performance in double precision and a global memory bandwidth.

We have tested the following accelerators (+prices in Moscow, written in $$$):

- Radeon 7970 Ghz Ed (1010MHz) (~350$)

- Radeon 7990 Reference (~650$)

- Radeon R9 290X Reference (~700$)

- Geforce TITAN Reference (~1000$)

All AMDs had 12.6 and 14.1b drivers, 331.38 for TITAN.

Here are the theoretical peaks of these devices and the best real performance (we used the synthetic OpenCL kernels from the clpeak project: https://github.com/krrishnarraj/clpeak😞

After that, we launched a mini-stress-test: 100 iterations with 10 kernel launches in each iteration. Resulting performance of an iteration is an average of 10 launches. That's how Marsellus Wallace the performance degradation looks like. Isn't it beautiful?

After looking at these diagrams, several questions arise.

1. It is unclear why one half of 7990 has 733 GFlop/s while 7970 has 1007 GFlop/s? As far as we know, these cards are equal, and they have the same frequencies and amount of SPUs.

2. Why does the kernel performance decrease on Tahiti as time goes by? This issue keeps appearing with all drivers after 12.6, and driver developers seem to do nothing about that.

3. Why the HAWAII card is so slow? The fact that it has DP/SP = 1/8 (compare with 1/4 on Tahiti) made us frustrated. In addition, we notice that our non-optimized DGEMM kernels get 650 out 704 GFlop/s, which is 92% - unrealistic number. So we hypothesize that the chip has full 1,4 TFlop/s performance, which is artificially (by software?) limited.

The next chart contains theoretical peaks of global memory bandwidth:

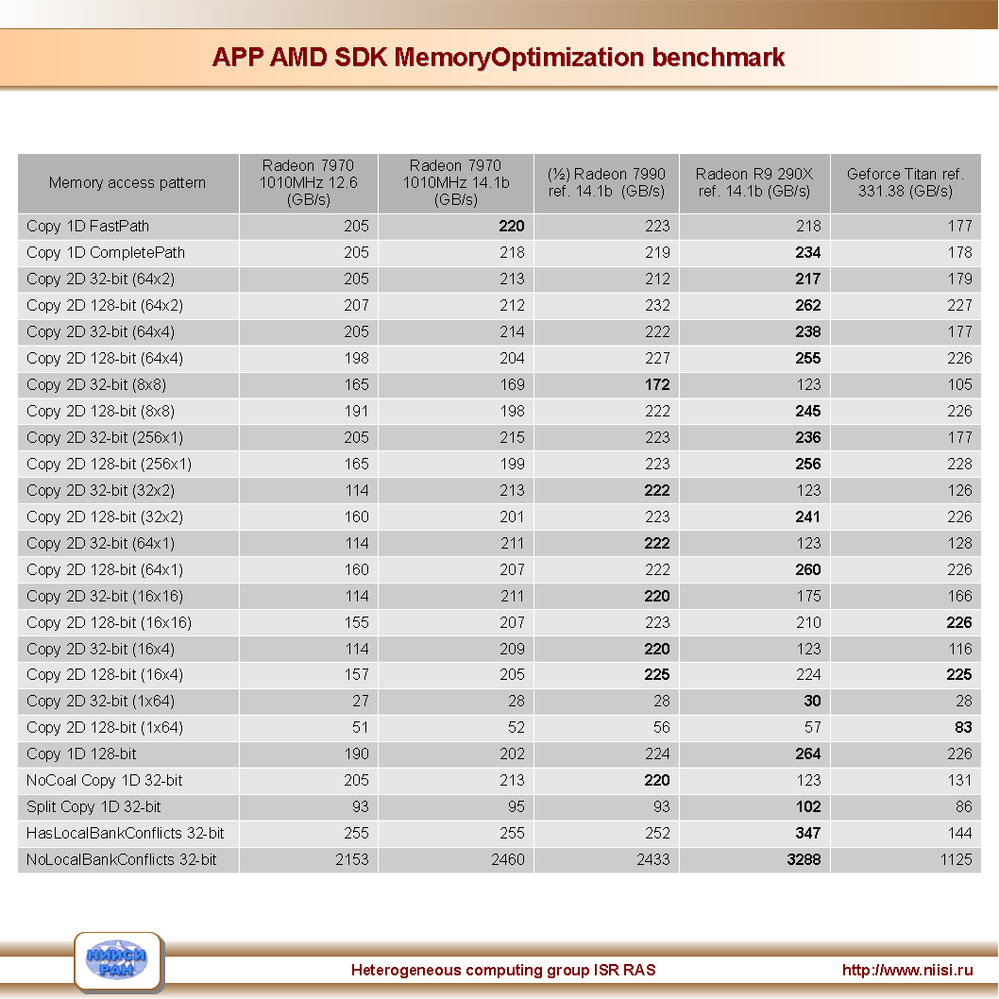

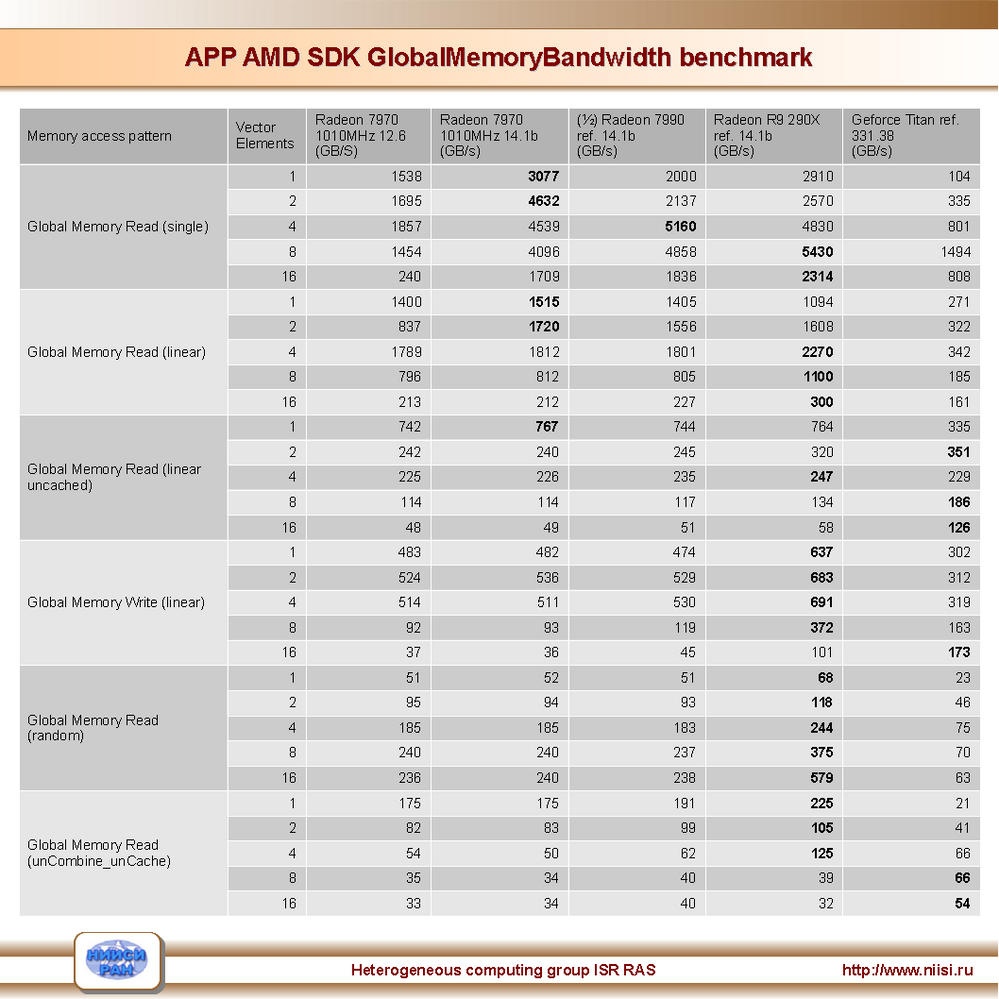

The real bandwidth of all devices was measured with GlobalMemoryBandwidth and MemoryOptimization tests from AMD APP SDK:

Important moments:

1. In some aspects AMD drivers become better and better, and these improvements greatly affect the overall performance.

2. All AMD GPUs demonstrate an exсellent memory subsystem work.

Unfortunately, we have to state that HAWAII is less suitable for scientific computations than Tahiti, and the time of cheap GPGPUs for scientists has ended.

As we think, up to this point AMD hardware was much better than hardware from NVidia. On the other hand, these advantages were neutralized by unstable drivers. At the moment, the AMD drivers became better, but there are some old bugs (like performance degradation) and some new ones, so some codes which worked with 12.6, don't work on the new drivers.

We have also tested the FirePro card (based on Tahiti architecture), and the situation was the same: unstable drivers and a lot of difficulties with multi-GPU systems.

So, the question is: what does AMD plan to do in the computational sector? Previously we had a cheap and fast hardware, which could be used despite the awful drivers. At the moment the drivers are still not fully operational, and soon the FirePro cards will become very expensive (who will buy them?..).

On the other hand, in some performance metrics NVidia devices are not as good as AMD ones, but they have the brilliant software support. We have tested a lot of different NVidia GPUs (GeForce and Teslas) and never encountered serious problems.

Almost all computational problems we investigate are memory-bound, and in this aspect all these GPUs are approximately equal. In the real launches AMD 7970s are usually better than TITANs, so we decided to stay on 7970. But we expect that NVidia will start selling something more fast in a year or so.

There is an opinion (not only ours), that if AMD will not change their slighting attitude to GPGPU sphere, a lot of scientific researches will start choosing NVidia.

From Russia with love,

Pavel,

Anton.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks Anton, I just wanted to show my appreciation for your work and for reporting your findings.

My opencl application is heavily dependent on double precision performance.

A lot of people out there have the attitude that you only need double precision for a few scientific problems.

The fact that every major computer language uses double precision for calculations and float is just a storage format eludes them. Ditto Excel using 16 digits of precision.

It would be great if someone could write a paper as to why double precision is so important for all uses.

Someone needs to invent a bitcoin that depends on double precision (or higher..) performance. (finding locations of a certain pattern in a mandlebot?)

Perhaps this would raise the desire for high DP performance in the general user base.