
No significant performance improvement using pinned host memory

Hi,

I wrote a program that uses both pinned host memory (CL_MEM_ALLOC_HOST_PTR) and pageable memory (default flags).

In the pageable version the buffers are created with the default flags, and I use clEnqueueWriteBuffer/clEnqueueReadBuffer to transfer data to and from a discrete GPU.

My questions are:

1. Why is there no significant improvement in transfer rate when I use pinned (non-pageable) host memory? It should be faster than the standard pageable path, shouldn't it?

2. The transfer rate with pinned host memory gets worse when I call clEnqueueCopyBuffer for the second time. I use device buffers (not pinned memory) as kernel arguments, so why does the second write transfer become slower?

Here is the code for pinned host memory:

// Allocate device Memory For Input And Output

devA = clCreateBuffer(context, CL_MEM_READ_ONLY , sizeof(cl_float)*size*size, 0, &err);

devB = clCreateBuffer(context, CL_MEM_READ_ONLY , sizeof(cl_float)*size*size, 0, &err);

devC = clCreateBuffer(context, CL_MEM_WRITE_ONLY , sizeof(cl_float)*size*size, 0, &err);

// Allocate pinned Memory For Input And Output

pinned_A = clCreateBuffer(context, CL_MEM_ALLOC_HOST_PTR , sizeof(cl_float)*size*size, 0, &err);

pinned_B = clCreateBuffer(context, CL_MEM_ALLOC_HOST_PTR, sizeof(cl_float)*size*size, 0, &err);

pinned_C = clCreateBuffer(context, CL_MEM_ALLOC_HOST_PTR , sizeof(cl_float)*size*size, 0, &err);

cl_float * mapPtrA = (cl_float *)clEnqueueMapBuffer( queue, pinned_A, CL_TRUE, CL_MAP_WRITE, 0, sizeof(cl_float)*size*size, 0, NULL, NULL, NULL);

cl_float * mapPtrB = (cl_float *)clEnqueueMapBuffer( queue, pinned_B, CL_TRUE, CL_MAP_WRITE, 0, sizeof(cl_float)*size*size, 0, NULL, NULL, NULL);

//Fill matrix

fillMatrix(mapPtrA,size);

fillMatrix(mapPtrB,size);

clEnqueueUnmapMemObject(queue, pinned_A, mapPtrA, 0, NULL, NULL);

clEnqueueUnmapMemObject(queue, pinned_B, mapPtrB, 0, NULL, NULL);

clEnqueueCopyBuffer(queue, pinned_A, devA, 0, 0,sizeof(cl_float)*size*size, 0, NULL, NULL);

clEnqueueCopyBuffer(queue, pinned_B, devB, 0, 0,sizeof(cl_float)*size*size, 0, NULL, NULL);

MatrixMul(devA, devB, devC, size);

clEnqueueCopyBuffer(queue, devC, pinned_C, 0, 0,sizeof(cl_float)*size*size, 0, NULL, NULL);

cl_float * mapPtrC = (cl_float*)clEnqueueMapBuffer( queue, pinned_C, CL_TRUE, CL_MAP_READ, 0, sizeof(cl_float)*size*size, 0, NULL, NULL, NULL);

//memcpy(matrixC, mapPtrC, sizeof(cl_float)*size*size);

clEnqueueUnmapMemObject(queue, pinned_C, mapPtrC, 0, NULL, NULL);


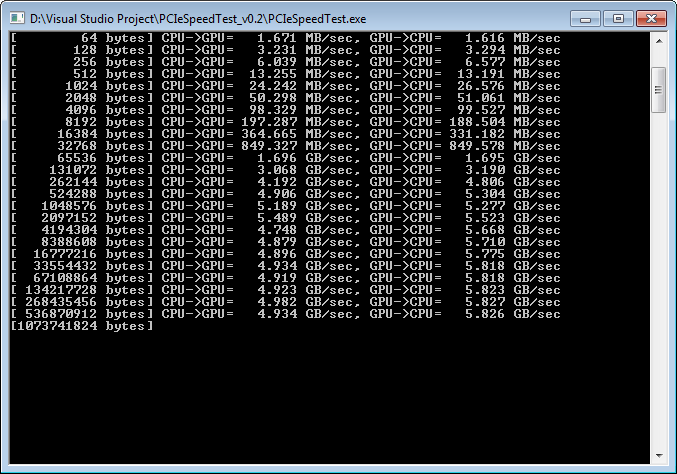

How do you measure the transfer times? PCIe 2.0 has a practical limit of around 5-6 GB/s, so if you are already reaching that speed, pinned memory will not make it faster. Also, pinned memory is limited in size, so it is possible that the second buffer can't be pinned and the OpenCL runtime falls back to a slower path.
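To make the measurement question concrete, here is a minimal sketch of turning one transfer's timestamps into a rate that can be compared with the 5-6 GB/s figure. The helper name `transfer_gbps` is hypothetical and not part of OpenCL. It assumes the timestamps are in nanoseconds, which is the unit `clGetEventProfilingInfo` reports for `CL_PROFILING_COMMAND_START` and `CL_PROFILING_COMMAND_END` when the queue was created with `CL_QUEUE_PROFILING_ENABLE`. The thread itself reads the numbers from AMD APP Profiler, so this is only one possible way to obtain them.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper (not an OpenCL function).
 * Converts a byte count and a start/end timestamp pair into GB/s,
 * with 1 GB = 1e9 bytes. Timestamps are assumed to be in nanoseconds.
 * Returns 0.0 for an empty or inverted interval. */
double transfer_gbps(size_t bytes, uint64_t start_ns, uint64_t end_ns)
{
    if (end_ns <= start_ns)
        return 0.0;
    /* One byte per nanosecond equals 1e9 bytes per second, i.e. 1 GB/s. */
    return (double)bytes / (double)(end_ns - start_ns);
}
```

For scale: a buffer of 352.6 million bytes that takes about 70.5 ms to copy works out to roughly 5 GB/s, which is already in the practical PCIe 2.0 range mentioned above.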


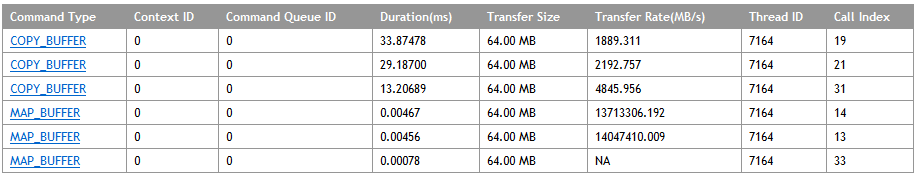

You're right: when the size is big (352,6 MB per buffer), the second copy is slow. The weird thing is that the third copy (the read) becomes fast again with pinned transfers.

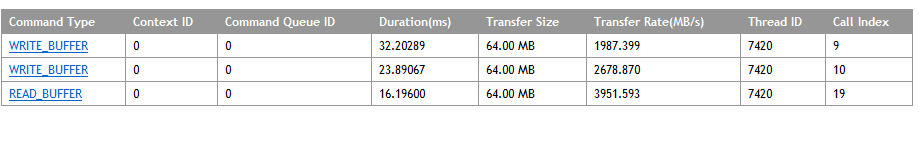

When I try this with standard buffers (pageable memory) and a buffer size of 352,6 MB, the result is "weird" in the same way as in BufferBandwidth.

The transfer rate increases with each transfer of host memory (enqueueReadBuffer > enqueueWriteBuffer2 > enqueueWriteBuffer1). Or is that normal?

When I compare the pinned transfers with the pageable ones, the results are almost the same.

I get the transfer rates from AMD APP Profiler, and I execute each transfer just ONCE.

I have seen in some discussions that people run each transfer and kernel in a loop, as the BufferBandwidth example does (for example, each transfer is executed 20 times).

Should I iterate too, and how do I do it correctly? I have read BufferBandwidth but I am still confused about how to implement it in my code.

Can you give me an example based on my code?


And which buffer does that third transfer use? If it is the first buffer, then of course it will be fast again. The BufferBandwidth example iterates in order to measure an average for benchmarking purposes; of course that is not a normal, desired code path. Another factor to consider is that allocating and pinning a buffer takes some time, so the first use of a buffer can be slower because it must be allocated first.
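The two points above (averaging over iterations, and the first use of a buffer paying for allocation and pinning) can be sketched as a small helper that averages repeated transfers while skipping the first few as warm-up. This is not the BufferBandwidth sample's code. The name `average_gbps` and its parameters are hypothetical, and it assumes you have already collected one duration in nanoseconds per repetition of the same transfer.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper (not taken from the BufferBandwidth sample).
 * Average bandwidth in GB/s (1 GB = 1e9 bytes) over repeated transfers
 * of `bytes` each. elapsed_ns[i] is the duration of repetition i in
 * nanoseconds. The first `warmup` repetitions are ignored so that
 * one-time allocation and pinning cost does not skew the average.
 * Returns 0.0 if nothing is left to average or the total time is zero. */
double average_gbps(size_t bytes, const uint64_t *elapsed_ns,
                    size_t count, size_t warmup)
{
    if (warmup >= count)
        return 0.0;

    uint64_t total_ns = 0;
    for (size_t i = warmup; i < count; ++i)
        total_ns += elapsed_ns[i];

    if (total_ns == 0)
        return 0.0;

    size_t measured = count - warmup;
    /* Total bytes moved divided by total nanoseconds gives GB/s. */
    return (double)bytes * (double)measured / (double)total_ns;
}
```

Comparing the result with `warmup` set to 0 and to 1 is one way to see how much of a slow first transfer is setup cost rather than bus bandwidth.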


Thanks for the reply,

The third transfer is between the third device buffer and a third pinned buffer, which receives the result.

I fill the first and second pinned buffers with data and copy them to the first and second device buffers.

The kernel is executed on the first and second device buffers and stores its result in the third device buffer.

The third device buffer is then copied to a new third pinned buffer (not filled before), which is read by the host.

So, how can I increase the speed without iterating (since that is not normal for my code)?


I collected the data with AMD APP Profiler and CodeXL, but the results are the same for standard pageable memory (clEnqueueReadBuffer/clEnqueueWriteBuffer with default flags for ALL buffers) and for pinned memory (CL_MEM_ALLOC_HOST_PTR + map + copy).

I already use clFlush too, for synchronization, but pinned memory never reaches 4-5 GB/s.

I have already tested the PCIe bandwidth with a PCIe speed test. Here is the result:

Here is the result of test using pinned and standard buffer: