Archives Discussions

- AMD Community

- Communities

- Developers

- Devgurus Archives

- Archives Discussions

- Re: clWaitForEvents behaviour

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

clWaitForEvents behaviour

Hi

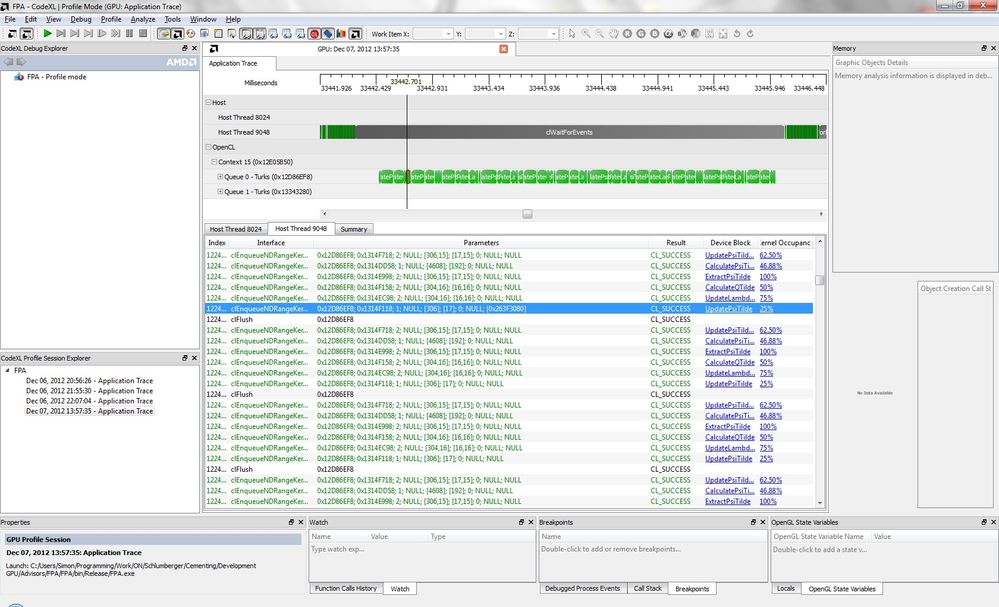

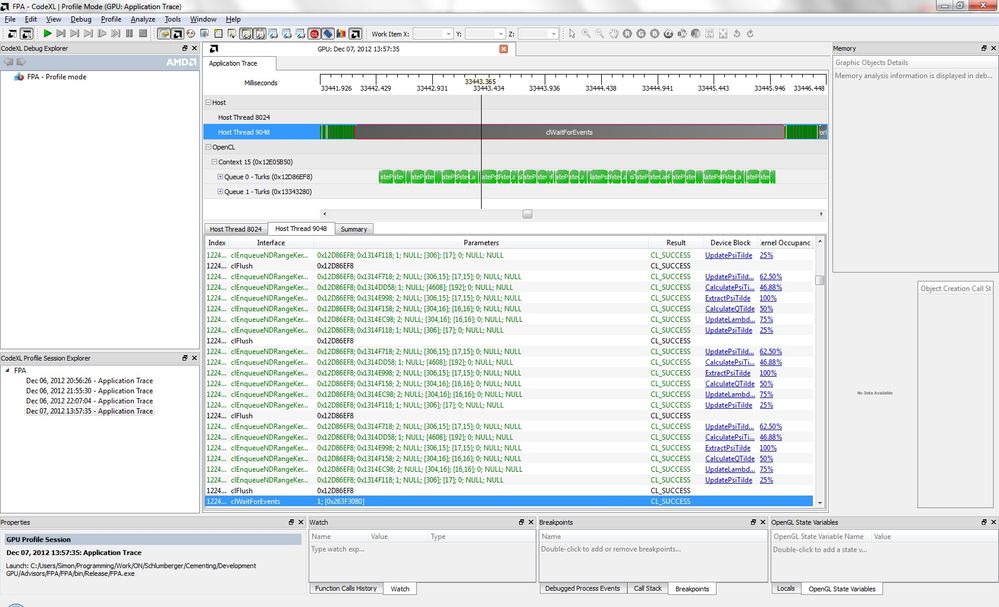

I am experiencing unexpected behavior when using clWaitForEvents. Now this may be related to using CodeXL to examine the operation of my code however I wanted to seek some advice. As I understand it clWaitForEvents should return once the events passed as its parameters are complete. In my code I am running a set of OpenCL kernels in a loop. I am creating an event for the final kernel in the loop and then queuing a number of additional sets of kernels (in this case ~10 in total with the event on the last kernel of the first set). However as can be seen the following screen grabs from CodeXL clWaitForEvents is demonstrating the same behavior as clFinish and waiting for all the queued kernels to complete.

Here I have highlighted the kernel on which the event is set, CodeXL shows it completing a long time before the clWaitForEvents on the event attached to it.

Here we see the call to clWaitForEvents for the event shown in the previous image. However as can be seen the call to clWaitForEvents seems to behave like clFinish and wait for all queued commands to complete.

Is this the expected behavior, an artifact of viewing the timeline in CodeXL or something else?

Many thanks.

Simon

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The following method gives the same behaviour of clWaitforEvents on AMD opencl as that on nVidia, using driver 9.10.8.0

The runtime submits the [A B C D E] as a single batch and will signal C when [A B C D E] is complete. But if the app does:

Enqueue A

Enqueue B

Enqueue C

Flush

Enqueue D

Enqueue E

WaitForEvent(C)

Then the runtime will submit [A B C] and signal C before dispatching D.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Would you mind uploading a small test code?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I can reproduce the problem. It is said that using the profiler disables kernel overlapping and is not always reliable in some discussions on this forum. So it could be an artifact. So maybe you can manually insert timers in your code.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for taking a look at this.

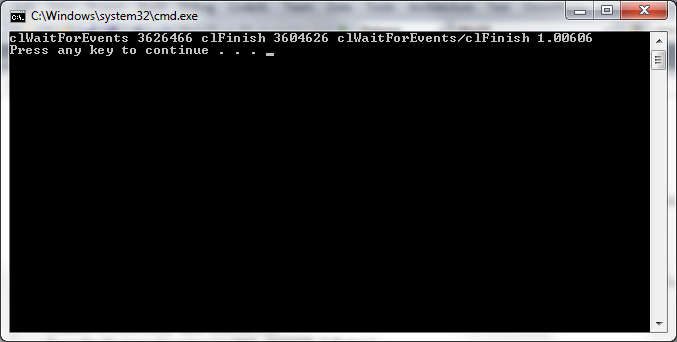

I agree, one of my concerns was that this could be an issue of viewing the timeline through CodeXL with profiling enabled. I have modified the original code I uploaded somewhat to hopefully provide better data:

- I am now using two kernels to avoid the possibility that the issue is related to running the same kernel object multiple times. The event waited for is now created on a "setup" kernel which is run once before the "work" kernel is queued multiple times from within a loop.

- I have added timers before the queue of the "setup" kernel and after the call to the blocking function, either clWaitForEvents or clFinish.

Running this code either in CodeXL or standalone produces the same result. The time taken until the blocking call returns is identical. The event set on the first kernel executed does not appear to be marked complete until the commandqueue is empty.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I slightly modified the kernel in the second attachment by adding the following block to make the kernel execution time longer. The ration is still around 1. So I believe it is not an artifact of codeXL.

-------

for (int j =0; j<100000;j++)

{

for (int i=0; i <42949672; ++i)

{

for (int jjj=0; jjj<100000;jjj++)

{

for (int iii=0; iii <42949672; ++iii)

{

for (int jj =0; jj<100000;jj++)

{

for (int ii=0; ii <42949672; ++ii)

{

float a = i/1.02;

}

}

}

}

}

}

________________________________

p.s.: http://devgurus.amd.com/thread/159601

This should be helpful.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

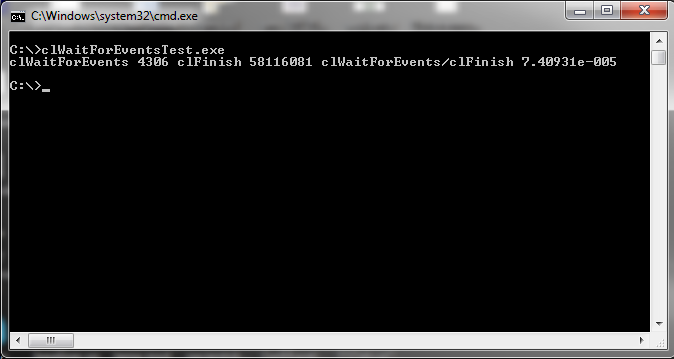

OK well this is probably something I should have tried earlier, however I have now run the test code uploaded above on a colleague's machine which has NVIDIA hardware. The results are in the image below and show the expected behaviour with clWaitForEvents returning much sooner than clFinish.

As such I am beginning to think that this may be an issue with the implementation of clWaitForEvents I wonder if/how this can be brought to the attention of the appropriate people?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I am forwarding your message to the APPROPRIATE people...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I tried this in my own code using only a simple timer and see the same results. But looking at it, I think the problem may be with the assumptions, not with OpenCL or the analyzer.

A simple way to see what is happening is assume 10 kernels, each takes 1 second to execute, en-queue and other functions are take 0.0 seconds (instantaneous) Following your first code posting, start at the beginning with iter=intrsync = 0; You enqueue kernel 0 with an event followed by 9 kernels with no event. The que then looks like this, note the elapsed time on the right

QUE action/content TIME

add K(0) - sync event 0.00 kernel 0

add K(1).. K(9) 0.00

wait for K(0) 0.00 begin wait

clear sync event 1.00 done, clear event

add K(10) - sync event 1.00 second sync

add K(11) .. K(19) 1.00

** at this point the que has K(1) - K(19), and now wait for K(9) to finish

wait for K(10) 1.00 begin wait

clear event 11.00 done, clear event

add K(20) - event 11.00

add K(21) .. K (29) 11.00

wait for K(19) 11.00

clear event 21.00 done, clear event

See the pattern? The que always has from 10 to 19 kernels and waiting for the next event will always take the maximum cycle time of 10 seconds, or 10 kernels. Basically wait_for_events and clFinish are going to look about the same. I cant' quite read the detail in the Profiler images above, but I suspect they may be correct, after en-queuing the sync event, it must wait for an entire batch of kernels to execute

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The pattern you've described is actually what I expect to see. What I am seeing is more like:

QUE action/content TIME

add K(0) - sync event 0.00 kernel 0

add K(1).. K(9) 0.00

wait for K(0) 0.00 begin wait

clear sync event 10.00 done, clear event

add K(10) - sync event 10.00 second sync

add K(11) .. K(19) 10.00

** at this point the que has K(1) - K(19), and now wait for K(9) to finish

wait for K(10) 10.00 begin wait

clear event 20.00 done, clear event

add K(20) - event 20.00

add K(21) .. K (29) 20.00

wait for K(19) 20.00

clear event 30.00 done, clear event

Now in the case where we are looping round waiting and queuing there will be no difference to the length of time that clWaitForEvents waits, however instead of having 10 kernels left to process when the clWaitForEvents function returns there are none left in the queue so the GPU stalls while I enqueue more items.

If you look at the second test code uploaded I have created a simpler test. In this test I simply enqueue a large number of kernels and then wait on the first one. The timeline looks like this:

QUE action/content TIME

add K(0) - sync event 0.00 kernel 0

add K(1).. K(1000) 0.00

wait for K(0) 0.00 begin wait

clear sync event 1000.00 done, clear event

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

simonimpey wrote:

The pattern you've described is actually what I expect to see. What I am seeing is more like:...............

I now see exactly what you see. For sure, any time the event completes, the que is completely empty. To test this without a profiler, I inserted the following just after the clWaitForEvent()

settimer(); clFinish(); gettimer();

The timer reports nothing, zero time. If there were any kernels in the que, clFinish must wait for them to finish.

The problem also occurs with clGetEventInfo(event, CL_EVENT_COMMAND_EXECUTION_STATUS,...); which reports only that the event is waiting until the whole que has finished. It's as if the event status is not visible until the que completes.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The following method gives the same behaviour of clWaitforEvents on AMD opencl as that on nVidia, using driver 9.10.8.0

The runtime submits the [A B C D E] as a single batch and will signal C when [A B C D E] is complete. But if the app does:

Enqueue A

Enqueue B

Enqueue C

Flush

Enqueue D

Enqueue E

WaitForEvent(C)

Then the runtime will submit [A B C] and signal C before dispatching D.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The OpenCL runtime will batch up the processing of multiple enqueued kernels to minimize overheads.

clFlush could be used to hint the runtime that it reaches the end of batch so it could start the batch processing.

In the example you've given, you could add a clFlush after you've acquired a sync event and that should give you the behavior you want.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

from specification:

To use event objects that refer to commands enqueued in a command-queue as event objects to

wait on by commands enqueued in a different command-queue, the application must call a

clFlush or any blocking commands that perform an implicit flush of the command-queue where

the commands that refer to these event objects are enqueued.

IIRC i read somewhere that you must call clFlush before getting event status.