Archives Discussions

- AMD Community

- Communities

- Developers

- Devgurus Archives

- Archives Discussions

- Re: Concurrent kernel execution between CPU and GP...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Concurrent kernel execution between CPU and GPU

Hi. I am trying to verify simple heterogenous computing using a CPU and a GPU using OpenCL. The Kernel function is a simple BLAS level 1 saxpy (single-precision, scalar multplication and vector addition) algorithm, and I assigned "n" numbers of elements to the CPU and "nn-n" to the GPU, where "nn" is the vector length. Moving n variable, I wanted to figure out a splitting point "n" that minimizes whole computational time.

In order to get the ideal splitting point, OpenCL should guarantee a concurrency under heterogeneous system. So I tried to verify that concurrency by testing a simple program as follows.

CPerfCounter t1;

...

t1.Reset();

t1.Start();

// Enqueue to write the target vectors x and y to GPU Global memory.

clEnqueueWriteBuffer(cqCommandQueue_gpu, cl_x, CL_FALSE, 0, sizeof(FLOAT)*(nn-n), x, 0, NULL, NULL);

clEnqueueWriteBuffer(cqCommandQueue_gpu, cl_y, CL_FALSE, 0, sizeof(FLOAT)*(nn-n), y, 0, NULL, NULL);

// Enqueue NDRange to CPU

err = clEnqueueNDRangeKernel(cqCommandQueue_cpu, ckKernel[1], 1, NULL, &GWS2, &LWS2, 0, NULL, NULL);

// Enqueue NDRange to GPU

clEnqueueNDRangeKernel(cqCommandQueue_gpu, ckKernel[0], 1, NULL, &GWS, &LWS, 0, NULL, NULL);

// Enqueue to read the result vector to Host memory

clEnqueueReadBuffer(cqCommandQueue_gpu, cl_y, CL_FALSE, 0, sizeof(FLOAT)*(nn-n), z, 0, NULL, NULL);

//clFlush(cqCommandQueue_gpu);

clFlush(cqCommandQueue_cpu);

clFinish(cqCommandQueue_gpu);

clFinish(cqCommandQueue_cpu);

t1.Stop();

I intentionally remove "clFlush(cqCommandQueue_gpu)" since there were no big differences about results. Here is profile information using AMD Profiler. I found out some strang results.

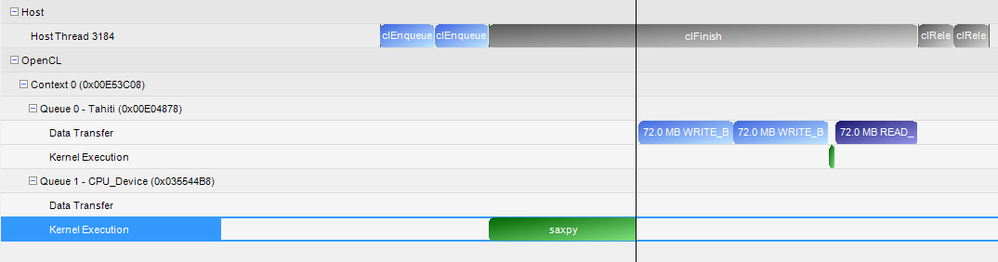

Case1. Not executed in parallel

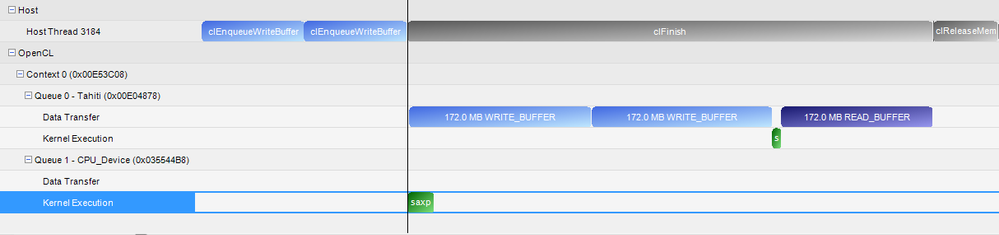

Case2. Working properly

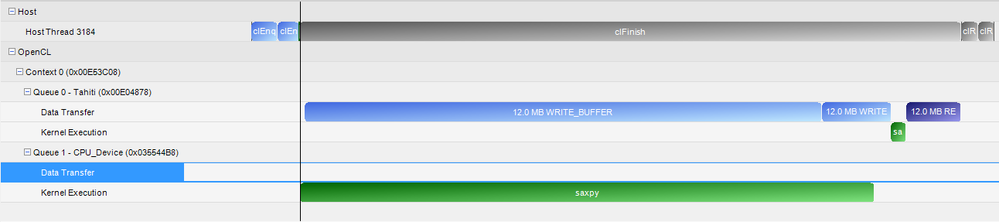

Case3. Strangely PCI express holds data while CPU computes

How can I analyze these results?

Thanks in advance.

------------- My information

Windows 7 64-bit, VS 2010

CPU : FX 8120

GPU : Radeon 7970

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

2 kernels updating same cl_mem object at the same time will not work properly.

Refer to Appendix A of the OpenCL spec.

Instead use sub-buffers (clCreateSubBuffer..). Assign 1 SB to CPU and other to GPU and they can work in parallel...and then read back the mother buffer....All will be well.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks.

I have already assigned indepent buffers for each kernel. Above profile was done by independent buffers.

// Create buffer objects

cl_x = clCreateBuffer(cxContext, CL_MEM_READ_ONLY, sizeof(FLOAT)*(nn-n), NULL, &err);

cl_y = clCreateBuffer(cxContext, CL_MEM_READ_WRITE, sizeof(FLOAT)*(nn-n), NULL, &err);

CheckErr(err);

cl_xc = clCreateBuffer(cxContext, CL_MEM_READ_ONLY | CL_MEM_USE_HOST_PTR, sizeof(FLOAT)*n, (void *)xc, &err);

cl_yc = clCreateBuffer(cxContext, CL_MEM_READ_WRITE | CL_MEM_USE_HOST_PTR, sizeof(FLOAT)*n, (void *)yc, &err);

CheckErr(err);

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

clEnqueueWriteBuffer(cqCommandQueue_gpu, cl_x, CL_FALSE, 0, sizeof(FLOAT)*(nn-n), x, 0, NULL, NULL);

clEnqueueWriteBuffer(cqCommandQueue_gpu, cl_y, CL_FALSE, 0, sizeof(FLOAT)*(nn-n), y, 0, NULL, NULL);

In the code above , Is there a reason why you use the GPU command queue for both the writes?

Also, I hope you have set the arguments correctly as well. You may want to re-check.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you for replying my discussion. This will be great help to me.

Saxpy algorithm needs to have two vectors.

y = a*x + y, where "a" is a scalar variable and x and y are vector with given length. I assigned it as "n" for the CPU and "nn-n" for the GPU, so totally I want to calculate saxpy operation with "nn" vector length. Each device has two vectors x and y, therefore GPU writes two buffers x and y with length "nn-n" which are independent buffers from CPU vectors.

I have tested CPU only and GPU only program, and worked well. This is my first time to try heterogeneous computing with OpenCL. But this example is very easy, I expected almost ideal results...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Oh okay.. Now I understand...

from your original post, it is not clear:

1) Are you receiving wrong results?

2) Are you receiving correct results but not expected overlapped execution?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I attatched my source codes at the original posting.

1) Yes, I got correct results.

2) Yes, exactly. I expected overlapped executions.

I fixed nn = 50331648(=48K) where n varies from 1K to 47K. Here are timing results with CPU only and GPU only.

n nn-n CPU time(sec) GPU time(sec)

1048576 49283072 0.0009259 0.3113512

2097152 48234496 0.0019265 0.3030655

3145728 47185920 0.0027454 0.2960925

4194304 46137344 0.0043816 0.2872379

5242880 45088768 0.0046060 0.2832044

6291456 44040192 0.0055247 0.2767915

7340032 42991616 0.0063134 0.2695790

8388608 41943040 0.0076503 0.2652252

9437184 40894464 0.0082551 0.2594063

10485760 39845888 0.0092112 0.2530073

11534336 38797312 0.0101195 0.2437295

12582912 37748736 0.0109935 0.2392208

13631488 36700160 0.0119362 0.2346292

14680064 35651584 0.0129057 0.2290327

15728640 34603008 0.0137355 0.2227570

16777216 33554432 0.0158361 0.2124377

17825792 32505856 0.0157124 0.2050176

18874368 31457280 0.0165814 0.1993729

19922944 30408704 0.0173361 0.1932635

20971520 29360128 0.0188006 0.1867926

22020096 28311552 0.0191749 0.1798617

23068672 27262976 0.0199704 0.1736680

24117248 26214400 0.0211467 0.1651932

25165824 25165824 0.0222448 0.1622620

26214400 24117248 0.0225625 0.1567150

27262976 23068672 0.0232405 0.1501289

28311552 22020096 0.0247861 0.1425812

29360128 20971520 0.0258827 0.1376894

30408704 19922944 0.0258399 0.1315505

31457280 18874368 0.0267882 0.1256371

32505856 17825792 0.0287400 0.1184132

33554432 16777216 0.0283369 0.1076324

34603008 15728640 0.0290841 0.0996051

35651584 14680064 0.0298546 0.0935058

36700160 13631488 0.0308073 0.0863872

37748736 12582912 0.0324147 0.0819703

38797312 11534336 0.0329809 0.0750496

39845888 10485760 0.0332961 0.0686574

40894464 9437184 0.0340249 0.0611951

41943040 8388608 0.0349598 0.0551111

42991616 7340032 0.0356537 0.0483755

44040192 6291456 0.0364748 0.0426752

45088768 5242880 0.0371984 0.0357001

46137344 4194304 0.0388203 0.0290992

47185920 3145728 0.0388292 0.0225611

48234496 2097152 0.0400297 0.0168223

49283072 1048576 0.0415698 0.0123415

I want get a result of hetergeneous computing as max(CPUtime(n),GPUtime(nn-n)). But above posted program results in nothing but (CPUtime(n)+GPUtime(nn-n)). This is why I raised a question and I showed the profiling figures.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Okay... Instead of clFinish(), Can you try clWaitForEvents(). This way, you can wait for both CPU and GPU in 1 single API. clFinish() waits for only one to complete. And yes, clFlush() both queues and the wait on the 2 kernel completion events...HTH

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

btw.. I just checked the code. Please disable profiling in the CQ (CL_QUEUE_PROFILING_ENABLE). It can slow down lot of things and disable some code paths in the runtime....

Try measuring time using some high resolution timers (like QueryPerformanceCounter() API in windows (or) clock_gettime() in Linux using the librt.a...)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Timer was also a problem to me because I tried to use gettimeofday() or clock_gettime() functions on Winodws and those functions showed me totally incorrect results. (I guess that is my mistake in somewhere in timer functions.) Instead of using those functions, I looked at AMD SDK timer and this gave me reliable measurement.

I will post the result again using clWaitForEvents().

Thank you very much!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

GPU : 2 WriteBuffer, 1 NDRangeKernel, 1 ReadBuffer

CPU : 1 NDRageKernel

Above profiling figures are parts of single profiling information.

I categorized three cases based on behaviors. What I want to see is whether NDRangeKernel of the CPU and the first WriteBuffer of the GPU start at almost same time line or not.

Case1.

The CPU and GPU executions are serialized. This case shows that after the CPU had finished its kernel computation, the first WriteBuffer of the GPU have started to transfer.

Case2.

Both NDRangeKernel of the CPU and the first WriteBuffer of the GPU have started in almost similar time line. This is what I exactly expected.

Case3.

NDRangeKernel of the CPU and the first WriteBuffer of the GPU are executed at similar time line, but the first WriteBuffer of the GPU took a long time compared with usual case. The PCIe bandwidth of my machine is usually up to 3.0GB/s writing, but in this first buffer case it is 300~500MB/s. In my opinion, the CPU is busy for computing saxpy, so PCIe handling is somewhat delayed since I assigned work-group size as 1024, which is the maximum number of work-group size.

On the other hands, some interesting patterns have been found.

Case1 and Case3 occured when the CPU computes more than the GPU. If GPU is in heavy computation, Case2 was observed.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

You have listed 3 cases above. So, I believe you randomly hit one of these cases as you run your application many number of times. Is that right?

Also, Please let me know if clWaitForEvents() helped you... And yes, Please clFlush() on all CQs before waiting...

Just a word of caution on Sub-buffer idea that I mentioned above:

I think, sub-buffer is an OpenCL 1.2 concept -- May not work on OpenCL vendors who have not matured to 1.2 yet, like for example: nvidia.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

That is right.

I have two test machines with almost same devices, call those two machines A and B. For some reasons, AMD profiler is not working properly in machine A. But I was able to get timing results without profiling data from machine A using clWaitForEvents(). The result shows almost same trend like (CPU_times(n)+GPU_times(nn-n)) rather than max(CPU_times(n),GPU_times(nn-n)).

I will profile soon using machine B, which is able to profile the program.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Now I tested with profiler.

Case 1, 2, and 3 also observed again randomly.

I applied clWaitForEvent() and AMD ADK Timer which uses QueryPerformanceCounter(). I put clFlush() in all equeueing functions and removed CL_PROFILEING_ENABLE flags for each commandqueue.

I attatched profile information at the original post.

Thank you.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

"Case1 and Case3 occured when the CPU computes more than the GPU. If GPU is in heavy computation, Case2 was observed."

One quick suggestion is to use device fission extension. This should block one CPU core for handling host-device communications, and rest of them can used as CPU device. Anyways i will try to read through your code, and will let you know.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you. I don't have any APU right now, so someone may try to test thist simple code.

My final goal is to find spliting ratio between a CPU and a GPU for saxypy (BLAS level 1), matrix vector multiplication (BLAS level 2), and matrix matrix multiplication (BLAS level 3). At first, I want to get real data reasonably, and then analyze it with hardware parameters like memory bandwidth, flops, kernel overheads, and latencies. Finally, I may be able to express this real data in terms of time function with given "nn" variable.

And I have two simple questions.

1. What is the ideal work-group size for CPU?

I know it is definitely depending on the kenel. But in the case of saxpy operation, this is very light and shows very clear results when "nn" increases. I have AMD FX 8120, which has eight cores, and this shows peak performance when I assigned the maximum work-group size as 1024. In my thought, since FX 8120 has 8 cores, it will be natural to assign work-group size as 8. But in this case, the performance is much lower than the 1024 case.

2. Max memory allocation

HD 7950 has 2GB global memory, and "Global memory size" in clinfo also shows 2GB. But "Max memory allocation" shows 25% of 2GB, which becomes 512MB... I have read some posts that the "Max memory allocation" will be about 60% of actual global memory size. Moreover, CPU clinfo also shows 25% of its global memory size. How can I use more "Max memory allocation"?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Max Mem Allocation, I believe, is for 1 OpenCL clCreateBuffer() call. You can still use the rest of memory with multiple allocations.

Please cross-check with the spec on the exact paraeter which you are querying...

+

Device Fission has nothing to do with APU. You can run the DeviceFission sample in the APP SDK and check out the code

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Okay. I understand. Thank you for kind reply.

I will look at the DeviceFission SDK and try that.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

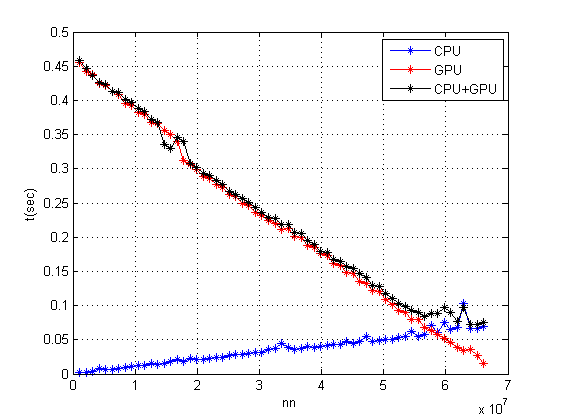

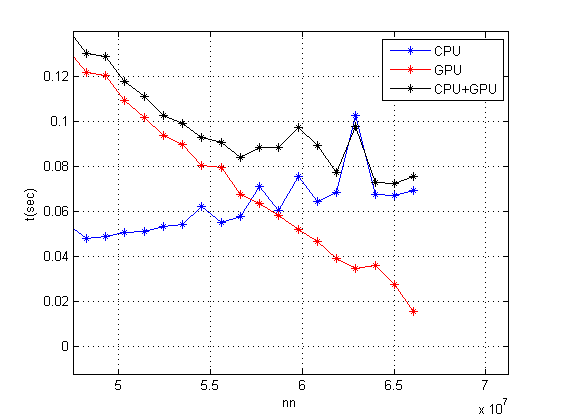

I got some results. I will start with a claim.

[CLAIM]

WriteBuffer() to the GPU device memory is delayed when the CPU runs heavy kernel.

By using clCreateSubDevice(), I assigned 4 cores of 8 cores CPU (FX 8120) as SubDevice_CPU so that remaining 4 cores can do some I/O operations.

Here are results. Still it does not show max(CPU_time, GPU_time).

Magnified figure around cross section.

I want to see further, but the CL_MEM_OBJECT_ALLOCATION_FAILURE kept showing whenever totally allocated GPU buffers (not one buffer object) exceed the MAX MEMORY ALLOCATION.

Now I am trying to profile this result. How do you think about my claim?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

CL_MEM_OBJECT_ALLOCATION_FAILURE I propoesd long ago that OpenCL runtime should swap buffers from device memory if it can't fit them together and return error only when sum off all buffers needed for current kernel execution exceed device memory size.

What is on X axis?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I did a mistake at labeling X axis. Through this post, the X axis should be "n". I have fixed "nn" which is the length of each vector in saxpy operation. I gave "n" numbers of element to the CPU and "nn-n" numbers of element to the GPU by vaying "n" from 1K to 47K or 63K with step size 1K.

nou, Could explain more about CL_MEM_OBJECT_ALLOCATION_FAILURE? What was "device memory size"? Is that "Max memory allocation" or "Global memory size" in clinfo?

Thanks.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Global memory size. I didn't try it but maybe migrating memory object onto CPU device may free up device memory. IIRC CPU device doesn't have this limitation. So try migrate mem object with clEnqueueMigrateMemObjects but also use clFinish as it may need finish migration before enqueue of another kernels.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for sharing the results. It looks interesting. Can you also attach your latest code (with the latest reply). Will try to run it here.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Interesting. You can also do some more experiments after going through SimpleMultiDevice Sample. IMHO, that sample never gave exact overlap, but partial kernel overlap is easily reproducible.

BTW some timer header is missing from your attachment.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Ah.. Header files are from AMD BufferBandwith SDK.

I have two more ideas.

1. Test with lager size

Still I could not solve maximum memory allocation error. In my machine, if all the allocated buffers (sum of all) exceed the "Max memory allocation", I got CL_MEM_OBJECT_ALLOCATION_FAILURE. From Khronos website (http://www.khronos.org/registry/cl/sdk/1.0/docs/man/xhtml/clGetDeviceInfo.html), I found that CL_DEVICE_MAX_MEM_ALLOC_SIZE tells me 1/4 of global memory size is defined as "Max memory allocation". Is there any way to use full global memory?

2. Test more complex example

I will test again for BLAS level 2 and 3. The cross section point will move to left, so I can check some behaviors when CPU computes more than GPU.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

1. Test with lager size

Still I could not solve maximum memory allocation error. In my machine, if all the allocated buffers (sum of all) exceed the "Max memory allocation", I got CL_MEM_OBJECT_ALLOCATION_FAILURE. From Khronos website (http://www.khronos.org/registry/cl/sdk/1.0/docs/man/xhtml/clGetDeviceInfo.html), I found that CL_DEVICE_MAX_MEM_ALLOC_SIZE tells me 1/4 of global memory size is defined as "Max memory allocation". Is there any way to use full global memory?

For larger memory sizes, check:

http://devgurus.amd.com/message/1288008#1288008

http://devgurus.amd.com/message/1287163#1287163

2. Test more complex example

I will test again for BLAS level 2 and 3. The cross section point will move to left, so I can check some behaviors when CPU computes more than GPU.

You can checkout clAmdBlas for testing level 2 & level3 routines. You need not implement the kernels.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

beware of using this GPU_MAX_ALLOC_PERCENT and GPU_MAX_HEAP_SIZE as it can lead that buffers stays in RAM and GPU is read then through PCIe bus which lead to slow execution. at least in older version of SDK it was true.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for himanshu.gautam and nou.

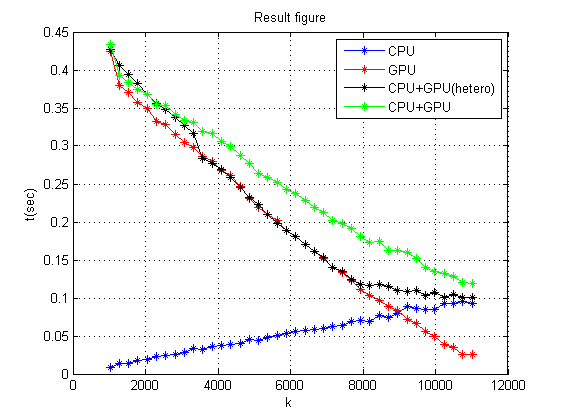

I tried BLAS level 2 matrix vector multiplication and I got almost similar results with BLAS level 1 saxpy algorithm. The possible reason why thoes figures showed somewhat identical behavior is that BLAS algorithms tend to be data transfer dominant algorithms. Here is the result.

The green line was added to express just sum of CPU and GPU (the red line value + the blue line value). I have n times n matrix "A", and moving variable k means k times n matrix to the CPU and (n-k) times n matrix to the GPU.

There exists some overheads around cross section point and around starting position. I have a lot of interests in the overhead around cross section point and want to figure out why.

I have looked at all useful links you recommended and some posts on the web about maximum memory allocation. I was able to get proper size of "Max alloc memory" using GPU_MAX_ALLOC_PERCENT=100. I double checked it using memtestCL by passing maximum available memory, and it worked fine. Strangely, the program I attatched in my original post kept showing same error CL_MEM_OBJECT_ALLOCATION_FAILURE even though I set the GPU_MAX_ALLOC_PERCENT variable correctly. And even the clGetDeviceInfo() for CL_DEVICE_MAX_MEM_ALLOC_SIZE tells me right information. The memory object error comes up whenever I allocate over 512MB totally which was the original max memory allocation value before setting GPU_MAX_ALLOC_PERCENT as 100. The one thing left to do is reinstalling OS. Does anyone else have the same problem?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Are you using any flags while allocating the buffer? (As per your attachment - there is none except Read/write).

Also, I see that you are allocating many buffers (some with USE_HOST_PTR) as well.

Are you sure, if you are not running out of Host memory? If you are allocating host buffers of size 512MB, your mallocs() may also be failing.

If you post your exact code here, (if different from the attachment in first post), I will try it here.

Thanks,